Conduct of the OneSKY Tender

The objective of this audit was to assess whether the OneSKY tender was conducted so as to provide value with public resources and achieve required timeframes for the effective replacement of the existing air traffic management platforms.

Summary and recommendations

Background

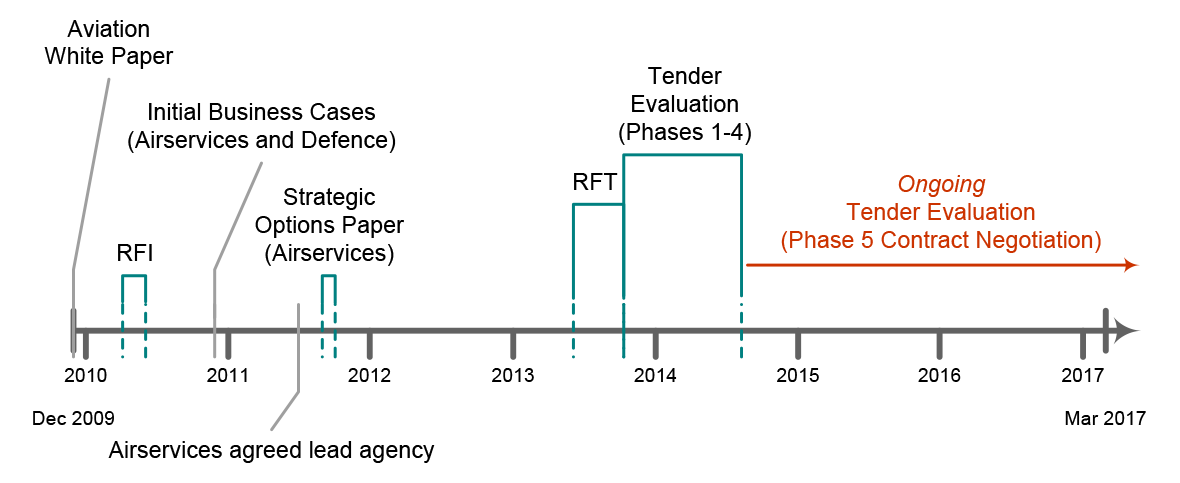

1. The civil air traffic management system operated by Airservices Australia (Airservices) and the separate system operated by the Department of Defence (Defence) for military air traffic are both due to reach the end of their economic lives in the latter part of the current decade. The December 2009 National Aviation White Paper identified expected benefits from synchronising civil and military air traffic management through the procurement of a single solution to replace the separate systems.

2. Under the OneSKY Australia program, Airservices is the lead agency for the joint procurement of a Civil Military Air Traffic Management System (CMATS). CMATS is intended to be delivered through contracts between Airservices and the successful tenderer, with a separate agreement being established between Airservices and Defence for the on-supply of services and goods/supplies. A Request for Tender (RFT) for the joint procurement was released on 28 June 2013. The RFT closed on 30 October 2013, with six respondents (including from the incumbent providers of both the Airservices and Defence ATM platforms).

3. On 27 February 2015, it was announced that an advanced work contracting arrangement would be entered into with Thales Australia, as a next step for the delivery of the OneSKY initiative.

Audit objective and criteria

4. The objective of the audit was to assess whether the OneSKY tender was conducted so as to provide value with public resources and achieve required timeframes for the effective replacement of the existing air traffic management platforms. To form a conclusion against the audit objective, the following high level criteria were adopted:

- Was the OneSKY tender process based on a sound business case and appropriate governance arrangements?

- Did the tender process result in the transparent selection of a successful tender that provided the best whole-of-life value for money solution at an acceptable level of cost, technical and schedule risk, consistent with the RFT?

- Did negotiations with the successful tenderer result in constructive contractual arrangements that ensured continuity of safe air traffic services, the managed insertion of an optimum system of systems outcome within required timeframes, and demonstrable value?

5. The Conditions of Tender had envisaged contracts would be signed in April 2015. As of January 2017, whilst a contract for the entire acquisition and support scope has not yet been executed, a number of contracts have been entered into through an Advanced Work Supply Arrangement for the acquisition contract scope, the first of which commenced in July 2015. Defence has advised its Ministers that contract signature for the entire acquisition and support scope is unlikely to occur before mid-2017. As such, this performance audit has not examined criterion 3.

Conclusion

6. The design of the OneSKY tender process was capable of producing a value for money outcome. The successful tenderer was assessed as significantly stronger in terms of technical capability as well as involving much lower schedule risk. The successful tenderer had also submitted significantly higher acquisition and support prices than the other tenderers. Adjustments made by the Tender Evaluation Committee (TEC) to tendered prices suggested that the successful tenderer offered the lowest cost solution. The TEC’s approach did not highlight to decision-makers the trade-off that needed to be made between the technical merits and cost of the competing tenders. There have also been significant delays with the conduct of the OneSKY tender process.

7. A two-stage tender process was employed involving a Request for Information (RFI) followed by a Request for Tender (RFT). This approach was appropriate for the scale, scope and risk of the joint procurement. It promoted a healthy level of competition for the procurement, with 23 responses to the RFI and nine tender responses received from six tenderers (two respondents offered a total of three alternate tenders). The RFT was released to the market in June 2013, 18 months later than the expected December 2011 release. This delay was further compounded by tender evaluation activities taking more than twice as long as planned. Contracts are unlikely to be signed prior to mid-2017, at least 40 months after tender evaluation commenced.

8. The evaluation governance arrangements were appropriate. They provided an approach that was capable of identifying the best value for money tender. They also guarded against the conflicts of interest issues identified in ANAO Report No.1 2016–17 impacting on the tender evaluation process and outcome.

9. Tender evaluation proceeded through the planned phases, with competitive pressure maintained until late in the process. The records of the evaluation process evidence that the successful tender was assessed to be better than the other remaining candidates from a technical and schedule risk perspective. It is not clearly evident that the successful tender offered the best value for money. This is because adjustments made to tendered prices when evaluating tenders against the cost criterion were not conducted in a robust and transparent manner. Those adjustments meant that the tenderer that submitted the highest acquisition and support prices was assessed to offer the lowest cost solution. It is also not clearly evident that the successful tender is affordable in the context of the funding available to Airservices and Defence.

Supporting findings

Design of the tender process

10. Airservices and Defence took sufficient steps to generate market interest in the procurement process including by running a two-stage tender process—an RFI followed by an RFT.

11. An overarching business case was not prepared for OneSKY. Separate business cases were developed by Airservices and Defence. The Airservices business case has not been reviewed or updated since 2011. In December 2016, Airservices advised the ANAO that it had commenced an update of its business case, which is due for completion in the first quarter of 2017. Shortcomings in Defence’s 2011 business case were identified by a review that was commissioned after Ministers became aware of a significant increase in the initial estimated acquisition costs when a 2014 business case was prepared (on the basis of tender responses).

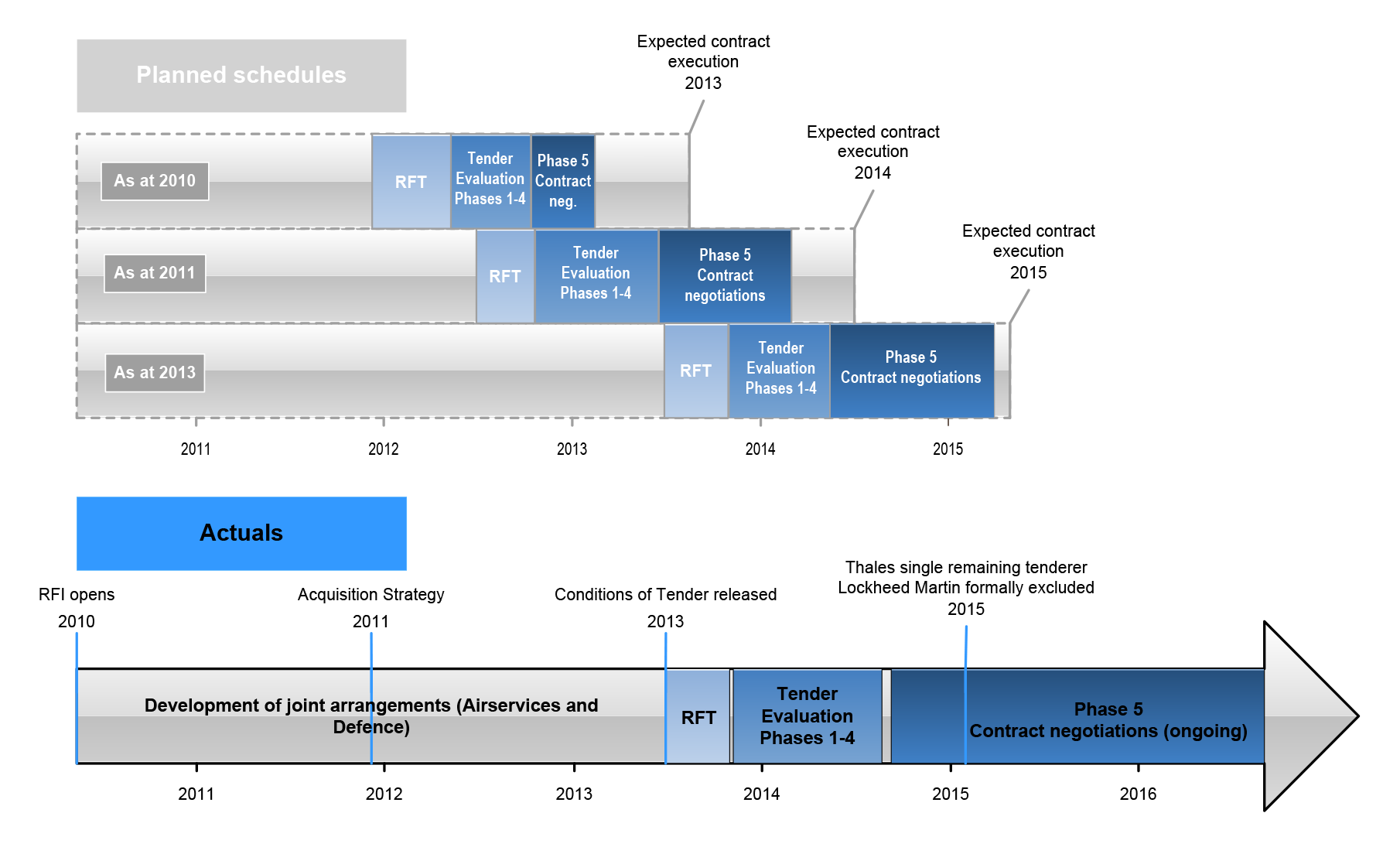

12. Significant delays occurred with the conduct of the tender process in comparison to planned timeframes and when decision-makers had been told that the existing systems would need to be retired. Of note was that the RFT was released to the market in June 2013, 18 months later than the expected December 2011 release. This was compounded by significant delays during tender evaluation and contract negotiations. Specifically, there was a delay of four months during Phase 3 of the tender evaluation, and as of January 2017, contract negotiations continue to exceed the original timeframe, with negotiations running 18 months over the planned 11 month schedule. In July 2016, Defence advised its Ministers that contract signature was ‘unlikely to occur’ before mid-2017.
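The delay figures quoted above follow from simple date arithmetic. The sketch below is illustrative only: the helper `months_between` and the variable names are hypothetical, the day of month for the planned release is assumed, and the elapsed negotiation time is merely the sum implied by the quoted figures.

```python
from datetime import date


def months_between(start: date, end: date) -> int:
    """Whole calendar months from start to end, ignoring the day of month."""
    return (end.year - start.year) * 12 + (end.month - start.month)


# Expected RFT release: December 2011 (day of month assumed for illustration).
planned_rft_release = date(2011, 12, 1)
# Actual RFT release: 28 June 2013.
actual_rft_release = date(2013, 6, 28)

rft_delay_months = months_between(planned_rft_release, actual_rft_release)

# Contract negotiations: planned 11 months, reported as running 18 months over.
planned_negotiation_months = 11
negotiation_overrun_months = 18
implied_elapsed_months = planned_negotiation_months + negotiation_overrun_months

print(f"RFT release delay: {rft_delay_months} months")
print(f"Implied negotiation time to January 2017: {implied_elapsed_months} months")
```

The calendar-month difference reproduces the 18 month delay in the RFT release stated in the paragraph.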

13. Appropriate evaluation governance arrangements were established. They provided an approach that was capable of identifying the best value for money tender. They also guarded against potential conflicts of interest impacting on the tender evaluation process and outcome. Of note was that evaluation findings and recommendations were built up from detailed evaluation work that reflected input from a large number of Airservices employees and contractors as well as Defence personnel.

Tender outcomes

14. The evaluation processes for the progression, shortlisting and exclusion of tenders proceeded as envisaged with competitive pressure maintained until the conclusion of the Phase 4 tender evaluation. Decisions were then taken to set aside, and later exclude, the second-ranked tenderer from further consideration rather than enter into parallel negotiations with two tenderers. The reasons for this decision were adequately recorded.

15. An inadequate approach to summarising the evaluation of tenders was employed. Specifically, the approach of ranking each tender against the criteria did not provide a suitable means of identifying the extent to which one tenderer had been assessed as better, or worse, than other respondents, or how well each tenderer had been assessed as meeting the relevant criterion aspect.

16. The evaluation of tendered prices against the cost criterion was not conducted in a robust and transparent manner. There was not a clear line of sight across the phases of the evaluation and the work of the Tender Evaluation Working Groups and the Tender Evaluation Committee (TEC) in relation to the adjustments made by the TEC to tendered prices for evaluation purposes. Of particular significance was the lack of adequate records explaining the TEC adjustments. Those adjustments suggested that the successful tenderer offered the lowest cost solution when the acquisition and support prices it submitted were actually considerably higher than those of the other tenderers.

17. The records of tender evaluation activities and the outcomes did not demonstrate that sufficient attention had been given to whether the tendered acquisition and support costs were affordable for either Airservices or Defence.

Summary of entity responses

18. Airservices and Defence provided formal comments on the proposed audit report, which are included at Appendix 1. Airservices also provided a summary response, as set out below.

Airservices Australia

Airservices notes the report. Airservices welcomes the ANAO’s conclusion that the tender process design was appropriate and that the evaluation governance both provided an approach capable of identifying the best value for money tender and guarded against the perceived conflict of interest issues that had been identified in the ANAO’s previous audit report as requiring further investigation.

Airservices notes that the report does not make any recommendations for improvement.

Airservices has provided further clarity on some of the matters raised as an attachment to this report. Airservices remains firmly of the view that the tender process, having been appropriately designed, has identified the best value for money tender at an acceptable level of risk, having regard to all of the evaluation criteria.

1. Background

The OneSKY Australia program

1.1 Airservices Australia (Airservices) is responsible for managing Australia’s airspace in accordance with the Chicago Convention on International Civil Aviation. Airservices is principally funded by revenue from industry, involving charges for enroute, terminal navigation and aviation rescue and firefighting services. The level of charges is based on five year forecasts Airservices prepares of activity levels (including traffic volumes), operating costs and capital expenditure. As the monopoly provider of such services within Australia, Airservices’ prices are regulated by the Australian Competition and Consumer Commission. Where Airservices proposes to increase its prices, it is required to notify the Australian Competition and Consumer Commission, which is responsible for assessing the proposal and is able to object to the proposed price.

1.2 Airservices provides civilian airspace management via The Australian Advanced Air Traffic Management System (TAAATS). The existing air traffic management (ATM) platform was implemented in the late 1990s by Thales Australia (Thales), and was commissioned in March 2000. There has been a continual program of incremental software upgrades to meet new requirements and technologies. The platform’s life and associated contract with Thales were due to expire in 2015. A further hardware upgrade and associated deed of variation to the existing support contract with Thales extended the operational capacity of the existing system, but Airservices identified limits to the capacity to extend the economic life of type beyond 2018. In 2010, Airservices initiated the Air Traffic Management Future Systems program (AFS program) to consider future ATM options.

1.3 The Department of Defence (Defence) is responsible for military aviation operations and air traffic control (including at airports with a shared civil and military use). At approximately the same time as the TAAATS platform was commissioned, Defence commissioned a separate ATM platform for military aircraft, known as the Australian Defence Air Traffic System (ADATS). ADATS is supplied under contract by Raytheon Australia Pty Ltd (Raytheon). ADATS is similarly due to reach the end of its useful life in the latter part of this decade. Following the cessation of initial consideration of systems harmonisation, Defence initiated phase three of Project AIR5431 to replace ADATS.

1.4 The December 2009 National Aviation White Paper identified expected benefits from synchronising civil and military air traffic management. The activities identified in the White Paper for the implementation of a comprehensive, collaborative approach to nation-wide air traffic management included the procurement of a single solution to replace the separate systems.

Conduct of the procurement process

1.5 Under the Commonwealth’s financial framework, Airservices is not required to comply with the Commonwealth Procurement Rules (CPRs), which are issued by the Finance Minister and apply to all non-corporate Commonwealth entities. Rather, as is the case with most corporate Commonwealth entities, Airservices develops and implements its own procurement policies and procedures. Those policies and procedures are required to meet general obligations on the organisation that it promote proper use of resources and employ effective internal controls.

1.6 Following the release of the 2009 Aviation White Paper, delivery of the joint initiative commenced in 2010, with a Request for Information (RFI) issued to industry (refer Figure 1.1 below for key dates and events in the delivery of the OneSKY program). The Airservices AFS program and Defence AIR5431 Phase 3 project are now represented jointly as OneSKY Australia. Within the overall OneSKY Australia program (OneSKY), Airservices is the lead agency for the joint procurement of a Civil Military Air Traffic Management System (CMATS).

Figure 1.1: OneSKY program—key dates and events

Source: ANAO representation of key OneSKY dates.

1.7 CMATS is intended to be delivered through contracts between Airservices and the successful tenderer, with a separate agreement being established between Airservices and Defence for the on-supply of services and goods/supplies. A Request for Tender (RFT) for the joint procurement was released on 28 June 2013. The RFT closed on 30 October 2013, with six respondents (including from the incumbent providers of both the Airservices and Defence ATM platforms).

1.8 On 27 February 2015, it was announced that an Advanced Work contracting arrangement would be entered into with Thales, as a next step for the delivery of the OneSKY initiative. As at January 2017, negotiations for the finalisation of acquisition and support contracts for the provision of the combined civil-military system were ongoing.

Lead agency and governance arrangements

1.9 In 2009 Airservices and Defence agreed to collaborate on the harmonisation of a civil military ATM system through a partnership approach, and established the Australian Civil Military ATM Committee to manage the coordination of future joint ATM initiatives. The Committee, which was sponsored by the Chief Executive Officer of Airservices and the Chief of Air Force of Defence and included representatives from the Department of Finance, Department of Infrastructure and Civil Aviation Safety Authority, was a discussion only forum, with all outcomes produced by the Committee considered as recommendations. In 2010, Airservices and Defence continued to run separate approval processes, and would initiate separate project groups to implement the Committee’s recommendations (if approved internally). In order to support alignments of processes and timing within projects the Committee was able to establish steering groups between the ‘parallel’ projects.

1.10 In 2011, the Committee’s governance structure continued, with Airservices and Defence co-sponsoring the publication of the Joint Strategic Plan for Air Traffic Management Capability, which provided for the alignment of individual and joint planning. In 2011 an Initial Acquisition Strategy was presented to the Airservices Board, which stated that Airservices ‘will lead the process including the Joint Program on behalf of both organisation and would manage the contract […] with the supplier on behalf of both organisations.’

1.11 The 2012 Operating Level Agreement formally established the role of a Joint Project Team and the existence of a Joint Program Office, to be led by two Joint Program Directors, each of whom would be responsible to either Airservices or Defence. The Memorandum of Cooperation signed between Airservices and Defence in April 2013 expanded on the lead agency model, stating that:

It has been agreed that Airservices will operate as the Lead Agency for the acquisition and sustainment of CMATS. According, as Lead Agent, Airservices will, with Integrated participation by Defence personnel and subject to Defence approval right […] have responsibility for:

- managing the joint program;

- developing and issuing the RFT […];

- undertaking the tender evaluation;

- making a short listing recommendation;

- developing the tender negotiation directive and contract negotiations;

- conducting offer refinement activities and contract negotiations;

- making the final source selection recommendation;

- entering into separate acquisition and sustainment contract with the preferred supplier; and

- in doing so, will apply such Defence policies, as set out in the Conditions of Tender (COT), to meet both Airservices and Defence joint and unique requirements.

1.12 The Memorandum of Cooperation set out Defence’s role as to ‘support all phases of the program through the provision of embedded teams providing relevant subject matter expertise and will actively participate in all applicable CMATS procurement activities so as to assure itself that the new system and sustainment arrangements are appropriate to meet Defence’s requirements.’

1.13 In 2013 the initial Acquisition Strategy was revised to incorporate the expansive lead agency tasking, stating that Airservices would take on ‘a more primary role’. This resulted in Airservices assuming responsibility for ‘day to day coordination of a market approach’ as well as leading the ‘evaluation process […] including facilitating negotiation with preferred tenderer/s.’ The revised Acquisition Strategy noted that, despite ‘a multi-tiered joint governance structure [being] originally detailed in the [Operating Level Agreement] which was initially used to direct and govern OneSKY Australia’, Airservices and Defence ‘will have regard to their own internal approvals, decision-making processes, delegations, instructions, policies or lines of authority as set out in the [Memorandum of Cooperation].’

Cost sharing arrangements between Airservices and Defence

1.14 The Joint Operational Concept document issued in 2010 recognised Airservices’ and Defence’s intent to set out ‘principles for cost allocation and recovery between the organisations’ for costs incurred by either entity relating to the OneSKY program. In 2012, an Operating Level Agreement between Airservices and Defence was developed. It set out the process for cost recovery (including a dispute process) and stated that costs would be shared when they were incurred for the benefit of the Program.

1.15 Airservices and Defence also agreed to share the final tendered acquisition and support costs relating to CMATS. Specifically, it was agreed that Airservices would directly contract to acquire and support CMATS, with Airservices to provide for the on-supply of the Defence acquisition and support elements of CMATS. The On-Supply Agreement states that for a common cost element, the cost allocation would be 50:50. In order to assist Defence in its funding approval processes, Airservices provided Defence with a Not-to-Exceed price of $255 million for the common elements of CMATS.

1.16 In March 2017, Defence advised the ANAO that:

The cost allocation between Defence and Airservices has not been settled. The cost allocation of 50:50 was an initial allocation. The parties had intended that this will be reviewed once the final requirements and contract price have been (or close to be) agreed. Therefore, the On-Supply Agreement (OSA) restricts the 50:50 cost allocation until the end of Phase 5.

Audit approach

1.17 This performance audit is the second in a two-stage approach by the ANAO to requests received from the then Minister for Infrastructure and Regional Development and the Senate Rural and Regional Affairs and Transport Legislation Committee that the ANAO examine the implementation of the OneSKY program.

1.18 The first audit was tabled in August 2016 (ANAO Report No.1 2016–17). Its objective was to examine whether Airservices had effective procurement arrangements in place, with a particular emphasis on whether consultancy contracts entered into with the International Centre for Complex Project Management (ICCPM) in association with the OneSKY Australia program were effectively administered.

1.19 The objective of this second audit was to assess whether the OneSKY tender was conducted so as to provide value with public resources and achieve required timeframes for the effective replacement of the existing air traffic management platforms. To form a conclusion against the audit objective, the following high level criteria were adopted:

- Was the OneSKY tender process based on a sound business case and appropriate governance arrangements?

- Did the tender process result in the transparent selection of a successful tender that provided the best whole-of-life value for money solution at an acceptable level of cost, technical and schedule risk, consistent with the RFT?

- Did negotiations with the successful tenderer result in constructive contractual arrangements that ensured continuity of safe air traffic services, the managed insertion of an optimum system of systems outcome within required timeframes, and demonstrable value?

1.20 The Conditions of Tender had envisaged contracts would be signed in April 2015. As of January 2017, whilst a contract for the entire acquisition and support scope has not yet been executed, a number of contracts have been entered into through an Advanced Work Supply Arrangement for the acquisition contract scope, the first of which commenced in July 2015. Defence has advised its Ministers that contract signature for the entire acquisition and support scope is unlikely to occur before mid-2017. As such, this performance audit has not examined criterion 3.

1.21 In light of the findings and conclusions of ANAO Report No.1 2016–17, this second audit also examined any impact of potential conflicts of interest on the tender evaluation process.

1.22 The audit examined relevant records, including email traffic, relating to the conduct of the OneSKY tender and associated entity and government approval processes. As lead agency, Airservices is responsible for maintaining all records associated with the conduct of the tender (which involved Defence personnel being embedded within evaluation and decision-making structures established within Airservices). Relevant Airservices, Defence and Department of Finance records relating to the broader policy and governance aspects of the OneSKY procurement were also examined.

1.23 The audit was conducted in accordance with ANAO auditing standards at a cost to the ANAO of approximately $414 000.

1.24 The team members for this audit were Emilia Schiavo, Hannah Conway, Angus Hirst, Tina Long and Brian Boyd.

2. Design of the tender process

Areas examined

The ANAO examined the design of the OneSKY tender process.

Conclusion

A two-stage tender process was employed involving a Request for Information (RFI) followed by a Request for Tender (RFT). This approach was appropriate for the scale, scope and risk of the joint procurement. It promoted a healthy level of competition for the procurement, with 23 responses to the RFI and nine tender responses received from six tenderers (two respondents offered a total of three alternate tenders). The RFT was released to the market in June 2013, 18 months later than the expected December 2011 release. This delay was further compounded by tender evaluation activities taking more than twice as long as planned. Contracts are unlikely to be signed prior to mid-2017, at least 40 months after tender evaluation commenced.

The evaluation governance arrangements were appropriate. They provided an approach that was capable of identifying the best value for money tender. They also guarded against the conflicts of interest issues identified in ANAO Report No.1 2016–17 impacting on the tender evaluation process and outcome.

Were sufficient steps taken to generate market interest in the procurement process?

Airservices and Defence took sufficient steps to generate market interest in the procurement process including by running a two-stage tender process—an RFI followed by an RFT.

2.1 A joint Request for Information (RFI) was issued on 14 May 2010. Responses were due by 9 July 2010. The Conditions of the RFI outlined that:

- Airservices and Defence had developed a joint operational concept and would consider a range of options for a future national air traffic management solution that (in normal operations mode) operates as one system; and

- a future system will be acquired and implemented under two projects that were being coordinated by two project teams with the objective of producing a national system.

2.2 The RFI sought to ascertain information from industry on a range of issues, including capability, technical risks, and indicative cost information. Another key outcome of the RFI was to develop an overarching program schedule for the joint development of a national ATM system. The preliminary estimates for key future milestones set out in the RFI were:

- complete review of RFI responses: September/October 2010;

- first pass approval/business case approvals: 2010–11; and

- final operational capability: 2017–18.

2.3 The RFI process did not restrict participation in the Request for Tender (RFT) stage. Specifically, the Conditions of the RFI outlined that participation would not entitle the respondents to participate in the RFT process, and non-participation in the RFI process would not prevent participation in the RFT process.

2.4 Twenty-three responses were received. This comprised six full responses, nine partial responses and eight ‘out of scope’ responses (for example, offers to provide consultancy services).

2.5 A Review Plan was prepared and responses were examined in a manner consistent with that plan.

2.6 Consistent with the planned timeframe, the RFI Review Report was finalised on 30 September 2010. The key conclusions of the RFI process included that:

- industry has the capability and capacity to deliver a national air traffic management system, with a competitive market for these systems;

- the level of technological development, in the most part, was adequate to meet the requirements of a national system;

- an implementation timetable of approximately five years from contract signature appeared to be a reasonable basis for planning; and

- sufficient data was obtained to inform initial business cases on acquisition costs, but not for through-life costing.

2.7 The primary purpose of the RFI process was to obtain information from industry. It also had the benefit of raising awareness of the procurement, and generating interest from potential suppliers.

2.8 There was also direct engagement with industry via a supplier briefing on 15 December 2011 and the release of a draft Joint Function and Performance Specification (JFPS) to obtain industry comment and feedback to test/validate key requirements.

2.9 A Supplier Engagement Plan was developed to set out the strategy by which industry suppliers would be engaged throughout the procurement process. This document recorded the key engagement that had already occurred and set out the strategy to be employed from the period prior to the release of the joint RFT, through to contract execution.

2.10 On 11 December 2012 potential suppliers were updated on the continuing development of the RFT pack. Also on this date, a revised JFPS and Common Operating Concept Document were released.

2.11 The success of the engagement approach was evident from the RFI receiving 15 full or partial responses followed by six parties responding to the RFT with a total of nine tender responses (two respondents offered a total of three alternate tenders).

Were sound business cases developed?

An overarching business case was not prepared for OneSKY. Separate business cases were developed by Airservices and Defence. The Airservices business case has not been reviewed or updated since 2011. In December 2016, Airservices advised the ANAO that it had commenced an update of its business case, which is due for completion in the first quarter of 2017. Shortcomings in Defence’s 2011 business case were identified by a review that was commissioned after Ministers became aware of a significant increase in the initial estimated acquisition costs when a 2014 business case was prepared (on the basis of tender responses).

2.12 Business cases are an important element in procurement governance, setting out the initial objectives, scope, risks, timeframe, cost and associated value for money considerations. For major procurement projects such as OneSKY, it is reasonable to expect that a robust business case, including cost-estimates, is developed. There is also the expectation that the business case retains its currency and relevance throughout the life of the procurement so that it continues to successfully inform and support project decisions.

2.13 Airservices and Defence produced separate business cases in 2011. This aligned with the governance approach taken at that time (refer paragraphs 1.9–1.12). Among other costs, Airservices’ business cases estimated costs for the entire ATM system, while Defence’s business cases considered only Defence’s share of the ATM system costs and the additional Defence-specific items also required from the procurement (refer paragraphs 1.15–1.16 on the cost sharing arrangement between Airservices and Defence). Neither Airservices’ nor Defence’s 2011 business cases were sufficiently robust, as neither considered the costs of integration and harmonisation between the two entities. Additionally, at no point has a joint business case been developed.

Airservices

2.14 Drawing on the results of the RFI process, an Initial business case was finalised in January 2011. The purpose of this business case was to support the preliminary assessment of the options available to address the future ATM system requirements of Airservices and its stakeholders. It included an estimate of $727.6 million for the present value of capital and operating costs of a joint tender, between Airservices and Defence, to replace the existing ATM systems through an open tender process.

2.15 This was followed in October 2011 by the Air Traffic Control Future Systems Strategic Options Paper. Joint acquisition and management for the future ATM system was the preferred outcome. This was, again, a business case prepared by, and focused on, Airservices. Its specific objective was to provide the required analysis to allow Airservices’ management to consider the various design options available and select the most appropriate options against cost and other non-financial criteria on the basis of which the AFS program will then progress the RFT and other activities.

2.16 The Strategic Options Paper provided whole-of-life costs for all eight options proposed, which included the cost of the replacement system, the cost of the facilities required and internal implementation costs to Airservices. In October 2011, the Airservices Board endorsed Option F7—a joint Airservices and Defence replacement option.5

2.17 The Strategic Options Paper estimated capital expenditure on systems between 2011–12 and 2032–33 would be $476 million, with operating expenditure on systems over this period of $494 million (a total estimated cost of $970 million).6 Based on these costs, the Strategic Options Paper estimated that the net present value of whole-of-life project costs for implementing Option F7 was $734.6 million (refer Table 2.1 for a cost breakdown).

Table 2.1: Option F7—whole-of-life project related costs

| Project related costs | | Option F7 Budget ($ m) (a) |
| --- | --- | --- |
| System and Facilities Costs | Project Related Capital Expenditure | $359.4 |
| | Project Related Operating Expenditure | $375.2 |
| Total Project Related Cost | | $734.6 |

Note a: Airservices advised that the Strategic Options Paper referenced the financial analysis of the Initial business case, which contained estimated costs that were based on the market research acquired during the RFI.

Source: ANAO analysis of Airservices’ ATC Future Systems Strategic Options Paper.
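The totals quoted in paragraph 2.17 and Table 2.1 can be checked with simple addition. The sketch below is illustrative only: the variable names are invented here, and the figures are those reported above, in $ million.

```python
from decimal import Decimal

# Nominal estimates for 2011-12 to 2032-33 (paragraph 2.17), in $ million.
capex_nominal = 476
opex_nominal = 494
nominal_total = capex_nominal + opex_nominal

# Net present value figures for Option F7 (Table 2.1), in $ million.
# Decimal avoids binary floating-point rounding on values such as 359.4.
capex_npv = Decimal("359.4")
opex_npv = Decimal("375.2")
npv_total = capex_npv + opex_npv

print(nominal_total)  # 970
print(npv_total)      # 734.6
```

Both sums agree with the reported totals of $970 million and $734.6 million.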

2.18 Airservices advised the ANAO that the Strategic Options Paper’s estimate was the only approved costing for Airservices since the tender process commenced. The estimated figure was the ‘best available cost guide on which to base decisions’ during the affordability assessments that were to be performed during the tender evaluation phases (refer paragraphs 3.71–3.73).

2.19 Upon receipt of the tender responses, Airservices did not update its business case to reflect the new information available, particularly on price. This resulted in Airservices’ business cases losing currency and relevance to inform and support future project decisions. A November 2015 Defence Gate Review identified the need for an ‘immediate strategic review of the business case’ from Airservices’ and Defence’s perspectives. The two key reasons were the time that had elapsed since the Strategic Options Paper was approved and the current knowledge of the probable cost and schedule implications of pursuing the OneSKY Program in its entirety. The then acting Airservices Chief Executive Officer (CEO) supported the review’s recommendation, which Airservices was scheduled to complete by end-December 2015. In relation to the ANAO’s request that Airservices advise whether a strategic review of the business case was undertaken following the Gate Review, Airservices commented in December 2016 that:

Following the receipt of the Phase 5C Offer and its subsequent assessment, Airservices has re-engaged Deloitte to update the business case prior to making a decision on the overall OneSKY program. The business case is due to be completed in Quarter 1 2017.

Defence

2.20 A two‐pass approval process forms the backbone of Defence’s capability development and materiel acquisition process. The Department of Finance plays an important role by verifying Defence’s cost estimates for capability development projects.

2.21 First pass approval for the Defence component of the project was obtained in November 2011, based on a business case. Cost estimates for two options were derived from responses to the joint RFI, involving total acquisition costs (including contingency) of $380 million or $492 million.

2.22 Between May and August 2014, in the context of the second pass approval, Defence prepared an acquisition business case, drawing on cost-benefit analysis that was based on the RFT responses. The option presented was a refined version of the $380 million option considered at first pass. The capital cost estimate was now $899.6 million.7

2.23 Second pass approval was provided in December 2014, including an approved Defence acquisition cost of $906 million (including a $255 million Not-To-Exceed price for the Defence portion of the common CMATS elements). The budgeted cost of the Defence component increased significantly between the two approvals. Given the size of the increase, a review was commissioned by Defence, at the request of Ministers. That review identified that:

- the first pass submission did not meet the quality requirements set out in Defence’s Capability Development Manual or the department’s costing quality guidance; and

- a major cause of the increase was a substantial change in the requirement between first and second pass. Specifically, the review concluded that, at first pass, Airservices and Defence had each sought to acquire a system that would meet their respective requirements, with no evidence of the harmonisation or integration costs being budgeted (with each agency responsible for their own estimates and budgets). By second pass, Airservices and Defence had agreed to purchase a common, fully harmonised system with the cost of harmonisation contributing 35 per cent of the increase in estimated cost to Defence. In addition, a further 19 per cent of the increase was attributed to Integrated Support Contractor costs (no such costs had been budgeted at first pass).

Were planned tender timeframes met?

Significant delays occurred with the conduct of the tender process in comparison to planned timeframes and when decision-makers had been told that the existing systems would need to be retired. Of note was that the RFT was released to the market in June 2013, 18 months later than the expected December 2011 release. This was compounded by significant delays during tender evaluation and contract negotiations. Specifically, there was a delay of four months during Phase 3 of the tender evaluation, and as of January 2017, contract negotiations continue to exceed the original timeframe, with negotiations running 18 months over the planned 11 month schedule. In July 2016, Defence advised its Ministers that contract signature was ‘unlikely to occur’ before mid-2017.

2.24 At the conclusion of the RFI process it was expected that the RFT would be issued in December 2011 (refer Figure 2.1 for the planned schedule as at 2010). By September 2011, the release date for the RFT had been delayed to February 2012.

2.25 A Critical Project Review undertaken by Airservices in September 2011 noted that ‘a number of factors are impacting the release of the Joint RFT package and a Schedule Change Request will be prepared to seek approval to move out the release of the Joint RFT package by four months’ (to June 2012). That review noted the following causes of the delay:

- under-estimating the amount of time it takes to complete a given activity;

- compression of the schedule with 50 per cent plus overlap ratios between major activities;

- lack of resources; and

- understanding the review and approval process as a joint team with Defence.

2.26 The Airservices Board was advised at its February 2012 meeting that:

Overall the Program is tracking on target for a mid-2012 RFT release. Airservices’ risks and Joint Program risks continue to be tracked and assessed on a monthly basis. A number of areas are currently receiving particular focus, as the potential for these to impact on the schedule is increasing. These include resourcing and the timeliness of document approvals.

2.27 Subsequently the Board was advised at its April 2012 meeting that, due to a number of risks being realised, the anticipated mid-2012 release of the RFT was ‘unlikely and that the release date would be extended by up to twenty weeks.’ The delay was highlighted by a June 2012 Critical Project Review. The review concluded that ‘the RFT is now more than six months late with a forecast contract signature 16 months beyond the original baseline’ and that ‘a scheduled release of the RFT in February 2013 is extremely optimistic.’ Key factors in this situation were identified as follows:

Management is very focused on the schedule for RFT release to the market, but the team has continually underestimated the complexity and scale of this task; particularly the effort to achieve the necessary alignment between Defence and Airservices Australia. This has been compounded by the late allocation of project resources by both DMO and Airservices and somewhat dysfunctional team structures, both for the notionally integrated ‘Joint Team’ and the Airservices Future Systems team. Lines of authority and accountability are also somewhat confused.

2.28 Similar to advice presented to the Board in April 2012, the Joint Gate Review (conducted in August 2012) stated that the lead agency arrangement had ‘not matured effectively’. The review identified the primary cause of an 18-month slippage in schedule over the prior two years as ‘parallel teams, culture, process and approval environments’ (rather than the intended lead agency approach being effectively adopted). It also came to the conclusion that release of the RFT by the (then) planned date of February 2013 was ‘unrealistic’.

2.29 In October 2012, the Airservices Board was informed that there was a ‘high risk’ that the scheduled release of the RFT in February 2013 would not be achieved. As shown in Figure 2.1, the RFT was released on 28 June 2013.

Figure 2.1: Key time delays against the planned RFT schedule

Source: ANAO analysis of Airservices’ documentation.

Tender evaluation

2.30 The RFT closed on 30 October 2013, with six tenders being received (including from the incumbent providers of both the Airservices and Defence ATM platforms).

2.31 The Tender Evaluation Plan (TEP) included a schedule for undertaking each phase of the evaluation process. The timeframe set out in the TEP envisaged that it would take 18 months from the receipt of tenders to conduct evaluation activities, negotiate and sign contracts.

2.32 The initial phases were completed on time, but significant delays occurred with the Phase 3 evaluation (refer Table 2.2), which took twice as long (eight months) as had been planned (four months). This was then compounded by prolonged Phase 5 negotiations (which, as of January 2017, had not been concluded). In July 2016, Defence advised its Ministers that contract signature was ‘unlikely to occur’ before mid-2017. While contract negotiation remains ongoing, work on the design and engineering phase has commenced, and is being undertaken via a number of Advanced Work Orders (see paragraph 1.20).

Table 2.2: Tender evaluation timeframes scheduled versus actuals

| Phase | Scheduled timeframe | Actual timeframe |
| --- | --- | --- |
| Phase 1: Initial Screening of Tenders | 1–14 November 2013 | Completed 13 November 2013. |
| Phase 2: Initial Capability Assessment | 14 November–15 December 2013 | Completed 17 December 2013. |
| Phase 3: Detailed Evaluation | December 2013–March 2014 (a) | Completed 24 July 2014. |
| Phase 4: Supplementary Evaluation | April 2014–May 2014 | Commenced in June 2014 (a) before Phase 3 was finalised. Completed 21 August 2014. |
| Phase 5: Parallel Negotiations and Scope Refinement | May 2014–March 2015 | Negotiations commenced on 8 September 2014 and were conducted with one tenderer only. Negotiations are ongoing as at January 2017. (b) |
| Contract Execution | April 2015 | Ministers have been advised that this is unlikely to occur before mid-2017. |
| Timeframe totals | 18 months | At least 40 months |

Note a: Phases 3 and 4 were originally planned to occur sequentially. However, the TEP was formally amended in July 2014, so that one evaluation working group could commence Phase 4 evaluation activities prior to approval of the Phase 3 Report by the Tender Evaluation Committee. One of the key benefits driving this change was to optimise the use of resources and maintain the momentum of the evaluation process.

Note b: As part of Phase 5 negotiations, Airservices and Thales engaged in Advanced Work Supply Arrangements. These arrangements provide for Airservices to contribute to Thales’ engineering and development costs for its proposed solution. The Conditions of Tender anticipated that if Airservices agreed to contribute to certain costs, the negotiated final tender price would be reduced by the amount paid by Airservices.

Source: ANAO analysis of Airservices and Defence records.
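The durations quoted in this section can be reproduced from the dates in Table 2.2 by counting calendar months inclusively. This is a minimal sketch: the inclusive counting convention is an assumption (the report does not state how it counts months), and the function and variable names are invented for illustration.

```python
def months_inclusive(start, end):
    """Count calendar months from start to end, both given as (year, month), inclusive."""
    (start_year, start_month), (end_year, end_month) = start, end
    return (end_year - start_year) * 12 + (end_month - start_month) + 1

# Phase 3: scheduled December 2013 to March 2014, completed July 2014.
phase3_planned = months_inclusive((2013, 12), (2014, 3))
phase3_actual = months_inclusive((2013, 12), (2014, 7))

# Phase 5: scheduled May 2014 to March 2015; commenced September 2014
# and still ongoing as at January 2017.
negotiation_planned = months_inclusive((2014, 5), (2015, 3))
negotiation_to_date = months_inclusive((2014, 9), (2017, 1))
negotiation_overrun = negotiation_to_date - negotiation_planned

# Whole schedule: November 2013 screening to April 2015 contract execution.
total_planned = months_inclusive((2013, 11), (2015, 4))

print(phase3_planned, phase3_actual)             # 4 8
print(negotiation_planned, negotiation_overrun)  # 11 18
print(total_planned)                             # 18
```

Under that convention the results match the four-month and eight-month Phase 3 figures, the 11-month negotiation schedule with an 18-month overrun as at January 2017, and the 18-month scheduled total.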

Were appropriate governance arrangements established?

Appropriate evaluation governance arrangements were established. They provided an approach that was capable of identifying the best value for money tender. They also guarded against potential conflicts of interest impacting on the tender evaluation process and outcome. Of note was that evaluation findings and recommendations were built up from detailed evaluation work that reflected input from a large number of Airservices employees and contractors as well as Defence personnel.

Management of probity

2.33 ANAO Report No.1 2016–17 examined the process by which a Probity Plan and Protocols was established for the joint procurement process, along with the engagement of an external Probity Advisor and an external Probity Auditor. In that report, the ANAO set out that the Probity Plan and Protocols, along with the Conditions of Tender, Tender Evaluation Plan and Contract Negotiation Strategy, provided a reasonable basis for managing the probity aspects of the tender process, but:

- Airservices did not commission independent probity audits of any phase of the tender process subsequent to the release of the RFT;

- Airservices’ approach to administering declared conflicts and monitoring ICCPM subcontractors’ compliance with the Probity Plan and Protocols was inconsistent and largely passive. This was reflected in a number of missed opportunities to avoid or effectively manage potential conflict of interest concerns associated with engaging key subcontractors via ICCPM; and

- ICCPM sub-contractors with links to tenderers (including through past employment and as a result of the membership of the ICCPM board) became involved in the evaluation of competing tenders, and subsequently undertook contract negotiations with the successful tenderer, but Airservices did not identify or actively manage the attendant probity risks.8

2.34 Those shortcomings increased probity risks to the conduct of tender evaluation activities and, in this audit, the ANAO examined any impact those shortcomings had on the tender evaluation process.

Evaluation governance framework

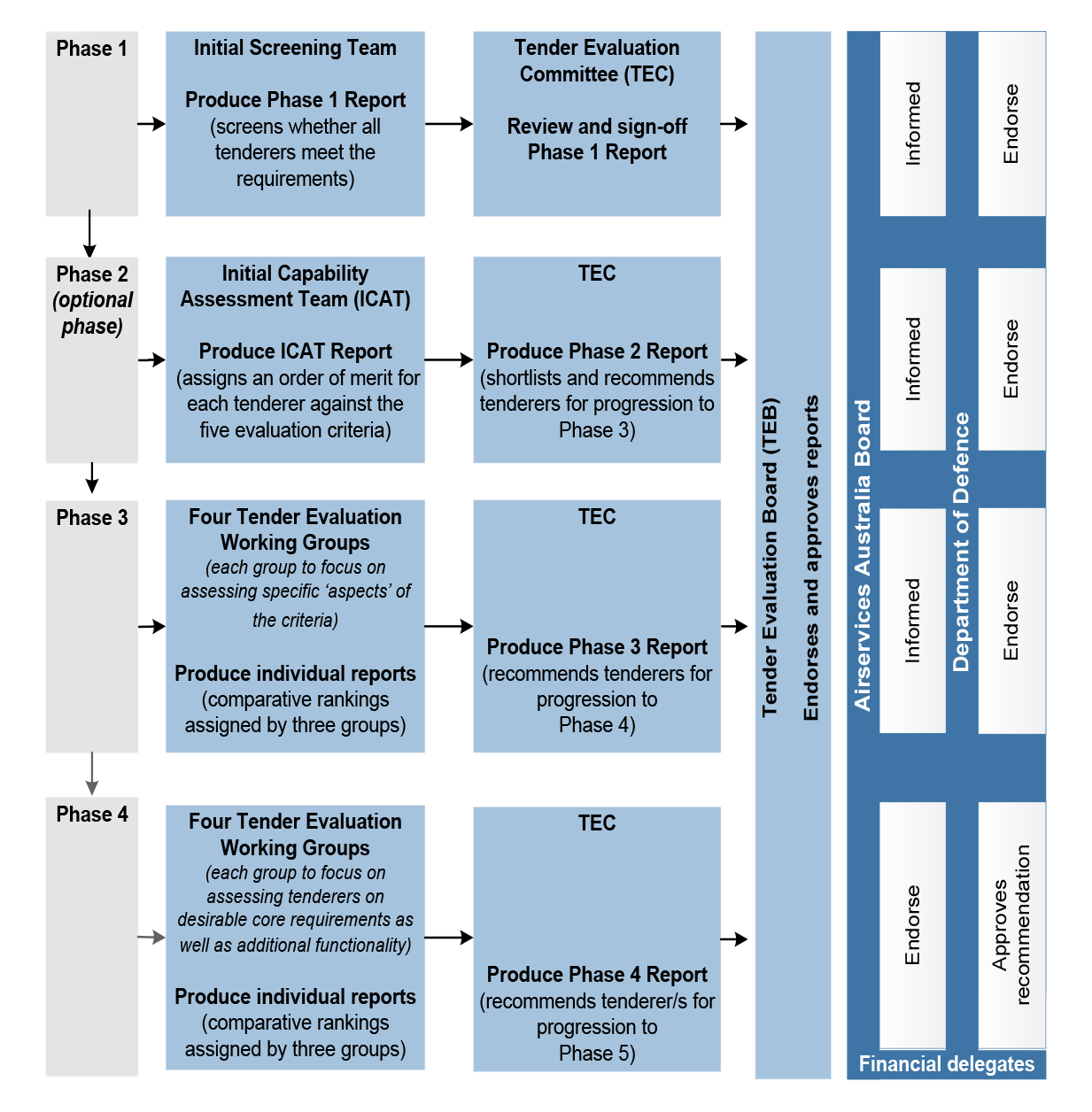

2.35 The TEP set out the process of managing and evaluating tenders in order to provide procurement recommendations to the respective decision-making authorities within Airservices and Defence. The TEP also set out the structure of the Tender Evaluation Organisation (TEO) formed in November 2013, comprising the:

- Tender Evaluation Board (TEB), chaired by the Airservices CEO. It was responsible for making a recommendation as to the preferred tenderer(s) proceeding to Phase 5 contract negotiation;

- Tender Evaluation Committee (TEC), chaired by the Executive General Manager Future Service Delivery within Airservices. Its responsibilities included providing guidance and direction to the four Tender Evaluation Working Groups, providing progress updates to the TEB and preparing reports which make recommendations to the TEB at the conclusion of each evaluation phase; and

- four Tender Evaluation Working Groups, which were responsible for evaluating tenders against the five evaluation criteria during Phase 3 of the evaluation, ‘Detailed Evaluation’ (refer paragraph 3.12 for more information on the Phase 3 evaluation). The four tender evaluation working groups were:

  - Technical Operations and Safety;

  - Vendor Implementation Capability and Capacity;

  - Vendor Long Term Capability and Capacity; and

  - Financial Considerations and Commercial Viability.9

2.36 Each evaluation working group was chaired by an Airservices employee, with Airservices providing two of the deputy chairs and Defence the other two. Membership of each evaluation working group also included a number of Airservices and Defence subject matter experts. Each evaluation working group had between two and five sub-evaluation working groups that were responsible for assessing shortlisted tenders against individual criteria elements. Sub-evaluation working groups drew on specialist advisors/subject matter experts. In total, there were more than 100 individuals involved in the work conducted by the evaluation working groups.

2.37 The membership of each of the other entities that comprised the TEO also included representatives from Airservices and Defence. The TEO also included: a number of teams involved in the initial receipt and screening of tender responses; Specialist Advisors; the Legal Advisor; the Procurement Governance Advisor; and the Probity Advisor.

2.38 The TEP stipulated the individuals who were members of each board, committee or working group and the individuals that would perform the nominated advisor roles. It also set out a number of protocols directed at preserving the integrity of the evaluation process. This included requirements for regulating how information would be shared within the TEO, and prohibiting the unauthorised sharing of such information with individuals who were not part of the TEO.

2.39 In addition to the TEP, a Tender Evaluation Work Instruction was prepared. It was to be read in conjunction with the TEP, and provided detailed instructions for the undertaking of the evaluation activities for Phases 2 through to 4.

2.40 Figure 2.2 illustrates the tender evaluation process (excluding Phase 5, which is the ongoing contract negotiation stage10), the corresponding outcomes and responsible decision-making authorities.

Figure 2.2: Tender evaluation: Phases 1 to 4

Source: ANAO analysis of Airservices’ TEP.

2.41 The tender evaluation governance arrangements outlined above were appropriate and provided an approach that was capable of identifying the best value for money tender. They also guarded against potential conflicts of interest impacting on the tender evaluation process and outcome by:

- providing for a four stage review process, including a number of opportunities for dissenting views to be raised and recorded;

- requiring input from a large number of Airservices and Defence personnel (and contractors); and

- providing for evaluation findings and recommendations that were built up from detailed evaluation work (refer Chapter 3).

3. Tender outcomes

Areas examined

The ANAO examined whether the tender process resulted in the transparent selection of a successful tender that provided the best whole-of-life value for money solution at an acceptable level of cost, technical and schedule risk, consistent with the RFT.

Conclusion

Tender evaluation proceeded through the planned phases, with competitive pressure maintained until late in the process. The records of the evaluation process evidence that the successful tender was assessed to be better than the other remaining candidates from a technical and schedule risk perspective. It is not clearly evident that the successful tender offered the best value for money. This is because adjustments made to tendered prices when evaluating tenders against the cost criterion were not conducted in a robust and transparent manner. Those adjustments meant that the tenderer that submitted the highest acquisition and support prices was assessed to offer the lowest cost solution. It is also not clearly evident that the successful tender is affordable in the context of the funding available to Airservices and Defence.

Did the tender evaluation process proceed as planned?

The evaluation processes for the progression, shortlisting and exclusion of tenders proceeded as envisaged, with competitive pressure maintained until the conclusion of the Phase 4 tender evaluation. Decisions were then taken to set aside, and later exclude, the second-ranked tenderer from further consideration rather than enter into parallel negotiations with two tenderers. The reasons for this decision were adequately recorded.

Phase 1

3.1 The initial screening (Phase 1 of the tender evaluation process) concluded that all tenderers had met the requirements for progressing to the next phase of the evaluation. Accordingly, no tenders were excluded at that stage.

Phase 2

3.2 Phase 2 (an optional assessment of tenderers’ capabilities) was included to manage the resource and schedule risks of conducting a full evaluation of all tenders submitted in the event that a large number of responses were received. Its purpose was to shortlist between three and five tenders. The phase occurred at the recommendation of the Tender Evaluation Committee (TEC) and approval by the Tender Evaluation Board (TEB).

3.3 The Initial Capability Assessment Team (ICAT) assessed each tender against the TEP’s five Phase 2 evaluation criteria, which involved assessing only the information contained in Volume 9 (Initial Capability Assessment) of the tender responses. Reflecting the different purposes of Phase 2 compared with Phases 3 and 4, the five criteria used in Phases 3 and 4 differed from those used in Phase 2 (refer Appendix 2). The criteria applied at each stage were appropriate.

3.4 The Phase 2 report prepared by the ICAT outlined its assessment of the six tender responses. This included establishing an order of merit for each tender response across the five criteria.

3.5 The TEC prepared its own report. That report consolidated the detail from the ICAT’s analysis and established an overarching order of merit for each tenderer against the Phase 2 criteria. The TEC concluded that, while:

… an order of merit has been provided for the three highest ranked tenderers (Thales, Lockheed Martin and Raytheon), there is minimal separation between these three tenderers when considered from a capability to cost relationship perspective.

3.6 The tenderer (Thales) that was ranked highest against three of the five criteria applied in Phase 2 was the same tenderer that was ranked fifth (of six) against the cost criterion. This reflected that its acquisition bid price was the second highest and well above the other two highest ranked tenders at Phase 2 (refer further at paragraphs 3.40–3.43).

3.7 The TEC recommended in its Phase 2 report that the four highest ranked tenders be shortlisted and that the two lowest ranked tenders be set aside. However, the TEB decided to exclude those two tenders rather than setting them aside.11

Phase 3

Consideration of alternative tenders

3.8 The Conditions of Tender (COT) provided that Phase 3 (Detailed Evaluation) may include the evaluation of any alternative tenders12, and that this evaluation was to apply the published evaluation criteria. A report on the evaluation of the three alternative tenders was prepared in late January 2014, but there was a delay in it being endorsed by the TEB.13 The report did not address each of the five published evaluation criteria. Rather, it recommended that each alternative tender be set-aside ‘based on the lack of information provided and the subsequent ability of the TEO to appropriately assess the alternative solution against the evaluation criteria outlined in the COT.’

Exclusion of a tender during Phase 3

3.9 On 3 April 2014, three months into the Phase 3 evaluation, the fourth-ranked tender at Phase 2 was excluded by the TEB from further consideration. This decision by the TEB reflected advice and recommendations from the TEC which had prepared an ‘Update Report’ that concluded that the tender presented a ‘range of deficiencies of such a magnitude that Airservices would not be able to implement their offer’ and therefore recommended the fourth-ranked tender be excluded as it was ‘clearly non-competitive’ when compared against the other three tenders. An earlier TEC discussion paper, highlighting to the TEB the prospect of an early exclusion, noted the time consuming nature of this tenderer’s response and that a saving of up to six weeks would be achieved.

3.10 This left three tenders for detailed evaluation in Phase 3.

Phase 3 process

3.11 Detailed tender evaluation during Phase 3 was undertaken by the four evaluation working groups.

3.12 Five criteria were used to evaluate tenders in Phase 3. The four different evaluation working groups assessed against assigned ‘aspects’ (effectively sub-criteria) of the evaluation criteria. There were a total of 22 aspects, with as many as 10 aspects, and as few as two aspects, related to any one criterion (refer Appendix 2).

3.13 In one instance, evaluation of an aspect was allocated in the TEP to two evaluation working groups. This was the ninth aspect of the second criterion, which related to each tenderer’s approach to dispute resolution during the life of the contracts. The TEP identified both the Finance evaluation working group and the Long Term Capability evaluation working group as being responsible for assessing this aspect. The probity sign-off for the TEP identified the risk associated with this approach: the TEC potentially receiving two separate rankings from the relevant evaluation working groups for this aspect. The probity advice highlighted that this was an issue that Airservices would need to manage during the evaluation process, particularly as the TEC was required to consider the evaluation criteria as a whole.

3.14 The risk identified was realised. The two evaluation working groups reached different conclusions for each shortlisted tenderer’s performance against this aspect of the second criterion.

3.15 Each evaluation working group produced an evaluation report at the conclusion of Phase 3. Each evaluation working group also produced a report at the conclusion of Phase 4. The reports were signed-off by the Chair, Deputy Chair and each member of the evaluation working group.

3.16 To provide the TEC with assurance that evaluation working group processes, work practices and activities were applied fairly to all tenders and consistent with the TEP and Tender Evaluation Work Instruction, the Probity Advisor conducted a review of Phase 3 evaluation working group processes. That review identified:

- one non-compliance with the TEP (which related to the format of sub-function reports to be attached to one evaluation working group’s report not including an overall compliance level against a sub-function);

- a number of risks to compliance with the TEP; and

- two non-compliances with the Work Instruction (which related to the extent to which all members of two evaluation working groups had reviewed and agreed on the aggregate report for each evaluation task, and had been involved in agreeing the report for the sub-evaluation criteria aspect).

3.17 A key shortcoming of the assurance review conducted by the Probity Advisor was that it excluded the evaluation of pricing. The Probity Plan and Protocols stated that the ‘Probity Advisor will provide sign-off at key stages throughout the Program lifecycle including sign off on: […] any process or plans for assessing industry responses.’ However, the Probity Plan and Protocols stipulated that ‘all requests for sign off will be issued by Airservices’. In response to the ANAO’s request for probity sign-off of the TEC’s risk-cost adjustment, Airservices advised in December 2016 that the Probity Advisor’s input ‘was not specifically sought’. As a result, the Probity Advisor’s Phase 3 sign-offs did not identify, or comment upon, the TEC’s adjustment of tenderer prices to reflect its assessment of risk and contingency (refer paragraphs 3.51–3.59).

3.18 The TEC was chaired by Airservices, with Airservices also providing the Deputy Chair. Six of the remaining members were also provided by Airservices (including the individuals contracted through ICCPM to perform the roles of Lead Negotiator and Deputy Lead Negotiator14) with two members provided by Defence.

3.19 Minutes were kept of TEC meetings, including of the risk-pricing meetings undertaken by the TEC (refer paragraph 3.57). The TEC also produced reports setting out the results of its work, including the basis for its recommendations to the TEB. Those reports were signed off, without dissent, by each TEC member.

Phase 3 results

3.20 At the conclusion of Phase 3, none of the tendered solutions was assessed to satisfy all 2609 of the Joint Function and Performance Specification requirements. Each of the three tenders was considered to have unacceptable non-compliances by the relevant evaluation working group.

3.21 The Technical evaluation working group report assessed the Thales tender to be ‘clearly superior’ in its compliance. Thales was assessed as having a lower number and percentage of non-compliances than Lockheed Martin Australia (LMA) and Raytheon against one category, ‘Unacceptable Non-Compliance (Very Important Requirements)’. Thales was assessed as having 4 of 83 (or 5 per cent) instances of non-compliance against this category, compared to LMA’s 12 of 75 (or 16 per cent) and Raytheon’s 17 of 54 (or 31 per cent). In December 2016, Airservices advised the ANAO that:

The 2609 requirements were not all of the same nature in terms of their impact on the overall solution nor level of importance. Tenderers were alerted that these requirements were not equally important (even where they had the same designation). As noted by the TOS TEWG [Technical evaluation working group], some requirements overlapped or were essentially the same requirement stated in different ways (ie, a tenderer could receive multiple non-compliances for the same issue).

In other words, while the TOS TEWG noted the number of unacceptable non-compliances, its focus was properly on the significance rather than absolute number or percentage of non-compliances.
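The percentages quoted in paragraph 3.21 follow directly from the reported counts. The sketch below recomputes them, rounding to the nearest whole per cent. The dictionary layout and names are illustrative assumptions; only the counts come from the report.

```python
# (unacceptable non-compliances, requirements counted in this category) per tenderer,
# as reported in paragraph 3.21.
non_compliance_counts = {
    "Thales": (4, 83),
    "LMA": (12, 75),
    "Raytheon": (17, 54),
}

# Percentage of non-compliance, rounded to the nearest whole per cent.
non_compliance_rates = {
    tenderer: round(100 * failed / total)
    for tenderer, (failed, total) in non_compliance_counts.items()
}

print(non_compliance_rates)  # {'Thales': 5, 'LMA': 16, 'Raytheon': 31}
```

As Airservices’ advice notes, these rates treat every requirement as equally important, which is why the working group focused on the significance of each non-compliance rather than the raw percentage.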

3.22 None of the tenders was assessed to meet operational needs, but each was considered to have the potential to be further developed to achieve an acceptable baseline solution. However, the amount of effort (in terms of cost and schedule) to bring the Thales solution to an ‘acceptable baseline’ was assessed to be ‘minimal’ compared with ‘very significant’ for the other two tenders. Negotiations commenced in early 2015 and are not expected to be concluded until at least mid-2017.

3.23 At the conclusion of Phase 3, the TEC identified that Thales was significantly ahead of LMA. Thales was ranked first for four of the five criteria (the exception being the second criterion) and assessed as offering ‘the best value for money’ solution at an ‘acceptable level of risk’. Thales’ solution was also considered (subject to effective Phase 5 negotiations) to be executable in a manner that supported Initial Operational Capability (IOC) with tolerable risk. Conversely, LMA’s solution was evaluated as containing a number of ‘serious deficiencies’, with the risks to achieving IOC assessed as ‘very high’ and requiring a ‘great deal of time and effort during Phase 5 negotiations’.

3.24 The TEC:

- concluded that the Thales tender represented best value for money at an acceptable level of risk; and

- made a recommendation that the tenders from LMA and Thales proceed to Phase 4 evaluation.

Phases 4 and 5

3.25 The evaluation processes for the progression, shortlisting and exclusion of tenders proceeded as envisaged until the conclusion of Phase 4 of the tender evaluation.

3.26 At the conclusion of Phase 3, two tenders progressed to Phase 4 for supplementary evaluation. The Phase 4 report recommended that only one tenderer, Thales, proceed to the final contract negotiation phase and for LMA to be ‘set aside’.

3.27 The Contract Negotiation Strategy approved in September 2014 identified five stages of the Phase 5 negotiations, with the purpose of Phase 5A being to clarify, improve and refine Thales’ tendered offer, including through the submission of a Phase 5A offer. The purpose of Phase 5B was to achieve mutual disclosure and constructive testing, jointly by Thales, Airservices and Defence as to constraints, opportunities, assumptions, risks and dependencies as a basis for the critical negotiation of key scope and terms to be conducted during Phase 5C.

3.28 On 12 January 2015, the Lead Negotiator sought CMATS Review Board (CRB) agreement to the exclusion of the second tenderer from further consideration. The CRB agreed that, as a result of the work undertaken in parts 5A and 5B of Phase 5, the:

LMA offer is now considered to be clearly non-competitive … and does not offer a viable basis for negotiation in competition with the value proposition being advanced by TA [Thales].

3.29 LMA was advised of its exclusion on 30 January 2015.

Were the methodologies for ranking tenders appropriate?

An inadequate approach to summarising the evaluation of tenders was employed. Specifically, the approach of ranking each tender against the criteria did not provide a suitable means of identifying the extent to which one tenderer had been assessed as better, or worse, than other respondents, or how well each tenderer had been assessed as meeting the relevant criterion aspect.

Ranking, rather than scoring, tenderer responses against the criteria

3.30 It is important that the evaluation process provides for tenders to be rated consistently against the published criteria and for the results to effectively and consistently differentiate between competing tenders of varying merit.

3.31 The ranking approach adopted by three of the evaluation working groups did not provide a suitable means for comparing the merits of the shortlisted tenders. Recording rankings against each criterion does not provide a means of identifying the extent to which one tenderer had been assessed as better or worse than other respondents, or how well each tenderer had been assessed as meeting the relevant criterion aspect.

3.32 For example, the evaluation working group’s assessment of the three shortlisted tenders against the first aspect of criterion 2 concluded that:

All three Vendors returned strong responses for this Criteria Aspect reflecting respectively their extensive experience and maturity in this field. Ultimately the final order of merit was determined by the additional value of experience that Thales and Raytheon both bring with their past and current experience with Airservices and Defence and related personnel and processes.

3.33 As a result, LMA was ranked third against this aspect (the other two tenderers were ranked equal first).

3.34 In contrast, there were other instances where a ‘1’ ranking did not reflect that a tenderer had been assessed as meeting the aspect to a high degree. For example, the three vendors were assessed against the fourth aspect of criterion 2 as ranging from ‘poor and average for many components’. Rankings of 1, 2 and 3 were awarded.
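The limitation described in paragraphs 3.31 to 3.34 can be illustrated with a minimal sketch. The scores below are hypothetical (they are not taken from the tender evaluation, which did not score tenders), and the tender names `A`, `B` and `C` and the `rank` helper are assumptions made for illustration only. The sketch shows that a uniformly strong field and a uniformly weak field can produce identical rankings, so the rankings alone convey neither how well a criterion aspect was met nor how far apart the tenders were.

```python
# Hypothetical scores out of 10; these are illustrative assumptions,
# not figures from the tender evaluation.
def rank(scores):
    """Return a ranking (1 = best); tenders with equal scores share a rank."""
    return {
        name: 1 + sum(other > score for other in scores.values())
        for name, score in scores.items()
    }

# A field where every tender responds strongly and the gap is small.
strong_field = {"A": 9.0, "B": 9.0, "C": 8.5}
# A field where every tender responds poorly and the gap is large.
weak_field = {"A": 4.0, "B": 4.0, "C": 1.0}

print(rank(strong_field))
print(rank(weak_field))
```

Both calls return the same ranking (two tenders equal first, one third), even though the underlying assessments differ markedly in both absolute quality and spread.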

Developing the order of merit

3.35 As part of Phases 2, 3 and 4, the TEC (as required by the TEP) undertook a group assessment, and on a consensus basis established an overarching order of merit of the tenders based on the order of merit assessment against each criterion.

3.36 The final approved version of the TEP specified the approach the evaluation working groups were to take to record the results of the Phase 3 evaluation work. Three of the four evaluation working groups were required to establish a comparative order of merit against each evaluation criteria aspect allocated to them. This covered 18 of the 24 criteria aspects.

3.37 The TEP required that the Finance evaluation working group not prepare a comparative order of merit of each tenderer against the fourth and fifth evaluation criteria (involving six criteria aspects). The fourth criterion related to tendered costs, and the fifth criterion to commercial risks from entering into contracts with the tenderer.

3.38 Initially, like the other three evaluation working groups, the Finance evaluation working group had been required to provide a comparative ranking. The requirement on the Finance evaluation working group to provide a comparative ranking was removed on 29 August 2013.

3.39 The final approved version of the TEP did not set out the rationale for each tenderer not being ranked against each evaluation criteria and criteria aspect (for the TEWG, for the cost criterion). In December 2016, Airservices advised the ANAO that:

The difference in approach reflected the different criteria that were allocated to each TEWG [evaluation working group] and the skill sets of those TEWGs. While the FCCV TEWG [Finance evaluation working group] was allocated two evaluation criteria, the TEP provided for the TEWG Chair to split those criteria across the financial and commercial sub-teams. It is apparent from the TEWG Report that:

(a) the financial sub-team was comprised largely of accounting experts. It looked at all of evaluation criteria 4 except aspect 1(c). It also looked at aspect 2(a) of evaluation criteria 5 (as this related to financial viability).

(b) the commercial sub-team was largely comprised of legal experts. It looked at aspect 1(c) of evaluation criteria 4 and the remaining aspects of evaluation criteria 5. It also looked at compliance by the tenderers with the proposed contractual provisions relating to dispute resolution.

Given that each sub-team was not looking at a whole evaluation criteria and in some cases evaluation criteria aspects were split, it was not appropriate for each sub-team to produce a ranking. Accordingly, the choice was either for the two sub-teams to together come up with a ranking or for the TEC to undertake the rankings in relation to evaluation criteria 4 and 5. The TEC undertook the rankings because it had overall responsibility for determining the ranking for evaluation criteria 4 and 5, and it had access to information that individual TEWGs did not have.

Were tendered prices evaluated in a robust and transparent manner?

The evaluation of tendered prices against the cost criterion was not conducted in a robust and transparent manner. There was not a clear line of sight across the phases of the evaluation and the work of the Tender Evaluation Working Groups and the Tender Evaluation Committee (TEC) in relation to the adjustments made by the TEC to tendered prices for evaluation purposes. Of particular significance was the lack of adequate records explaining the TEC adjustments. Those adjustments suggested that the successful tenderer offered the lowest cost solution when the acquisition and support prices it submitted were actually considerably higher than those of the other tenderers.

Analysis of pricing

3.40 There were significant variances in the acquisition and support prices submitted by the six tenderers.

3.41 The acquisition bid prices ranged from $326 million to $644 million, with a median of $483 million. The major elements of the tendered acquisition costs related to system engineering, software engineering, provision of software licences and provision of hardware.

3.42 The prices bid for the annual support cost ranged from $12 million to $35 million with a median of $23 million.

3.43 The acquisition and support prices tendered by Thales were significantly higher than those of the other two tenderers that proceeded through Phases 3 and 4 of evaluation. Specifically, the Finance evaluation working group’s report outlined that Thales’ acquisition price was 108 per cent higher than the lowest price of the three shortlisted tenders, and 47 per cent higher than the price of the other shortlisted tenderer. Similarly, the working group’s report outlined that Thales’ support price was 114 per cent and seven per cent higher than the prices submitted by the other two shortlisted tenderers.
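As a worked illustration of the acquisition price percentages in paragraph 3.43, the following sketch expresses the three shortlisted acquisition prices as an index, with the lowest price set to 100. The index values are an assumption for illustration (actual tendered dollar amounts for individual tenderers are not given here), and `pct_higher` is a hypothetical helper. Only the 108 per cent and 47 per cent figures come from the paragraph above.

```python
def pct_higher(price, baseline):
    """Percentage by which price exceeds baseline."""
    return (price / baseline - 1) * 100

# Index the lowest shortlisted acquisition price at 100 (an assumption
# for illustration; not a dollar figure).
lowest = 100.0
thales = lowest * 2.08   # 108 per cent higher than the lowest price
other = thales / 1.47    # Thales is 47 per cent higher than this tender

print(round(pct_higher(thales, lowest)))
print(round(pct_higher(thales, other)))
print(round(pct_higher(other, lowest)))
```

On these figures, the remaining shortlisted tender sits roughly 41 per cent above the lowest acquisition price, which gives a sense of the spread across all three tenders.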

Phase 2 evaluation

3.44 The Phase 2 evaluation of bid prices by the Initial Capability Assessment Team (ICAT) took into account the tendered prices, and highlighted six factors that should be considered as a result of significant variation in bid prices across all tenders.

3.45 As it was required to do for each of the five criteria, the ICAT arrived at an order of merit for the cost criterion.

3.46 There were two signed versions of the ICAT’s Phase 2 report. The first (version 1.0) was signed by each member of the ICAT on 6 December 2013. The second (version 1.1) was also signed by each member of the ICAT, on 11 December 2013. Airservices advised the ANAO in December 2016 that the second version resulted from the TEC identifying, at its meeting of 10 December 2013, that the content of the ICAT report did not adequately reflect the findings of the ICAT as presented to the TEC at that meeting. The TEC agreed that the ICAT report would:

… be revised based on clarifications discussed at the meeting. It was noted the proposed revisions were to provide clarity to the information contained in the report and would not change the assessments provided in the report.

3.47 Airservices further advised the ANAO that the TEC’s main issue and request for clarification centred on the order of merit provided for criterion 5, which was based on the ICAT’s assessed confidence on the validity of tendered costs. According to Airservices the:

- TEC specifically raised concerns regarding the ICAT’s ability to adequately assess the risks in relation to tendered costs based solely on access to only one volume of information (Volume 9);

- ICAT agreed that they were unable to support the level of confidence initially reported, with sufficient evidence; and

- ICAT subsequently revised its criterion 5 ranking so that it was based solely on tendered costs.

3.48 Contrary to what the TEC agreed, the revised ICAT report changed the order of merit for two tenderers for criterion 5. Raytheon was assigned a ranking of one rather than three, and LMA a ranking of three rather than one. The ICAT’s supporting analysis for criterion 5, however, remained unchanged between the two versions of the report.

Phase 3 evaluation

3.49 The Finance evaluation working group, as required by the TEP and foreshadowed in the COT, considered the proposed payment schedule and financial risks in evaluating the cost of ownership of each of the shortlisted tenderers.

3.50 The evaluation working group’s evaluation report included its calculation of risk-adjusted acquisition and support costs, and a total risk-adjusted price over the 17-year life of type for the new system. This analysis increased the acquisition price of the LMA (six per cent) and Raytheon (26 per cent) tenders by more than the Thales tender (one per cent), reflecting the assessment that there were limited financial risks identified for the Thales tender, as well as a number of opportunities for potential cost savings. Nevertheless, the evaluation working group’s analysis was that the risk-adjusted acquisition, support and whole-of-life cost of the Thales tender remained 33 per cent higher than the lowest cost option, and 20 per cent higher than the other tender still under consideration.

3.51 In addition to the Finance evaluation working group’s analysis, the TEC undertook its own risk-adjustment process. The TEP did not set out a planned methodology for how this analysis would be undertaken. The ANAO also could not identify that it had been clearly flagged to tenderers in the COT, or set out in the TEP, that such analysis would be undertaken. Further, the risk-adjustment activity undertaken by the TEC was not subject to probity scrutiny (refer paragraph 3.17).

3.52 In terms of the methodology, in December 2016 Airservices advised the ANAO that there was ‘limited’ evidence that the TEC members agreed to the method of the cost-risk adjustment activities. Specifically, Airservices advised the ANAO that an email by a member of the TEC, sent on 15 April 2014, outlined a methodology for the proposed integration of the four evaluation working groups’ evaluations and for the TEC to conduct a cost risk adjustment activity. Airservices further advised that this email was:

… sent to all members of the TEC and a number of additional SME’s [Subject Matter Experts]. Following on from this email a meeting was established by the aforementioned TEC member to all recipients of the email entitled “CMATS Planning” to be held on the 16th April 2014. An item on the agenda for this meeting was to ‘Discuss approach to Risk Adjustment of the three remaining bids’. No formal minutes of this meeting were taken but the outcome was the agreement by the members of the TEC to the methodology and approach to the cost and risk adjustment methodology for the purposes of the evaluation.

3.53 The TEC’s Phase 3 report stated that its price adjustment process involved:

Systemic consideration of each significant risk identified by the TEWGs [evaluation working groups] and the TEC, with the assistance of SMEs [Subject Matter Experts], estimation of the magnitude of the baseline cost and schedule risk and the scale of any additional contingency necessary to provide high confidence in its treatment.

3.54 The TEC’s Phase 3 report presented the results of this analysis as bearing upon how it arrived at an order of merit for criterion 4. Specifically, the TEC’s Phase 3 report stated:

In considering the order of merit for Total Cost of Ownership, the TEC considered baseline pricing, financial and schedule risk profiles, potential internal Airservices and Defence costs and the TEC considered view as to the overall competence of the offering. The TEC agreed that Lockheed Martin and Raytheon should both be ranked 3 for this evaluation criterion to accurately reflect the significant financial risks within the respective tenders.