Evaluating Aboriginal and Torres Strait Islander Programs

The objective of this audit was to examine the effectiveness of the design and implementation of the Department of the Prime Minister and Cabinet’s (PM&C’s) evaluation framework for the Indigenous Advancement Strategy (IAS), in achieving its purpose to ensure that evaluation is high quality, ethical, inclusive and focused on improving outcomes for Indigenous Australians.

Summary and recommendations

Background

1. Program evaluation (commonly referred to as ‘evaluation’) can be defined as the systematic and unbiased assessment of the efficiency, effectiveness or appropriateness of government policies or programs (or parts of policies or programs). Under the enhanced Commonwealth performance framework, performance monitoring and comprehensive evaluations are identified as key mechanisms that entities can use for reporting on their performance through their annual performance statements.1

2. The Department of the Prime Minister and Cabinet (PM&C or the department) has been the lead agency for Aboriginal and Torres Strait Islander Affairs since 2013. With the introduction of the Indigenous Advancement Strategy (IAS) in 2014, 27 programs were consolidated into five broad programs under a single outcome, with $4.8 billion initially committed over four years from 2014–15. The Australian National Audit Office’s (ANAO’s) performance audit of the IAS (Auditor-General Report No.35 2016–17) noted that the department did not have a formal evaluation strategy or evaluation funding for the IAS for its first two years.2

3. In February 2017 the Minister for Indigenous Affairs announced funding of $40 million over four years from 2017–18 to strengthen IAS evaluation, which would be underpinned by a formal evidence and evaluation framework.3 In February 2018 the department released an IAS evaluation framework document, describing high level principles for how evaluations of IAS programs should be conducted, and outlining future capacity-building activities and broad governance arrangements.4

Rationale for undertaking the audit

4. There is strong interest from Parliament and the community in ensuring funding provided through government programs achieves intended outcomes. Effective performance measurement and evaluation is critical to determining whether these outcomes are being achieved.

5. The audit was undertaken to provide assurance that the design and early implementation of the department’s evaluation framework for the IAS has been effective. It also provided an opportunity to assess the early impacts of the framework on evaluation practices and inform its ongoing management.

Audit objective and criteria

6. The objective of the audit was to examine the effectiveness of the design and implementation of the department’s evaluation framework for the IAS in achieving its purpose to ensure that evaluation is high quality, ethical, inclusive and focused on improving outcomes for Aboriginal and Torres Strait Islander peoples.

7. To form a conclusion against this objective, the ANAO adopted the following high level criteria:

- Has the department’s evaluation framework been designed to support the achievement of the Government’s policy objectives? (Chapter 2)

- Is the department’s evaluation framework being effectively implemented and managed? (Chapter 3)

- Are evaluations being conducted in accordance with the department’s evaluation framework to improve outcomes for Aboriginal and Torres Strait Islander peoples? (Chapter 4)

Conclusion

8. Five years after the introduction of the IAS, the department is in the early stages of implementing an evaluation framework that has the potential to establish a sound foundation for ensuring that evaluation is high quality, ethical, inclusive and focused on improving the outcomes for Aboriginal and Torres Strait Islander peoples.

9. Following substantial delays in establishing an evaluation framework, the department is now designing a framework that has the potential to support the achievement of the Government’s policy objectives by strengthening evaluations under the IAS. The design of the framework has been informed by recognised principles of program evaluation, relevant literature, previous evaluation activity and stakeholder feedback. The framework could more clearly link evaluation to the Government’s objectives for the IAS and other relevant strategic frameworks such as Closing the Gap.

10. The department’s implementation and management of the IAS evaluation framework is partially effective. Management oversight arrangements are developing, and evaluation advice provided to program area staff has been relevant and high quality. The department has not developed a reliable methodology for measuring outcomes of the framework and its evaluation procedures are still being developed.

11. As the department is still developing procedures to support the application of the IAS evaluation framework, it is too early to assess whether evaluations are being conducted in accordance with the framework. The department’s approach to prioritising evaluations should be formalised by developing structured criteria for assessing significance, contribution and risk. The department has taken recent steps to: mandate early evaluation planning; publish completed evaluations; and ensure findings are acted upon.

Supporting findings

Design of the Framework

12. From its initial policy commitment to develop an evaluation framework for the IAS by June 2014, the department set several deadlines for finalising the framework that were not met. In 2017 the department established a dedicated evaluation program under the IAS and committed to establishing a new evaluation framework. By July 2018 most elements of the IAS evaluation framework were in place.

13. The development of the framework was informed by relevant literature, including other entities’ evaluation strategies and recognised program evaluation principles, and the limited evaluation activity previously undertaken in Aboriginal and Torres Strait Islander programs. The department undertook consultation on the framework and incorporated stakeholder feedback into its design.

14. The extent to which the IAS evaluation framework aligns with relevant strategic frameworks is mixed. The framework document includes references to the enhanced Commonwealth performance framework and a future whole-of-government evaluation strategy for policies and programs that affect Aboriginal and Torres Strait Islander peoples. However, it provides limited detail of how evaluations under the framework will assess contribution to Closing the Gap or whether the Government’s policy objectives for the IAS are being achieved.

Implementation and Management of the Framework

15. The department has established an implementation process for the IAS evaluation framework, which could be improved through more regular reviews of its project activity schedule. Implementation has included a range of activities designed to improve evaluation quality and build evaluation capability.

16. While its performance criterion is relevant, the department has not developed a reliable methodology for measuring the longer-term outcomes of the framework. The department’s performance targets for the IAS evaluation framework focus on the delivery of short-term outputs.

17. The department is developing procedures and tools for evaluation activities, but they are not yet fully accessible or comprehensive. It has facilitated discrete evaluation training activities, and the evaluation advice provided to program area staff has been relevant and high quality.

18. The department’s management oversight arrangements for the IAS evaluation framework are maturing. A mechanism for monitoring evaluation findings and management responses is being developed.

19. There are early indications that the implementation of the IAS evaluation framework may be developing a culture of evaluative thinking within the department’s Indigenous Affairs Group (IAG). Developing a plan for how framework implementation activities will lead to the desired changes in maturity would assist the department in achieving its future maturity levels.

Application of the Framework

20. The department has implemented a process for prioritising evaluations under the IAS evaluation framework through development of its annual evaluation work plan. Formalising this process by developing structured criteria for assessing significance, contribution and risk and conducting a strategic analysis of gaps in evaluation coverage would aid the department in employing a consistent and robust approach to prioritising evaluations.

21. From October 2018 the department mandated integrating evaluation strategies into the design of all new or refreshed policies and programs and developing evaluation strategies for existing programs prior to conducting evaluations. Prior to this, evaluations were not consistently planned at an early stage of program development.

22. The department has some processes in place to support the design of respectful, independent and ethical evaluations of Aboriginal and Torres Strait Islander programs. The development of additional guidance and business processes would help to clarify requirements and embed these principles.

23. Although the department has committed to increasing its focus on impact evaluation, it is too early to assess whether evaluations under the new IAS evaluation framework will be impact-focused and evidence-based.

24. The department is working to increase compliance with the IAS evaluation framework requirements to publicly release evaluation reports or summaries and develop management responses. Evaluation findings have been used to support decision-making and improvements in service delivery in four IAS programs.

Recommendations

Recommendation no.1

Paragraph 3.12

The Department of the Prime Minister and Cabinet ensure its performance information for Program 2.6 is supported by a reliable methodology for measuring the longer-term outcomes of better evidence from the evaluation framework.

Department of the Prime Minister and Cabinet response: Agreed.

Recommendation no.2

Paragraph 3.21

The Department of the Prime Minister and Cabinet develop a comprehensive and easy to navigate set of procedures to support the implementation of the IAS evaluation framework.

Department of the Prime Minister and Cabinet response: Agreed.

Recommendation no.3

Paragraph 4.10

The Department of the Prime Minister and Cabinet formalise its evaluation prioritisation process by developing structured criteria for assessing significance, contribution and risk and conducting a strategic analysis of gaps in evaluation coverage.

Department of the Prime Minister and Cabinet response: Agreed.

Summary of entity response

25. The proposed report was provided to the Department of the Prime Minister and Cabinet. The department did not provide a summary response. Its full response is reproduced at Appendix 1.

Key messages for all Australian Government entities

Below is a summary of key messages, including instances of good practice, which have been identified in this audit that may be relevant for the operations of other Australian Government entities.

Performance and impact management

1. Background

Program evaluation

1.1 Program evaluation (commonly referred to as ‘evaluation’) can be defined as the systematic and unbiased assessment of the efficiency, effectiveness or appropriateness of government policies or programs (or parts of policies or programs). Common types of evaluation include process, outcome, impact and economic evaluation. Evaluation forms an important part of the policy cycle, as it can provide insights and suggestions to inform refinements to policies or programs or the design of future interventions. Evaluation also plays a key role in accountability, by providing a means for entities to report to the Government, Parliament and other stakeholders on whether their use of public resources is delivering on objectives and providing benefits.

1.2 Evaluation is closely related to performance monitoring; they can be considered complementary activities. Performance monitoring involves measuring and reporting progress against output and outcome targets (or key performance indicators), whereas evaluation provides a more in-depth assessment of performance. Under the enhanced Commonwealth performance framework, introduced through the Public Governance, Performance and Accountability Act 2013 (PGPA Act), performance monitoring and comprehensive evaluations are identified as key mechanisms that entities can use for reporting on their performance through their annual performance statements.5

Aboriginal and Torres Strait Islander program evaluation

1.3 Over the past decade there has been commentary on the coverage and robustness of monitoring and evaluation of Aboriginal and Torres Strait Islander programs.6

1.4 For example, the issue was raised in the Department of Finance and Deregulation’s 2009 Strategic Review of Indigenous Expenditure, which was commissioned to assess how well Australian Government Aboriginal and Torres Strait Islander programs and whole-of-government coordination arrangements were placed to achieve the Government’s objectives, particularly the Closing the Gap targets. The report stressed the need for a more rigorous approach to program evaluation at a whole-of-government level, including:

- better planning and resourcing of evaluation activity in the design of new Aboriginal and Torres Strait Islander programs (such as putting in place an appropriate evaluation strategy, developing program logics7, and establishing data collection mechanisms and baseline measurements);

- more integrated and better coordinated approaches to performance monitoring and program evaluation;

- more strategic priority-setting and coordination of evaluation effort (such as looking at interactions between programs and the relative efficiency and effectiveness of different approaches);

- greater independence in evaluation teams; and

- the use of more rigorous evaluation methods.8

1.5 A 2011 review by the Closing the Gap Clearinghouse of the overarching themes for successful programs in overcoming Aboriginal and Torres Strait Islander disadvantage found:

- there was a lack of high-quality quantitative evaluations; and

- when quantitative evaluations were conducted, they often did not include comparison groups that would enable the impact of programs or strategies on Aboriginal and Torres Strait Islander disadvantage to be determined.9

1.6 In October 2012 the proceedings of a meeting hosted by the Productivity Commission on the role of evaluation in Aboriginal and Torres Strait Islander policy noted:

Participants argued that it was necessary to have a coherent framework for evaluating Indigenous policies and programs… There was general agreement that evaluation plans should be embedded (and funded) in the design of programs, a practice that should be regarded as ‘a serious part of the policy process’ but is more common in other countries than in Australia. The lack of assessment or evaluation has not only resulted in significant gaps in the Australian evidence base, but has also contributed to ‘a litany of poor policies being recycled’.10

1.7 Subsequently, the Productivity Commission’s National Indigenous Reform Agreement Performance Assessment 2013–14, published in November 2015, argued that there was extensive focus on monitoring broad outcome targets relating to Aboriginal and Torres Strait Islander disadvantage, but little evidence of what works to bridge outcome gaps.11

Evaluating the Indigenous Advancement Strategy

1.8 In September 2013 responsibility for Aboriginal and Torres Strait Islander Affairs was transferred to the Department of the Prime Minister and Cabinet (the department or PM&C) through a machinery of government change, and 27 programs consisting of 150 administered items, activities and sub-activities from eight separate entities were moved into the department. With the introduction of the Indigenous Advancement Strategy (IAS) in 2014, the 27 programs were consolidated into five broad programs under a single outcome, with $4.8 billion initially committed over four years from 2014–15.

1.9 The Australian National Audit Office’s (ANAO’s) performance audit of the IAS (Auditor-General Report No.35 2016–17) found the department did not effectively implement the strategy. The audit report included four recommendations, to which the department agreed, focusing on improving IAS grant administration processes, performance measurement and the operation of its Regional Network. The report also noted that the department did not have a formal evaluation strategy or evaluation funding for the IAS for its first two years.12

1.10 In 2017 the Minister for Indigenous Affairs announced funding of $40 million over four years from 2017–18 to strengthen IAS evaluation. The media release noted that this funding would support a multi-year program of evaluations, underpinned by a formal evidence and evaluation framework to strengthen reporting, monitoring and evaluation at a contract, program and outcome level.13

1.11 From 2017–18 a new IAS program was created (Program 2.6) for evaluation and research into Aboriginal and Torres Strait Islander policies and programs. The IAS program structure and program objectives are presented in Table 1.1.

Table 1.1: Indigenous Advancement Strategy program structure, 2018–19

| Program | Objectives |
| --- | --- |
| 2.1 Jobs, Land and Economy | Get adults into work, foster Aboriginal and Torres Strait Islander business and assist them to generate economic and social benefits from effective use of their land, particularly in remote areas. |
| 2.2 Children and Schooling | Get children to school, particularly in remote Aboriginal and Torres Strait Islander communities, improve education outcomes, including measures to improve access to further education, and support families to give children a good start in life. |
| 2.3 Safety and Wellbeing | Ensure that the ordinary law of the land applies in Aboriginal and Torres Strait Islander communities and ensure they enjoy similar levels of physical, emotional and social wellbeing enjoyed by other Australians. |
| 2.4 Culture and Capability | Support Aboriginal and Torres Strait Islander people to maintain their culture, participate equally in the economic and social life of the nation and ensure that Aboriginal and Torres Strait Islander organisations are capable of delivering quality services to their clients, particularly in remote areas. |
| 2.5 Remote Australia Strategies | Ensure strategic investments in local, flexible solutions based on community and Government priorities. |
| 2.6 Evaluation and Research | Improve the lives of Aboriginal and Torres Strait Islander peoples by increasing evaluation and research into policies and programs impacting on them. |
| 2.7 Program Support | Departmental support program to the six Indigenous Advancement Strategy programs, reflecting the Government’s commitment to reduce red tape and duplication and ensure resources are invested on the ground where they are most needed through the principle of empowering communities. |

Source: PM&C, Portfolio Budget Statements 2018–19, Budget Related Paper No. 1.14, Prime Minister and Cabinet Portfolio, Canberra, 2018, pp. 40–46.

Indigenous Advancement Strategy evaluation framework

1.12 After undertaking public consultation in late 2017, the department released an IAS evaluation framework document in February 2018. The framework document is high-level and principles-based, describes how evaluations of IAS programs should be conducted, and outlines future capacity-building activities and broad governance arrangements. It states that the goals of the IAS evaluation framework are to:

- generate high quality evidence that is used to inform decision-making;

- strengthen Aboriginal and Torres Strait Islander leadership in evaluation;

- build capability by fostering a collaborative culture of evaluative thinking and continuous learning;

- emphasise collaboration and ethical ways of doing high quality evaluation at the forefront of evaluation practice in order to inform decision-making; and

- promote dialogue and deliberation to further develop the maturity of evaluation over time.14

1.13 The department’s Policy Analysis and Evaluation Branch15, within the Indigenous Affairs Group (IAG), is responsible for leading the implementation of the IAS evaluation framework, including developing an annual evaluation work plan, managing strategic evaluations and providing expert advice to IAG program areas on evaluation. IAG program areas are responsible for conducting the majority of program evaluations under the framework. To receive funding for evaluations under Program 2.6 they must approach the evaluation branch and gain approval from the IAG Program Management Board.16 As at April 2019 the evaluation branch had an average of 15.4 full-time equivalent staff working on evaluation.

Rationale for undertaking the audit

1.14 There is strong interest from Parliament and the community in ensuring funding provided through government programs achieves intended outcomes. Effective performance measurement and evaluation is critical to determining whether these outcomes are being achieved.

1.15 The audit was undertaken to provide assurance that the design and early implementation of the department’s evaluation framework for the IAS has been effective. It also provided an opportunity to assess the early impacts of the framework on evaluation practices and inform its ongoing management.

Audit approach

Audit objective, criteria and scope

1.16 The objective of the audit was to examine the effectiveness of the design and implementation of the department’s evaluation framework for the IAS in achieving its purpose to ensure that evaluation is high quality, ethical, inclusive and focused on improving outcomes for Aboriginal and Torres Strait Islander peoples.

1.17 To form a conclusion against this objective, the ANAO adopted the following high level criteria:

- Has the department’s evaluation framework been designed to support the achievement of the Government’s policy objectives? (Chapter 2)

- Is the department’s evaluation framework being effectively implemented and managed? (Chapter 3)

- Are evaluations being conducted in accordance with the department’s evaluation framework to improve outcomes for Aboriginal and Torres Strait Islander peoples? (Chapter 4)

1.18 The audit focussed on evaluation activities conducted by the department since the commencement of the IAS in July 2014, and the development of its evaluation framework over this period. It did not examine evaluations of Aboriginal and Torres Strait Islander programs conducted by other Australian Government agencies or the states and territories.

Audit methodology

1.19 The audit methodology included:

- examining departmental records related to the establishment of the IAS evaluation framework;

- analysis of documentation relating to recent program evaluations conducted by the department; and

- interviews with departmental staff in the evaluation branch and IAG program areas.

1.20 The audit was conducted in accordance with the ANAO auditing standards at a cost to the ANAO of approximately $335,000.

1.21 The team members for the audit were Daniel Whyte, Lynette Tyrrell, David Lacy, Iain Gately, James Woodward and Deborah Jackson.

2. Design of the Framework

Areas examined

This chapter examines whether the Department of the Prime Minister and Cabinet’s (the department’s or PM&C’s) evaluation framework was designed to support the achievement of the Government’s policy objectives for the Indigenous Advancement Strategy (IAS).

Conclusion

Following substantial delays in establishing an evaluation framework, the department is now designing a framework that has the potential to support the achievement of the Government’s policy objectives by strengthening evaluations under the IAS. The design of the framework has been informed by recognised principles of program evaluation, relevant literature, previous evaluation activity and stakeholder feedback. The framework could more clearly link evaluation to the Government’s objectives for the IAS and other relevant strategic frameworks such as Closing the Gap.

Were timeframes for the design of the framework met?

From its initial policy commitment to develop an evaluation framework for the IAS by June 2014, the department set several deadlines for finalising the framework that were not met. In 2017 the department established a dedicated evaluation program under the IAS and committed to establishing a new evaluation framework. By July 2018 most elements of the IAS evaluation framework were in place.

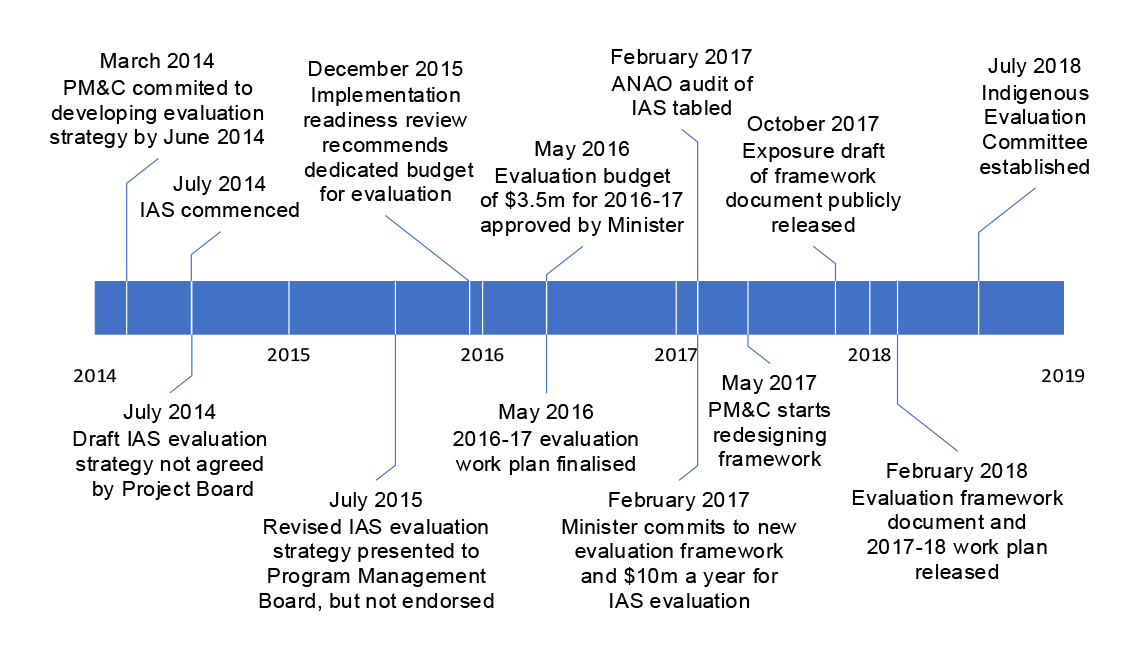

2.1 This section describes the department’s progress in designing the IAS evaluation framework from 2014 to 2018. A high-level timeline for the design of the IAS evaluation framework is shown in Figure 2.1.

Figure 2.1: Timeline of the design of the IAS evaluation framework

Source: ANAO analysis.

Initial work on establishing an IAS evaluation framework

2.2 In March 2014 the department developed an implementation strategy for the IAS in which it committed to developing an evaluation and performance improvement strategy by June 2014, which would be agreed by the Minister for Indigenous Affairs (the Minister). This commitment stated that the strategy would: focus on timely performance measurement and testing how and why interventions work; incorporate a rolling annual plan of priority evaluations; and be overseen by an evaluation committee.

2.3 The evaluation branch presented a draft evaluation and performance improvement strategy to the Indigenous Affairs Reform Implementation Project Board17 (the board) in July 2014. The briefing package included a high-level project plan outlining activities that would be completed in late 2014, such as:

- establishing an evaluation committee;

- finalising the strategy and consulting with Aboriginal and Torres Strait Islander and mainstream stakeholders prior to its implementation;

- finalising the first rolling evaluation work plan; and

- developing business rules (such as policies and procedures relating to evaluation quality and ethics approval).

2.4 The board did not agree to the strategy and, noting a lack of detail about activities, requested that a revised framework be brought to its next meeting in late July 2014. A revised framework was not presented at the board’s next meeting; instead the evaluation branch provided a revised schedule that indicated the draft rolling evaluation work plan would be developed and the evaluation committee established by August 2014. These timeframes were not met.

2.5 In July 2015 the evaluation branch presented a revised draft of the evaluation and performance improvement strategy to the newly-formed Indigenous Affairs Group (IAG) Program Management Board18 for information rather than endorsement. The agenda paper stated that: the branch would come back with a formal process for prioritising evaluations for 2015–16 in a multi-year plan; and, rather than establishing a separate evaluation committee, the board could fulfil this role under its terms of reference.

2.6 In November 2015 an implementation readiness review of the IAS by the Department of Finance recommended that PM&C allocate a dedicated budget for evaluation by June 2016. After consulting with program areas in late 2015, the evaluation branch developed its first rolling evaluation work plan in March 2016. The plan outlined 28 evaluation projects that were underway or expected to commence in 2016 or 2017. In March 2016, on the basis of the implementation readiness review recommendation and 2016 evaluation work plan, the department asked the Minister to agree to expenditure of $7 million over 2016–17 and 2017–18 to fund identified evaluation projects, stating:

[The funding being sought] would only be a fraction of the funding level recommended internationally for evaluation which is in the range of 2–10% of programme funds. … At some point the current situation will become untenable as it is not sustainable to continue to fund activities that lack a good evidence base.
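The scale implied by the department’s statement can be illustrated with some simple arithmetic. The sketch below is not drawn from the department’s brief or the ANAO’s analysis: it assumes, purely for illustration, that the $4.8 billion initially committed to the IAS is spread evenly across its four years, and it compares the $7 million sought over two years with the 2–10 per cent range cited in the quote. The variable and function names are hypothetical.

```python
# Illustrative arithmetic only. The even spread of IAS funding across years
# is an assumption made for this sketch, not a figure from the report.

IAS_COMMITMENT_M = 4800.0   # $4.8 billion over four years from 2014-15 ($ millions)
IAS_YEARS = 4
REQUEST_M = 7.0             # funding sought over 2016-17 and 2017-18 ($ millions)
REQUEST_YEARS = 2
BENCHMARK_LOW = 0.02        # lower end of the 2-10% range cited in the brief
BENCHMARK_HIGH = 0.10       # upper end of the 2-10% range cited in the brief


def evaluation_share(evaluation_m: float, program_m: float) -> float:
    """Return evaluation funding as a fraction of program funding."""
    return evaluation_m / program_m


# Program funding over the two years covered by the request (assumed even spread).
program_two_years_m = IAS_COMMITMENT_M / IAS_YEARS * REQUEST_YEARS

share = evaluation_share(REQUEST_M, program_two_years_m)
low_m = BENCHMARK_LOW * program_two_years_m
high_m = BENCHMARK_HIGH * program_two_years_m

print(f"Assumed program funding over two years: ${program_two_years_m:,.0f} million")
print(f"Evaluation funding sought as a share of that: {share:.2%}")
print(f"2-10% of program funding would be ${low_m:,.0f}-{high_m:,.0f} million")
```

Under the even-spread assumption, the amount sought is well under one per cent of program funding for the same two years, which is consistent with the department’s description of it as ‘only a fraction’ of the cited range.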

2.7 In May 2016 the Minister approved a budget of $3.5 million for 2016–17, but did not approve funding for 2017–18. Later in 2016 the Minister approved two additional evaluation projects, bringing the total evaluation commitment for 2016–17 to around $4.5 million. An internal paper prepared in late 2016 noted: ‘Single year funding makes it impossible to plan and implement evaluations of larger programs as to do such evaluations well can take several financial years from concept to completion’.19

2.8 The ANAO’s performance audit of the IAS (Auditor-General Report No.35 2016–17) noted that the department did not have a formal evaluation strategy or evaluation funding for the IAS for its first two years.20

2018 IAS evaluation framework

2.9 Following the ANAO’s performance audit of the IAS, in February 2017 the Minister announced funding of $10 million a year from 2017–18 to strengthen IAS evaluation.

2.10 With a new IAS program to support evaluation, dedicated multi-year funding and a commitment to underpin evaluation with a formal framework, the department commenced work on redesigning its evaluation framework in May 2017. A paper to the IAG Executive Board in June 2017 outlined an intention to undertake consultation with stakeholders in July 2017 and publicly release a framework document and 2017–18 evaluation work plan in August 2017. The paper also indicated that consideration would be given to establishing an independent Indigenous Evaluation Committee.

2.11 In August 2017 the department provided a draft evaluation framework document, which had been developed in consultation with key stakeholders, to the Minister for noting. The brief stated that the framework document would be released as an exposure draft for three weeks in September 2017. The final framework document was to be provided to the Minister for approval at the end of September prior to its public release. Release of the exposure draft was delayed pending the Minister’s noting of the brief, and it was published on the department’s website for public consultation from 10 to 30 October 2017.

2.12 In October and November 2017 the department finalised the 2017–18 evaluation work plan and gained authority from the Minister to approve the procurement of evaluation services under Program 2.6. It also reviewed submissions and updated the evaluation framework document during November and December 2017.

2.13 In January 2018 the department briefed the Minister that the framework document and work plan would be released on 22 January 2018 and provided copies of the final documents for information. The brief asked the Minister to agree to annual funding of $150,000 from Program 2.6 to support the establishment of an Indigenous Evaluation Committee. The Minister signed the brief on 27 February 2018. He did not agree to fund the committee21 and instructed the department to seek his approval for the membership of the committee.

2.14 The framework document and work plan were released on the department’s website on 28 February 2018. The Minister agreed to the membership of the Indigenous Evaluation Committee and an annual budget of $100,000 in April 2018. The Committee membership was announced in July 2018.

2.15 In summary, following the Minister’s 2017 announcement, the department has delivered most key elements of its evaluation framework (see Table 2.1).

Table 2.1: Delivery dates for 2018 IAS evaluation framework elements

| Framework element | Date |
| --- | --- |
| Dedicated budget for IAS evaluations announced | February 2017 |
| Formal evaluation framework published | February 2018 |
| Annual rolling evaluation work plan first published | February 2018 |
| Indigenous Evaluation Committee members announced | July 2018 |
| Business rules and procedures for evaluation developed | Ongoing |

Source: ANAO analysis.

Has the framework been informed by relevant literature, previous evaluation activity and stakeholder feedback?

The development of the framework was informed by relevant literature, including other entities’ evaluation strategies and recognised program evaluation principles, and the limited evaluation activity previously undertaken in Aboriginal and Torres Strait Islander programs. The department undertook consultation on the framework and incorporated stakeholder feedback into its design.

Relevant literature

2.16 The department made a decision early in the design process that the evaluation framework would be principles-based, and in June 2017 informed the Minister that it would be based on ‘international best practice’. The final framework document included nine ‘best practice principles’, grouped into four broad criteria: relevant, robust, credible and appropriate (see Appendix 2 for a full description of the principles).

2.17 The ANAO analysed the department’s evaluation principles and found they had been informed by recognised program evaluation principles and other Australian Government entities’ evaluation frameworks:

- all principles align with recognised evaluation principles22; and

- seven principles (integrated, evidence-based, impact-focused, transparent, independent, timely and fit-for-purpose) were adapted from principles outlined in evaluation strategies published by other Australian Government departments in 2015.23

2.18 In addition, the published framework document cites a number of relevant publications, including:

- guidance material from the Department of Finance, Australian Institute of Aboriginal and Torres Strait Islander Studies and the ANAO;

- evaluation textbooks and publications on evaluation methods produced by international organisations; and

- academic articles on Aboriginal and Torres Strait Islander program evaluation.

Previous evaluation activity

2.19 Following the machinery-of-government changes in late 2013 that saw the department take responsibility for Aboriginal and Torres Strait Islander Affairs, an internal review of its inherited programs found:

[30 per cent] of the activities transferred into PM&C had been evaluated in the past five years, and only 12 per cent of activities reported having evaluation strategies in place. The fragmentation of programmes exacerbated the evaluation limitations, with 35 per cent of the evaluations provided being only partial evaluations. Only 20 per cent of activities mapped had full evaluations.

More than 50 per cent of the evaluations conducted were process focused evaluations as opposed to outcome evaluations. Of the outcome evaluations, only 30 per cent showed mostly positive outcomes. This equates to only seven per cent of activities being able to show they were achieving mostly positive results against stated objectives.24

2.20 In addition, an internal options paper developed by the department in late 2016 identified two issues with its approach to evaluation under the IAS: a need for evaluation reports and government responses to be made public in a timely way; and lack of ongoing certainty of funding.

2.21 The department has sought to address these issues in its design of the IAS evaluation framework through:

- the ‘integrated’ principle — which includes a focus on early evaluation planning;

- the ‘impact-focused’ principle — which promotes the use of impact evaluation;

- the ‘transparent’ principle — which requires all evaluation reports or summaries to be made publicly available and senior management to provide responses to evaluations, with proposed actions recorded and followed up; and

- the provision of a dedicated budget of $10 million a year for evaluation.

Stakeholder feedback

2.22 During July and August 2017 the department invited comment on the draft framework from identified ‘critical friends’:

- five evaluation specialists from Australian universities;

- three Aboriginal and Torres Strait Islander bodies/organisations — the Lowitja Institute, National Congress of Australia’s First Peoples and Prime Minister’s Indigenous Advisory Council; and

- four Australian Government entities — the Australian Institute of Aboriginal and Torres Strait Islander Studies, ANAO25, Department of Foreign Affairs and Trade and Productivity Commission.

2.23 In addition, as noted in paragraph 2.11 above, an exposure draft of the framework was published on the department’s website for public consultation from 10 to 30 October 2017. The department invited approximately 120 organisations or individuals to provide comment on the exposure draft and received 10 submissions, primarily from Aboriginal and Torres Strait Islander peak bodies and academic evaluation specialists.

2.24 While the department did not maintain complete records of its consultation, there is evidence that stakeholder feedback informed the final design of the framework. Key changes that were made to the framework document in response to stakeholder feedback include:

- creating a separate ‘ethical’ principle under the ‘credible’ criterion;

- increased reference to respect for the values and aspirations of, and collaboration with, Aboriginal and Torres Strait Islander peoples; and

- a commitment to establish an independent evaluation committee, based on the model of the Department of Foreign Affairs and Trade’s evaluation framework for aid programs.

2.25 Further, stakeholders commented on the need for greater clarity on: implementation arrangements, particularly on how increased collaboration with and involvement of Aboriginal and Torres Strait Islander peoples would be operationalised; and how the framework relates to the Closing the Gap framework and evaluating the IAS as a whole. The department considered this feedback, and added a requirement that all evaluations under the IAS build in appropriate collaboration processes, but it did not address the comments on the broader policy context in revisions to the framework document.

Does the framework align with relevant strategic frameworks?

The extent to which the IAS evaluation framework aligns with relevant strategic frameworks is mixed. The framework document includes references to the enhanced Commonwealth performance framework and a future whole-of-government evaluation strategy for policies and programs that affect Aboriginal and Torres Strait Islander peoples. However, it provides limited detail of how evaluations under the framework will assess contribution to Closing the Gap or whether the Government’s policy objectives for the IAS are being achieved.

Alignment with the IAS

2.26 An evaluation framework for a major strategy, such as the IAS, should outline how it will support an assessment of whether the Government’s policy objectives for the strategy are being achieved. Evaluations should consider the extent to which policies or programs are successful in achieving their stated objectives. They should also consider whether policies and programs are aligned with and contribute to the Government’s higher-level objectives (for example, Closing the Gap).

2.27 As noted in paragraph 1.12, the IAS evaluation framework document is a principles-based document that describes how evaluations of IAS programs should be conducted. It states that the framework is designed to ensure evaluations are ‘focused on improving outcomes for Indigenous Australians’ and ‘make a positive contribution to the lives of Aboriginal and Torres Strait Islander peoples’.26 However, it provides little detail about how evaluations under the framework will facilitate an assessment of whether the Government’s policy objectives for the IAS are being achieved. In addition, the IAS evaluation framework document does not include any references to the Government’s five key priority areas for Aboriginal and Torres Strait Islander program investment27 or the IAS program objectives or outcomes.

2.28 The department’s 2018–19 annual evaluation work plan, published in December 2018, outlined a proposed project to develop a strategy to assess the ‘system effectiveness’ of the IAS. In line with this, in February 2019 the department engaged a contractor to develop an outcomes framework for Programs 2.2 (Children and Schooling) and 2.3 (Safety and Wellbeing) by mid-2019, with the intention that it could be extended to apply across the IAS.

Alignment with whole-of-government frameworks

2.29 The ANAO’s recent performance audit of Closing the Gap (Auditor-General Report No.27 2018–19) noted that the department’s IAS evaluation framework document does not include any references to the Closing the Gap framework.28 The document does not articulate how evaluations under the framework will assess the contribution of IAS programs to the Closing the Gap targets.

2.30 At the time the department was developing its IAS evaluation framework, the Australian Government did not have a whole-of-government evaluation-related strategy or framework to guide evaluation activities. Guidance on the Public Governance, Performance and Accountability Act 2013 (PGPA Act) enhanced Commonwealth performance framework provides a description of what evaluation is, typical approaches, and strengths and limitations, but does not outline any standards or requirements for evaluations undertaken within the Australian Government.29 The department’s IAS evaluation framework document references the performance requirements under the PGPA Act, noting that monitoring and evaluation systems have complementary roles in generating evidence.

2.31 Through the 2017–18 Budget, the Government agreed that the Productivity Commission would develop a whole-of-government evaluation strategy for policies and programs that affect Aboriginal and Torres Strait Islander peoples, overseen by a new commissioner with experience in this area. The IAS evaluation framework document states that it is ‘intended to align with the wider role of the Productivity Commission in overseeing the development and implementation of a whole of government evaluation strategy of policies and programs that effect Indigenous Australians’.30 However, due to delays in the passage of legislation that would allow the appointment of a new commissioner and subsequent delays in appointing a commissioner31, the development of the whole-of-government evaluation strategy did not commence until April 2019. The proposed evaluation strategy is to be completed by July 2020 and include: a principles-based framework for evaluation of policies and programs affecting Aboriginal and Torres Strait Islander peoples; the identification of priorities for evaluation; and the development of an approach for reviewing agencies’ conduct of evaluations in accordance with the strategy.

3. Implementation and management of the framework

Areas examined

This chapter examines whether the Department of the Prime Minister and Cabinet (the department or PM&C) is implementing and managing its Indigenous Advancement Strategy (IAS) evaluation framework effectively.

Conclusion

The department’s implementation and management of the IAS evaluation framework is partially effective. Management oversight arrangements are developing, and evaluation advice provided to program area staff has been relevant and high quality. The department has not developed a reliable methodology for measuring outcomes of the framework and its evaluation procedures are still being developed.

Areas for improvement

The ANAO made two recommendations aimed at ensuring the department has: appropriate performance measures for the evaluation framework; and evaluation procedures to support framework implementation. The ANAO also suggested that the department more regularly review and update its project activity schedule and develop a plan for how it will achieve its desired future evaluation maturity levels.

Has the department established an implementation process?

The department has established an implementation process for the IAS evaluation framework, which could be improved through more regular reviews of its project activity schedule. Implementation has included a range of activities designed to improve evaluation quality and build evaluation capability.

3.1 To ensure the effectiveness of a new policy initiative, an implementation plan should be developed prior to the commencement of the initiative that clearly articulates how new processes, programs and services will be delivered on time, on budget and to expectations. Key areas for consideration include project scheduling and management, governance, stakeholder engagement, risk management, monitoring and evaluation, and resource management.32

3.2 Between May and September 2017, during the period it was designing key elements of the IAS evaluation framework, the evaluation branch drafted several incomplete planning documents. These documents outlined planned timeframes for the design, consultation and public release of the IAS evaluation framework document and 2017–18 work plan, but did not include any subsequent implementation activities.

3.3 The framework document, published in February 2018, included commitments to implement various activities designed to improve evaluation quality and build the department’s evaluation capability, including establishing an Indigenous Evaluation Committee (IEC), developing an online evaluation handbook complemented by internal support materials and publishing an annual report on IAS evaluation activities. No timeframes were specified in the framework for the implementation of these activities, and, at the time of its publication, the department did not have an implementation plan outlining how these activities would be delivered.

3.4 The department advised the ANAO that its focus from late 2017 to early 2018 was on establishing the IEC, developing the 2017–18 annual evaluation work plan and establishing arrangements for administering Program 2.6, rather than planning for longer-term framework implementation.

3.5 In mid to late 2018 the evaluation branch completed several implementation planning activities, including developing a program logic, project plan and project activity schedule. The ANAO reviewed the project activity schedule and found all activities the department had committed to in its IAS evaluation framework document could be mapped to an associated activity in the schedule. The majority of these activities had either been completed or were underway. Although some elements of the project activity schedule were updated during the audit, some key activities in the schedule that the department informed the ANAO were underway were still classified as ‘TBA’ or ‘possible future activities’ and had not been assigned delivery timeframes. It would be good practice for the department to review and update its project schedule regularly to ensure the schedule provides an accurate plan for implementation.

Has the department established relevant and reliable performance measures for the framework?

While its performance criterion is relevant, the department has not developed a reliable methodology for measuring the longer-term outcomes of the framework. The department’s performance targets for the IAS evaluation framework focus on the delivery of short-term outputs.

3.6 As noted in paragraph 3.1, establishing appropriate mechanisms for monitoring and evaluating the performance of a policy initiative is an important aspect of implementation planning. The IAS evaluation framework document includes three activities relating to assessing its performance:

- providing cross-cutting evaluations undertaken by the evaluation branch to the IEC for review and publishing the IEC reviews along with the evaluations33;

- publishing an annual report on IAS evaluation activities that includes reviews of randomly selected IAS evaluations; and

- undertaking an independent meta-review of IAS evaluations after three years.

3.7 The department advised that IEC reviews of cross-cutting evaluations and the compilation of an annual report on IAS evaluation would focus on evaluations fully conducted under the framework. As no evaluations had been fully conducted under the framework at the time of this audit, the department had not commenced these activities.

3.8 The evaluation branch has also established performance measures at the departmental and divisional level for assessing the performance of the IAS evaluation framework (see Table 3.1 and Table 3.2). The Portfolio Budget Statement performance criterion is an effectiveness measure and is relevant to the Program 2.6 objective (‘to improve the lives of Indigenous Australians by increasing evaluation and research into policies and programs impacting on Indigenous Peoples’34), but the branch has not developed a reliable methodology for measuring performance against this criterion.35 The majority of its performance targets and measures relate to the delivery of outputs (for example, publishing the annual work plan, establishing the IEC and delivering projects within agreed timeframes).

Table 3.1: Program 2.6 performance criteria for the IAS evaluation framework

| Year | Performance criteria | Targets |
| --- | --- | --- |
| 2018–19 | Increased understanding of whether IAS funding and policies are effective. | Publication of the Annual Evaluation Work Plan taking into account size, reach and ‘policy risk’ of the program or activity and the strategic need of the evaluation. Establishment of an Indigenous Evaluation Committee in 2018 to strengthen the quality, credibility and independence of evaluation activity. |
| 2019–20 and beyond | Increased understanding of whether IAS funding and policies are effective. | Publication of the Annual Evaluation Work Plan each September taking into account size, reach and ‘policy risk’ of the program or activity and the strategic need of the evaluation. |

Source: PM&C, Budget 2018–19, Portfolio Budget Statements 2018–19, Budget Related Paper No. 1.14. Prime Minister and Cabinet Portfolio, 2018, p. 45.

Table 3.2: Divisional performance criteria for the IAS evaluation framework

| Year | Divisional level activity/key performance indicator (KPI) | Measurement |
| --- | --- | --- |
| 2018–19 | Increasing the evidence base and supporting an evidence based culture: Contribute to building the evidence-base so the Group is well-positioned to understand the likely outcome of government investment and using evidence to inform policy and ensure investment is well targeted and adaptable. KPI: The Prime Minister, Minister for Indigenous Affairs, the Cabinet and Executive and stakeholders are highly satisfied with the quality, relevance and timeliness of research and evaluation advice and support provided. | Policy research and evaluation projects are delivered within agreed timeframes. Evaluation Work Plan is approved and published by end Sept 2018. Attendance rates at IAG Seminars. |

Source: PM&C internal documentation.

3.9 An internal audit report completed in May 2018 on the department’s 2018–19 performance measures noted with regard to the measures for Program 2.6:

…the targets are outputs or deliverables rather than outcomes of Program 2.6. This is understandable given that Program 2.6 provides support to the other programs, but it may be beneficial to include an outcome focused criterion/target in the 2018–19 Corporate Plan.

3.10 The report included a recommendation that the department enhance its performance criteria and targets for Program 2.6 in its corporate plan, and suggested a potential target could be ‘the appropriate refinement of IAS policies and strategies based on the outcomes of evaluation and research, evidenced by case studies’. While the department agreed to the recommendation, it did not implement it. The department’s 2018–19 Corporate Plan only includes very high-level performance measures and does not include any performance criteria or targets for Program 2.6.36

3.11 Establishing a dedicated Budget program for evaluation provides transparency about evaluation expenditure and enables high-level monitoring of its performance, providing relevant and reliable performance measures are developed. In line with the internal audit recommendation, the department should develop a reliable methodology for measuring the longer-term outcomes of the evaluation framework. If the methodology involves using case studies, it should ensure that it follows the guidance on using case studies as a reliable measure of performance outlined in Auditor-General Report No.17 2018–19 Implementation of the Annual Performance Statements Requirements 2017–18.37

Recommendation no.1

3.12 The Department of the Prime Minister and Cabinet ensure its performance information for Program 2.6 is supported by a reliable methodology for measuring the longer-term outcomes of better evidence from the evaluation framework.

Department of the Prime Minister and Cabinet response: Agreed.

3.13 The Department agrees there are evaluation capability, accountability and increased transparency objectives (associated with the Framework) that can be measured in the longer term. The Department is considering how best to measure such benchmarks so they provide meaningful information (i.e. a useful feedback loop) on how the Department’s evaluation culture evolves over time. The Department is in the process of developing its methodology for measuring longer-term outcomes of the Framework, with work expected to be completed by mid-2020.

3.14 An important consideration when developing these will be the complex policy space in which the IAS operates. When measuring the Framework’s effectiveness we will need to recognise the role of mainstream service delivery, the tax and transfer system and States and Territories. The role of the Framework is to assess the impact of the department’s policies and programs against their objectives.

Has the department established appropriate procedures, tools, training and communications?

The department is developing procedures and tools for evaluation activities but they are not yet fully accessible or comprehensive. It has facilitated discrete evaluation training activities and evaluation advice provided to program area staff has been relevant and high quality.

Procedures and tools

3.15 To effectively embed better evaluation practices, an entity should provide guidance to staff on its evaluation approach that is supported by clear procedures and training. As discussed in Chapter 2, the development of business rules and procedures to support the evaluation framework is ongoing. The IAS evaluation framework document states that the department will develop:

- resource materials to support and encourage staff, service providers and Aboriginal and Torres Strait Islander communities to do and use evaluation in line with the core values and principles; and

- an online evaluation handbook complemented by internal support materials.

3.16 The department has commenced the development of an online handbook, which it expects will be completed by mid-2019. The handbook is expected to cover a range of different evaluation approaches and methodologies and will be aligned to the goals of the IAS evaluation framework.

3.17 The department also provides procedural guidance and technical evaluation documentation in its evaluation toolkit. The toolkit is accessible from the department’s evaluation intranet site and comprises a range of documents including procedural guidance, tip sheets, templates, research articles and internal and external guest presentations. While the toolkit was developed prior to the release of the 2018 IAS evaluation framework document, some content has been developed or refined since in response to evolving business rules.

3.18 An August 2018 review, commissioned by the evaluation branch, noted that the development of procedural guidance had been an ‘iterative process’, which had caused some confusion for program areas, and the structure of the toolkit made it difficult to navigate. It also noted that there were gaps in the toolkit, as some business rules and procedures had not been finalised. The review recommended a new guidance document hierarchy and identified additional guidance documents that the branch should develop.

3.19 At the time of this audit, the actions recommended in the review had not been implemented and business rules and procedures were still being developed. In particular, there was not adequate guidance on:

- prioritising evaluations;

- collaboration with Aboriginal and Torres Strait Islander peoples on the design, conduct and use of evaluations;

- managing independence of evaluators and evaluation teams; and

- using evaluations to support decision-making and improvements in service delivery.

3.20 The development of IAS evaluation guidance materials is included as an enabling activity in the 2018–19 annual evaluation work plan and the department advised that it was working to develop interactive procedures to support evaluation activities. The department should ensure that adequate guidance is developed on all aspects of the IAS evaluation framework, including its best practice principles, to support the implementation of the framework.

Recommendation no.2

3.21 The Department of the Prime Minister and Cabinet develop a comprehensive and easy to navigate set of procedures to support the implementation of the IAS evaluation framework.

Department of the Prime Minister and Cabinet response: Agreed.

3.22 Work is underway in the Department to set procedures in place, with an emphasis on ease of navigation, comprehensiveness and capability building.

Training

3.23 Since the release of the IAS evaluation framework in 2018, the evaluation branch has facilitated various training activities including:

- presenting a range of short evaluation training sessions targeted at evaluation branch and Indigenous Affairs Group (IAG) program area staff, covering topics such as evaluation terminology, impact evaluation, data analysis and the IAS evaluation framework; and

- contracting the Australian Institute of Aboriginal and Torres Strait Islander Studies to deliver ethics training.

3.24 IAG program managers, interviewed by the ANAO in late 2018, provided feedback that their staff were attending the training provided by the evaluation branch and it was raising awareness about evaluation within their teams. The branch has not analysed the effectiveness of its training activities to assess whether they meet program areas’ needs.

3.25 While the evaluation branch did not conduct a training needs assessment to inform the development of its evaluation training activities, it undertook some early planning on the potential content and structure of an evaluation training package. While the department advised the ANAO that the development of a training package that would support the diverse range of evaluation undertaken in IAG was challenging and resource intensive, it should develop training materials to support IAG staff in core activities such as developing an evaluation strategy or procuring evaluation services.

Communications and engagement

3.26 The evaluation branch finalised a communication and engagement framework in January 2019 that documents its current engagement activities and identifies further opportunities, such as establishing a seminar series, presenting evaluation findings at conferences and updating its intranet site.

3.27 The branch’s primary engagement activity is the technical evaluation advice it provides to IAG program areas. For evaluations funded under Program 2.6, the branch’s process is to review key evaluation procurement documentation (such as requests for tender, ethics reviews, tender assessments and draft contracts), focussing on assessing the methodological rigour of proposed evaluation approaches and value for money.

3.28 The branch conducts a ‘triage’ assessment to determine the team member best placed to assist with required evaluation work and uses a template to document its advice on areas for improvement to program areas. There is close alignment between the templates and the requirements of the evaluation framework. The evaluation branch tracks evaluation activities it is supporting in a SharePoint tool called the Evaluation Tracker. The advisor for each evaluation enters data in the tracker using a ‘code book’, which supports a standardised approach to data entry.

3.29 The processes used by the evaluation branch to provide, manage and track technical advice to program areas are appropriate given they align with the framework and are supported by documented and standardised processes. IAG program managers interviewed by the ANAO reported that the advice provided by the evaluation branch was responsive, high quality and aimed at building evaluation capability within IAG program areas.

Has the department established effective management oversight arrangements?

The department’s management oversight arrangements for the IAS evaluation framework are maturing. A mechanism for monitoring evaluation findings and management responses is being developed.

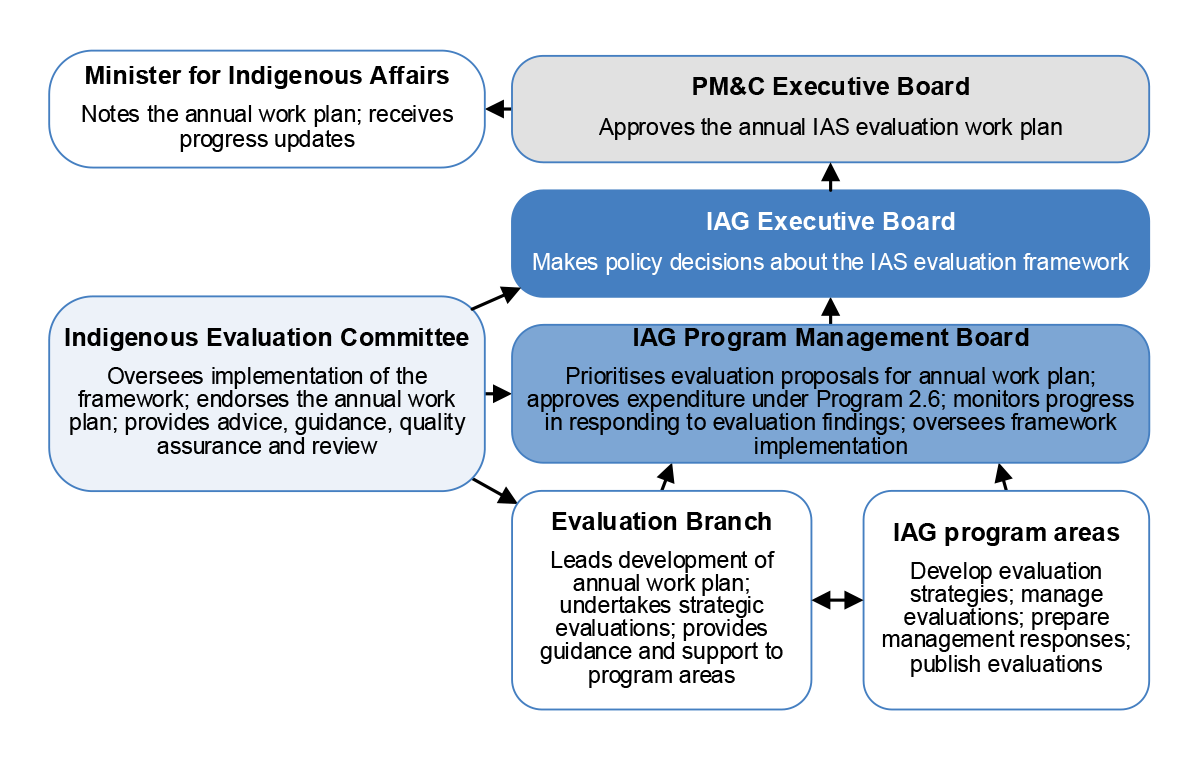

3.30 The IAS evaluation framework document states that clear governance is required to guide roles and responsibilities for IAS evaluation, including prioritising evaluation effort, ensuring evaluation quality, assessing implementation progress, supporting evaluation use and building evaluation capacity. The management oversight structure for the IAS evaluation framework is depicted in Figure 3.1.

Figure 3.1: Oversight structure for IAS evaluation framework

Source: ANAO analysis of departmental documentation.

Management oversight bodies

3.31 The evaluation framework document states that the IAG Executive Board is responsible for approving the annual evaluation work plan and reviewing progress reports against it. There was limited evidence that the IAG Executive Board was involved in the oversight of IAS evaluation activities. The two key oversight bodies have been the IEC and IAG Program Management Board.

Indigenous Evaluation Committee

3.32 The IEC members were announced in July 2018 after the Minister for Indigenous Affairs approved its membership and budget in April 2018. Its terms of reference state that its objective is to strengthen the quality, credibility and influence of the evaluations of policies and programs led by the department through the provision of independent strategic and technical advice.

3.33 As at April 2019 the IEC had met three times and had endorsed the 2018–19 annual evaluation work plan. The IEC expressed concern in late 2018 that a number of completed evaluations referenced in the 2018–19 annual evaluation work plan had not been published. (Compliance with the framework requirement to publish evaluation reports is discussed in more detail in Chapter 4.)

IAG Program Management Board

3.34 The role of the IAG Program Management Board (the board) is to make decisions and provide advice to IAG program areas and the IAG Executive on the implementation of programs, particularly the IAS. The evaluation branch has been working to formalise and expand the role of the board in overseeing the implementation of the IAS evaluation framework and IAS evaluation activity. In February 2018 the branch presented a paper to the board seeking agreement to expand its terms of reference to include:

- supporting the development of the annual evaluation work plan;

- considering evaluation reports in order to integrate findings into policy and program improvement;

- monitoring progress of identified actions from management responses to completed evaluation reports;

- championing the use of evidence in policy development and service delivery; and

- providing oversight for the implementation of measures to support the framework.

3.35 While the board agreed to the proposal in February 2018, its terms of reference had not been updated to reflect the expanded role as at April 2019.

Monitoring evaluation findings and management responses

3.36 Since late 2018 the evaluation branch has been working to clarify roles and responsibilities for monitoring and enforcing compliance with aspects of the evaluation framework; in particular, the requirements outlined in the IAS evaluation framework document that:

all evaluation reports or summaries will be made publicly available … [and]

senior management will provide responses to evaluations following completion, and identified actions will be followed up and recorded.38

3.37 In October 2018 the evaluation branch presented a paper to the Program Management Board seeking its agreement that the Program Office (a separate division within IAG) be tasked with recording and monitoring evaluation findings and subsequent management responses in a central database to support the board’s oversight role. The board agreed that it had a role in monitoring evaluation findings and management responses and asked the evaluation branch to submit a paper to the IAG Executive Board to ensure the right mechanism is developed to fulfil this function.

3.38 An internal audit of the IAS evaluation framework, completed in November 2018, also recommended that an action owner be identified for all IAS evaluation recommendations and that a formal process be developed for tracking and monitoring the implementation of actions arising from evaluations. The department agreed with the recommendation, stating that the IAG Executive Board would decide the most appropriate mechanism for implementation.

3.39 Rather than submitting a paper to the IAG Executive Board, in April 2019 the evaluation branch gained approval from the Associate Secretary, the Chair of the IAG Executive Board, for the implementation of a range of activities to strengthen governance arrangements and the role of the IAG Program Management Board in policy development and evaluation oversight, including the tracking and reporting of agreed action items from management responses.

3.40 The department started tracking the status of action items arising from completed evaluations and action owners in a spreadsheet from February 2019, and it is scoping requirements for a more advanced system to capture evaluation findings and recommendations, and management responses.

Is a culture of evaluative thinking being developed within the Indigenous Affairs Group?

There are early indications that the implementation of the IAS evaluation framework may be developing a culture of evaluative thinking within IAG. Developing a plan for how framework implementation activities will lead to the desired changes in maturity would assist the department in achieving its future maturity levels.

3.41 One of the goals of the IAS evaluation framework is to ‘build capability by fostering a collaborative culture of evaluative thinking and continuous learning’.39 The framework seeks to build this culture of evaluative thinking through implementing activities under three streams: collaboration, capability and knowledge.

3.42 In May 2018 the evaluation branch developed a maturity model for the implementation of the framework. The maturity model describes the department’s self-assessed level of evaluation maturity in June 2017 and outlines where it would like the maturity level to be in June 2019, June 2020 and June 2021, but does not explain how this will be achieved (the maturity model is shown at Appendix 3). By June 2019 the model anticipates that ‘appreciation of the benefits of evaluation [would be] improving’ and ‘evaluation [would be] viewed as core business for the Group, not simply a compliance activity’.

3.43 To assist in understanding current levels of knowledge and awareness about evaluation, the evaluation branch conducted a survey of IAG program area staff from December 2018 to January 2019. The results for questions relating to support for and perceptions of evaluation indicate a majority of staff (89 per cent) see evaluation as important and roughly half (51 per cent) agree that senior executives in their division support or promote evaluation (see Figure 3.2).

Figure 3.2: IAG staff survey results on evaluation perceptions and support

Source: PM&C internal documentation.

3.44 The ANAO also conducted interviews with IAG program managers in late 2018. Most managers interviewed demonstrated an understanding of the value of evaluation, were aware of the evaluation framework and annual work plan processes and were able to provide examples of evaluations that were being conducted in their program areas.

3.45 Embedding an evaluative culture within an entity can take several years and requires appropriate resourcing, expertise in change management and strong commitment from senior management. Survey results and feedback from program areas suggest that the implementation of the IAS evaluation framework may be starting to generate the desired cultural change within IAG. Developing a plan for how framework implementation activities will lead to the desired changes in maturity would assist the department in achieving its future maturity levels.

3.46 The department’s dedicated evaluation funding, specialised evaluation support function and increased level of oversight of evaluation activities are positive indicators for future culture change.

4. Application of the framework

Areas examined

This chapter examines whether evaluation activities are conducted in accordance with the Department of the Prime Minister and Cabinet’s (the department’s or PM&C’s) Indigenous Advancement Strategy (IAS) evaluation framework.

Conclusion

As the department is still developing procedures to support the application of the IAS evaluation framework, it is too early to assess whether evaluations are being conducted in accordance with the framework. The department’s approach to prioritising evaluations should be formalised by developing structured criteria for assessing significance, contribution and risk. The department has taken recent steps to: mandate early evaluation planning; publish completed evaluations; and ensure findings are acted upon.

Areas for improvement

The ANAO made one recommendation aimed at formalising the prioritisation of evaluation activities. The ANAO also suggested developing procedures to support the application of better practice principles.

4.1 Since the publication of the 2018 IAS evaluation framework document, the department’s evaluations of Aboriginal and Torres Strait Islander programs have been guided by a set of nine best practice principles: integrated, respectful, evidence-based, impact-focused, transparent, independent, ethical, timely and fit-for-purpose (see Appendix 2 for descriptions of the principles).

4.2 To assess the extent to which the department has been applying the principles, the ANAO examined departmental business processes and documentation, and conducted interviews with Indigenous Affairs Group (IAG) program managers. To obtain a baseline assessment of the application of the best practice principles prior to the implementation of the IAS evaluation framework, the ANAO analysed 29 evaluations or reviews listed in the department’s 2018–19 evaluation work plan that were underway as at October 2018 or had been completed in 2017 or 2018 (a list of evaluations and reviews in the 2018–19 work plan and their status is at Appendix 4). Table 4.1 outlines the best practice principles examined in each sub-section of the chapter.

Table 4.1: Best practice principles examined in this chapter

| Best practice principles | Chapter sub-section |
| --- | --- |
| Fit-for-purpose | Are evaluations being effectively prioritised by considering coverage, significance, contribution and risk? |
| Integrated; Timely | Are evaluations being planned at an early stage of program development? |
| Respectful; Independent; Ethical | Are evaluations being designed to be respectful, independent and ethical? |
| Evidence-based; Impact-focused | Are evaluations impact-focused and evidence-based? |
| Transparent; Integrated | Are evaluation findings being published and used to support decision-making and improvements in service delivery? |

Source: ANAO.

Are evaluations being effectively prioritised by considering coverage, significance, contribution and risk?

The department has implemented a process for prioritising evaluations under the IAS evaluation framework through development of its annual evaluation work plan. Formalising this process by developing structured criteria for assessing significance, contribution and risk and conducting a strategic analysis of gaps in evaluation coverage would aid the department in employing a consistent and robust approach to prioritising evaluations.

Prioritisation processes

4.3 Under the IAS evaluation framework’s ‘fit-for-purpose’ best practice principle, the scale of effort and resources devoted to evaluations should be proportional to the potential significance, contribution or risk of the program or activity. In line with this, the IAS evaluation framework document states:

Prioritisation will consider significance, contribution and risk. Significant, high risk programs/activities will be subject to comprehensive independent evaluation. While evaluation priorities will be identified over four years, priority areas remain flexible in order to respond to changing circumstances.40

4.4 Since 2016 the department’s mechanism for prioritising evaluation activities has been through the development of three annual evaluation work plans:

- a 2016 work plan finalised in March 2016 and published on its intranet;

- a 2017–18 work plan published on its website in February 2018; and

- a 2018–19 work plan published on its website in December 2018.

4.5 From 2019–20, the department has committed to publishing its annual evaluation work plan by September each year.

4.6 While different processes were used to develop these work plans, each involved cataloguing existing evaluation activities and conducting a ‘bottom-up’ process to identify new activities. This process involved three steps: the evaluation branch consulting other areas of IAG to identify and develop evaluation proposals; the branch assessing the technical merit, significance, contribution and risk for submitted proposals; and oversight bodies considering and endorsing the draft plan.

4.7 The IAS evaluation framework document provides high-level definitions of significance, contribution and risk, but no detail on how to operationalise these criteria in a prioritisation process. The evaluation branch has draft internal working documents outlining prioritisation approaches used by other entities, but has not developed procedural guidance for the department. In interviews with the ANAO, IAG program managers reported that they did not have structured mechanisms for determining evaluation priorities within their program areas. Consequently, in identifying proposals for the annual work plan it is possible that key priority areas may be missed. Developing structured criteria for assessing significance, contribution and risk would aid the department in employing a consistent and robust approach to prioritising evaluations.

Evaluation coverage

4.8 The IAS evaluation framework document notes: ‘it is not possible nor desirable to evaluate all activities funded by the Department. Sometimes monitoring is sufficient’.41 It also notes the evaluation framework was designed to operate ‘in tandem’ with the department’s IAS performance framework, a separate internal framework maintained by the Program Office that covers the monitoring of all IAS grants.

4.9 At the request of the Indigenous Evaluation Committee (IEC), the department undertook analysis of the geographical spread of evaluations to inform its 2018–19 work plan. However, since the 2013 review discussed in paragraph 2.19, it has not undertaken a strategic ‘top-down’ review of its programs, administered items, activities and sub-activities to identify any gaps in evaluation coverage or determine which areas are of greatest significance, contribution or risk. Undertaking such an analysis, with structured criteria for assessing significance, contribution and risk, would enable the department to have greater assurance that its evaluations are being prioritised appropriately.

Recommendation no.3

4.10 The Department of the Prime Minister and Cabinet formalise its evaluation prioritisation process by developing structured criteria for assessing significance, contribution and risk and conducting a strategic analysis of gaps in evaluation coverage.

Department of the Prime Minister and Cabinet response: Agreed.

4.11 The Department will continue developing its operational evaluation criteria to assist decision makers in their judgements about how to prioritise future evaluation efforts. This however will not be an overly prescriptive or onerous formal assessment and a level of judgement will always be required in priority setting. Any criteria should be designed to provide guidance on whether an evaluation is required, and if so help assess the feasibility of a quality evaluation (given any data, time or other constraints or opportunities). Evaluations should not be undertaken unless a quality evaluation can be assured. The objective is not to increase the number of evaluations undertaken, but to embed rigorous and evidence-based evaluation to inform good policy design.

Are evaluations being planned at an early stage of program development?