Future Submarine — Competitive Evaluation Process

The objective of this audit was to assess the effectiveness of Defence’s design and implementation of arrangements to select a preferred Strategic Partner for the Future Submarines Program.

Summary and recommendations

Background

1. In February 2015, the Government announced an acquisition strategy for Australia’s Future Submarine, involving a competitive evaluation process.1 The Future Submarine will replace the Royal Australian Navy’s six Collins Class Submarines which, without an extension to their service life, are due to be withdrawn from service by 2036.

2. The competitive evaluation process was not aimed at eliciting and assessing a full design for the Future Submarine, or identifying firm cost and schedule data. These processes will be undertaken with the successful international partner subsequent to the competitive evaluation. Direction de Constructions Navales Services (DCNS) of France; ThyssenKrupp Marine Systems GmbH (TKMS) of Germany; and the Government of Japan participated in the competitive evaluation process. The Australian Government announced DCNS as the successful International Partner on 26 April 2016:

DCNS of France has been selected as our preferred international partner for the design of the 12 Future Submarines, subject to further discussions on commercial matters.2

3. The objective of the audit was to assess the effectiveness of Defence’s design and implementation of arrangements to select a preferred international partner for the Future Submarine program (SEA 1000). To form a conclusion against the objective, the ANAO adopted the following high-level audit criteria:

- Defence designed a fit-for-purpose process for evaluating and selecting an international partner for the Future Submarine program, and to support the establishment of a sovereign capability to sustain the Future Submarine fleet.

- Defence effectively implemented the agreed evaluation process to select an international partner for the Future Submarine program, and to support the establishment of a sovereign capability to sustain the Future Submarine fleet.

Conclusion

4. Defence effectively designed and implemented a competitive evaluation process to select an international partner for the Future Submarine program.

Supporting findings

Design of the competitive evaluation process

5. Defence designed a fit-for-purpose process to evaluate and select an international partner for Australia’s Future Submarine program.

- Defence determined the suitability of the three participants based on their proven ability to design and build diesel-electric submarines.

- Defence established an evaluation organisation that provided appropriate governance and oversight, including from an independent expert advisory panel that provided assurance and advice directly to the Government.

- Defence developed a suite of preliminary capability requirements to inform the participants of its strategic and operational expectations of the Future Submarine fleet.

- Defence developed and documented a competitive evaluation framework which included: a comprehensive set of criteria; an evaluation methodology with clear and consistent assessment processes; and clearly defined roles and responsibilities.

Implementation of the competitive evaluation process

6. Defence effectively implemented the competitive evaluation process:

- Defence implemented the required probity procedures relating to the identification and management of conflicts of interest and confidentiality. All staff and contractors were required to attend probity briefings and complete declarations of conflicts of interest.

- Defence implemented procedures to maintain a consistent approach when interacting with the participants at the workshops, review sessions, and when answering questions.

- Payments made to the participants were approved as required by the Public Governance, Performance and Accountability Act 2013 (Cth).

- Defence implemented the documented evaluation procedures to measure the performance of the participants against the criteria. This allowed the individual evaluation working groups to produce well-reasoned conclusions. These conclusions were incorporated into the Final Evaluation Board Report which provided a clear justification for the selection of DCNS as the international partner.

7. Defence appropriately advised the Government of the outcome of the competitive evaluation process:

- The Minister for Defence and Defence provided advice to the Australian Government in April 2016 recommending DCNS as the preferred international partner.

- The advice to government was detailed, and provided a comprehensive analysis of the performance of each of the participants in the competitive evaluation and their respective rankings.

- The advice clearly identified the risks and caveats in proceeding with DCNS as the preferred international partner. Defence advised that it was confident it could mitigate and resolve these issues through further negotiation with DCNS.

- The Government approved DCNS as the preferred international partner on 19 April 2016.

Summary of Defence’s response

8. Defence’s full response appears in Appendix 1 of this report. A summary of Defence’s response is below:

Defence acknowledges the findings of the ANAO Report on the Future Submarine—Competitive Evaluation Process.

The Future Submarine Program will involve the design and construction of a submarine to meet Australia’s unique capability requirements. Submarine design and construction are complex activities, the success of which depends on the commitment of dedicated resources by both the Australian Government and the designer/builder. Rather than competition, it remains the view of Defence that the most appropriate balance of capability, cost and schedule is derived through deep engagement with the designer throughout the design process, alongside focussed preparations for construction.

The purpose of the Future Submarine Competitive Evaluation process was to select the most suitable international partner to work with Australia to develop and deliver the Future Submarine.

Following the Government’s announcement of Direction de Constructions Navales Services (DCNS) as the preferred international partner for the Future Submarine Program on 26 April 2016, activities have progressed to include the start of mobilisation, design and work under contract. The engagement of Lockheed Martin Australia as the combat system integrator, and the negotiation of the Framework Agreement between the Government of Australia and the Government of the French Republic concerning Cooperation on the Future Submarine Program have also progressed.

Defence anticipates and welcomes the ongoing review of the Future Submarine Program by the ANAO.

1. Background

Introduction

1.1 The Royal Australian Navy (Navy) currently operates a fleet of six Collins Class submarines. Without an extension to their service life, the first Collins is due to be withdrawn from service in 2026, with the remainder of the fleet to be retired by 2036. The Defence White Paper 2009 identified that a new submarine platform would be acquired to replace the Collins fleet, with the first new submarine to enter service in 2032–33.

The competitive evaluation process

1.2 In February 2015, the Government announced an acquisition strategy for Australia’s Future Submarine, involving a competitive evaluation process.3 The Government announced that Direction de Constructions Navales Services (DCNS) of France; ThyssenKrupp Marine Systems GmbH (TKMS) of Germany; and the Government of Japan would be invited to participate in the competitive evaluation, with final responses due from each participant on 30 November 2015. The Government announced that:

As part of this competitive evaluation process, the Department of Defence will seek proposals from potential partners for:

- Pre-concept designs based on meeting Australian capability criteria;

- Options for design and build overseas, in Australia, and/or a hybrid approach;

- Rough order of magnitude (ROM) costs and schedule for each option; and

- Positions on key commercial issues, for example intellectual property rights and the ability to use and disclose technical data.4

1.3 Defence was tasked with designing and implementing the competitive evaluation process. The competitive evaluation was to be completed by March 2016.

1.4 Defence defined the term ‘competitive evaluation’ to its Minister in October 2015:

A competitive evaluation process comprises an evaluation of two or more options under a common evaluation framework. The common evaluation framework would address a range of criteria, which could include matters such as capability, interoperability, cost, schedule and commercial issues.

Defence undertakes numerous kinds of competitive evaluation processes that are not necessarily based on a request for tender. Risk reduction and design processes, feasibility studies, and offer definition and refinement processes, are some examples of different kinds of processes run by Defence that are competitive but not necessarily based on request for tenders.

1.5 The competitive evaluation process was not aimed at eliciting and assessing a full design for the Future Submarine, or identifying firm cost and schedule data. These processes will be undertaken with the successful international partner subsequent to the competitive evaluation.

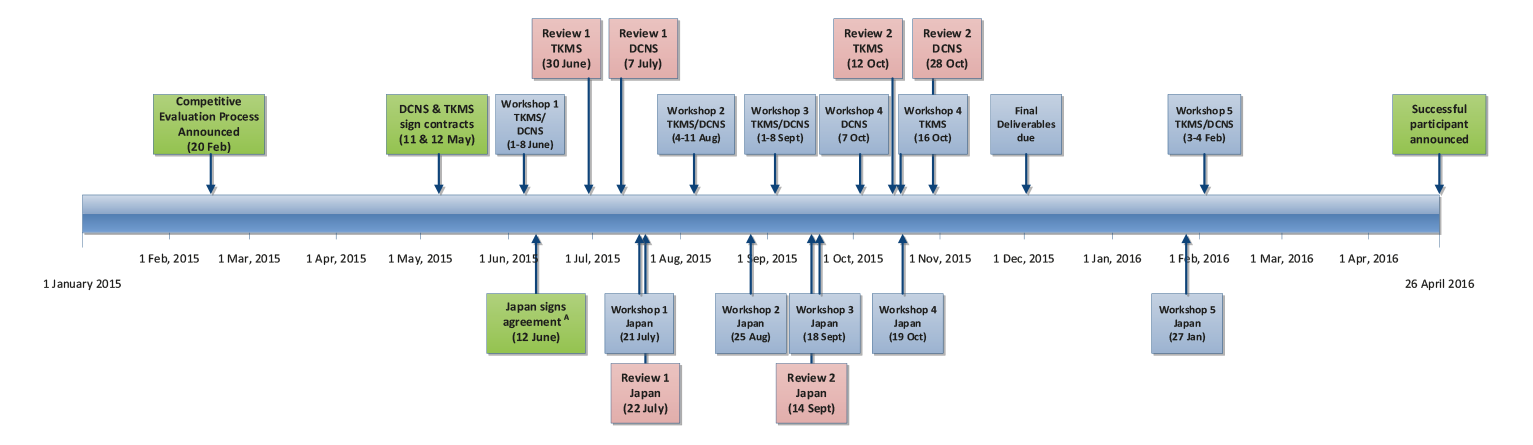

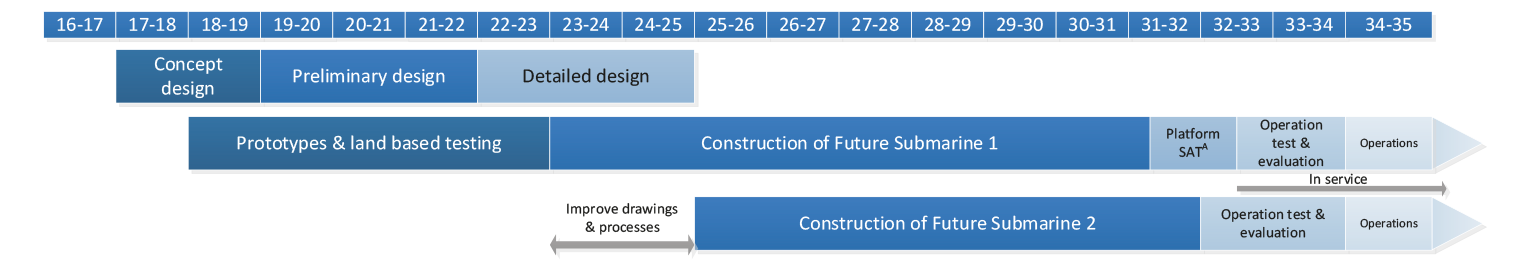

1.6 Figure 1.1 identifies the timeline for the competitive evaluation process. Figure 1.2 shows the Future Submarine program schedule subsequent to the completion of the competitive evaluation process.

Figure 1.1: Timeline for the competitive evaluation process

Note A: The relationship with Japan was governed by an inter-government agreement.

Source: ANAO analysis of Defence documents.

Figure 1.2: Future Submarine program indicative schedule

Note A: Ship Acceptance Trials (SAT).

Source: ANAO analysis of Defence documents.

Timeline of the competitive evaluation process

1.7 As outlined in Figure 1.1, the participants were required to attend a number of workshops and reviews as well as deliver three responses to Defence: an initial response—the outline deliverables; an intermediate response—the draft deliverables; and the completed response—the final deliverables.

1.8 On 26 April 2016, the Prime Minister announced that DCNS had been selected as the Government’s preferred international partner for the Future Submarine program. The Defence Minister stated that the competitive evaluation process had considered cost, schedule, program execution, through-life support and Australian industry involvement, and that DCNS was best placed to meet the Government’s key requirements.5

Navy’s submarine capability

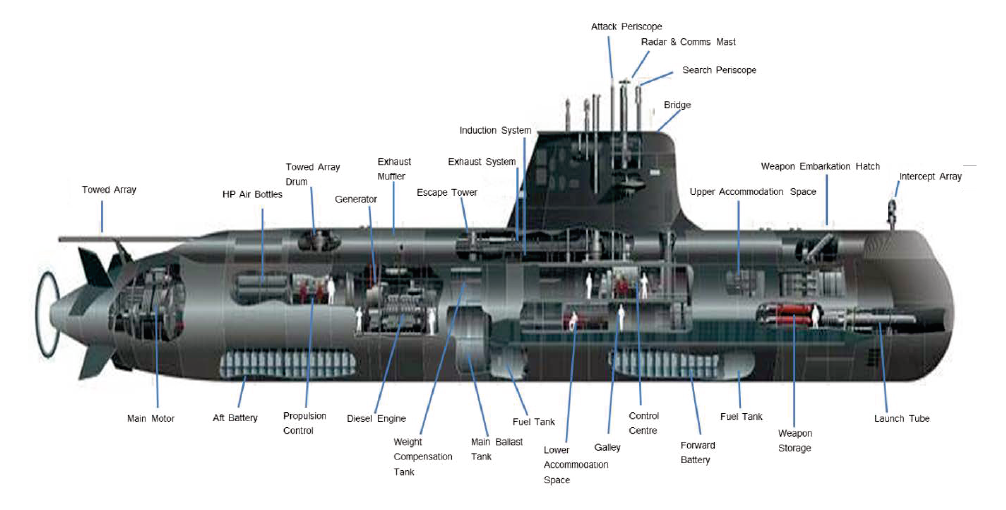

1.9 The Government has decided that the Future Submarine will be a diesel-electric platform.6 Diesel-electric submarines rely on diesel engines to power generators, which in turn charge the submarine’s main storage batteries. Electricity from the main storage batteries provides power to the submarine’s propulsion system, and other electrically powered systems. Charging of the batteries occurs on the surface or when the submarine is at a shallow depth and can raise a snorkel to induct air and discharge emissions to operate the diesel engines.

1.10 Diesel-electric submarines require a significant amount of area within the boat to position the batteries. The challenge in designing a diesel-electric submarine is to achieve a balance between the amount of time the submarine is required to remain close to the surface to deploy the snorkel7, the amount of power that can be stored in its batteries, and the power consumption of the submarine when it is operating off its batteries.
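
The balance described in paragraph 1.10 can be made concrete with a small calculation. The sketch below is illustrative only: the function name and every numeric value are hypothetical and are not drawn from Defence or ANAO data. It assumes a constant electrical load while the submarine runs on its batteries and a constant net charging power while it is snorkelling.

```python
def surface_time_fraction(battery_capacity_kwh: float,
                          load_kw: float,
                          net_charge_kw: float) -> float:
    """Fraction of each charge/discharge cycle spent snorkelling.

    Simplifying assumptions (hypothetical model): the load is constant while
    running on batteries, and the net charging power (generator output minus
    the load carried while charging) is constant while snorkelling.
    """
    if min(battery_capacity_kwh, load_kw, net_charge_kw) <= 0:
        raise ValueError("all inputs must be positive")
    hours_on_battery = battery_capacity_kwh / load_kw
    hours_snorkelling = battery_capacity_kwh / net_charge_kw
    return hours_snorkelling / (hours_on_battery + hours_snorkelling)


# Hypothetical figures, chosen only to show the direction of the trade-off.
baseline = surface_time_fraction(battery_capacity_kwh=1000, load_kw=100, net_charge_kw=900)
higher_load = surface_time_fraction(battery_capacity_kwh=1000, load_kw=300, net_charge_kw=900)
print(f"baseline: {baseline:.2f}, higher load: {higher_load:.2f}")
```

With these made-up inputs, tripling the power consumption raises the fraction of time spent near the surface from 0.10 to 0.25. In this simplified model the battery capacity cancels out of the fraction and instead determines how long each submerged period lasts.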

1.11 Figure 1.3 provides an illustration of Navy’s current diesel-electric submarine platform, the Collins Class.

Figure 1.3: Collins Class Submarine

Source: Department of Defence.

Audit approach

1.12 The Future Submarine program has commenced the early phases of its design work, which will increase in scale in 2017 and beyond. Due to the program’s cost, longevity and risk, this audit is the first in a series of audits that will be undertaken by the ANAO, to provide assurance on the program’s progress.

1.13 The objective of the audit was to assess the effectiveness of Defence’s design and implementation of arrangements to select a preferred international partner for the Future Submarine program (SEA 1000). To form a conclusion against the objective, the ANAO adopted the following high-level audit criteria:

- Defence designed a fit-for-purpose process for evaluating and selecting an international partner for the Future Submarine program, and to support the establishment of a sovereign capability to sustain the Future Submarine fleet.

- Defence effectively implemented the agreed evaluation process to select an international partner for the Future Submarine program, and to support the establishment of a sovereign capability to sustain the Future Submarine fleet.

1.14 The audit’s scope included Defence’s design and implementation of the competitive evaluation process, including: the development of the evaluation framework and criteria; how the potential international partners (the participants) were shortlisted; and the assessment process. The audit method included a review of records and data held by Defence, particularly the Capability Acquisition and Sustainment Group, the Royal Australian Navy, and the former Capability Development Group. The ANAO also interviewed key Defence personnel.

1.15 The audit was conducted in accordance with ANAO auditing standards at a cost to the ANAO of approximately $385 000.

1.16 Team members for this audit were Alex Wilkinson, Sonia Pragt, Dr Jordan Bastoni and Michelle Page.

2. Design of the competitive evaluation process

Areas examined

This chapter examines Defence’s planning and design of the competitive evaluation process to select an international partner for the Future Submarine program.

Conclusion

Defence effectively designed a competitive evaluation process to select an international partner for the Future Submarine program.

Did Defence design a fit-for-purpose process to evaluate and select an international partner for Australia’s Future Submarine program?

Defence designed a fit-for-purpose process to evaluate and select an international partner for Australia’s Future Submarine program.

- Defence determined the suitability of the three participants based on their proven ability to design and build diesel-electric submarines.

- Defence established an evaluation organisation that provided appropriate governance and oversight, including from an independent expert advisory panel that provided assurance and advice directly to the Government.

- Defence developed a suite of preliminary capability requirements to inform the participants of its strategic and operational expectations of the Future Submarine fleet.

- Defence developed and documented a competitive evaluation framework which included: a comprehensive set of criteria; an evaluation methodology with clear and consistent assessment processes; and clearly defined roles and responsibilities.

The potential International Partners

2.1 Defence determined that the Future Submarine would be designed and built by a proven submarine designer with recent experience in designing and building diesel-electric submarines. Defence analysis concluded that Direction de Constructions Navales Services (DCNS) of France, ThyssenKrupp Marine Systems GmbH (TKMS) of Germany and the Government of Japan were the only viable potential international partners that met this requirement and could proceed to the competitive evaluation process.8

Defining the required capability

2.2 The 2009 Defence White Paper established the broad requirements of the Future Submarine and identified a requirement for 12 submarines.9 In 2012, Defence identified four possible options for designing and building its Future Submarine:

- military off-the-shelf design;

- an ‘Australianised’ military off-the-shelf design;

- enhancement of the Collins class design; and

- a new submarine design.

2.3 In 2014, Defence identified that there was no existing military off-the-shelf submarine design that met its operational requirements. In addition, Defence engaged an external review to determine the viability of enhancing the Collins design. The external review concluded that an enhanced Collins design would require the same budget, schedule and contingency as a new design, and would have significant engineering constraints such as hull diameter. On this basis, Defence determined that a new design was the preferred option for the Future Submarine.

2.4 Defence developed a suite of preliminary capability definition documents to signal its expectations for the Future Submarine platform. These documents informed the capability requirements for the competitive evaluation. The documents included:

- a Preliminary Operational Concept Document: from which mission profiles were developed to inform participants of Defence’s operational requirements; and

- a Preliminary Function and Performance Specification document: which defined Defence’s preliminary requirements of the system in terms of functions and how well these functions should be performed.10

Preliminary Operational Concept Document

2.5 The Preliminary Operational Concept Document defined Defence’s capability requirements of the Future Submarine. The document contained Defence’s operational needs and measures of effectiveness. The measures of effectiveness related to: the individual submarine; the submarine fleet; and the associated support system. The document contained:

- a set of defined mission scenarios;

- a description of the existing submarine system (Collins Class); and

- a functional analysis of the operational needs within the context of the defined mission scenarios.

2.6 The document detailed 154 requirements of the Future Submarine system across six categories:

- operational activities;

- enabling activities;

- seamanship activities;

- emergency activities;

- administrative and support activities; and

- support system activities.

Preliminary Function and Performance Specification

2.7 In 2014, Defence developed an in-house conceptual design for its Future Submarine to establish and consolidate its engineering and capability requirements. The data suite from this design formed the basis of the Preliminary Function and Performance Specification detailing Defence’s requirements for the Future Submarine. The requirements detailed the functions, characteristics, performance and interfaces required for the Future Submarine to meet the measures of effectiveness contained in the Preliminary Operational Concept Document.11 In addition to the preliminary concept design data, the Preliminary Function and Performance Specification was informed by:

- the empirically observed performance of the Collins Class submarine and its support system;

- the original Collins Class specification;

- Navy’s Materiel Requirement Set;

- system performance modelling; and

- the judgement and experience of operators and other subject matter experts.

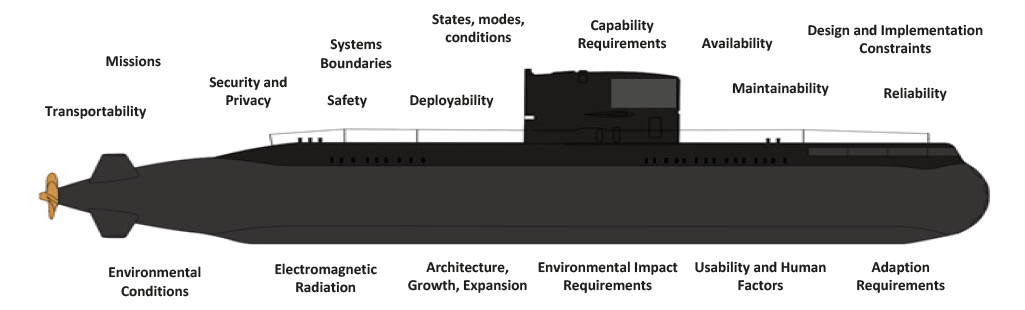

2.8 The Specification contained 625 requirements across 19 categories, which are set out in Figure 2.1.

Figure 2.1: Future Submarine—Preliminary Function and Performance Specification categories

Source: ANAO analysis of Defence documents.

Competitive evaluation framework

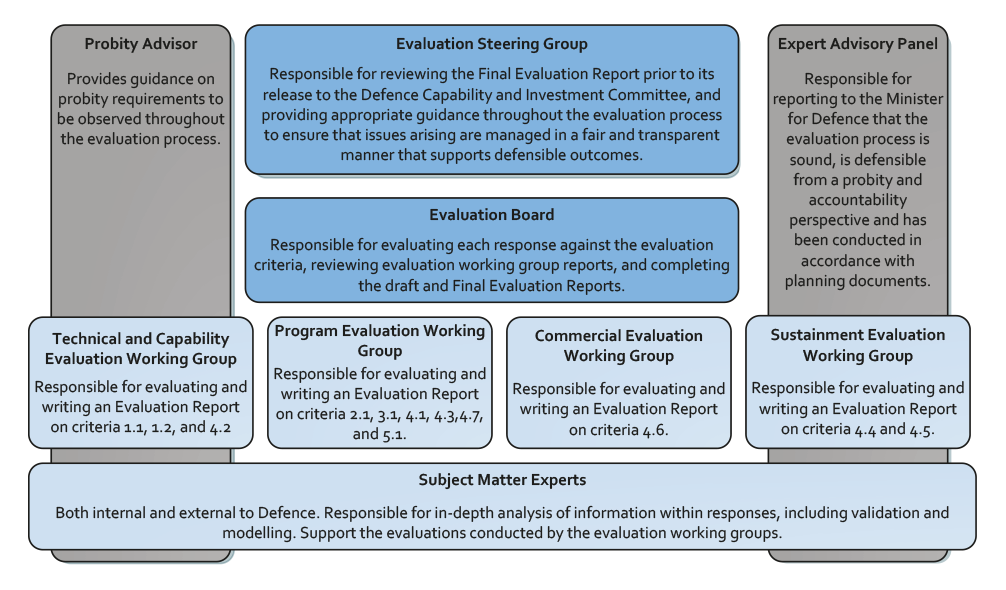

2.9 Defence designed a fit-for-purpose framework to assess the capacity of the three participants to effectively partner with Australia on the Future Submarine program, and to support the establishment of a sovereign capability to sustain the Future Submarine. The framework was underpinned by the SEA 1000 Competitive Evaluation Plan which established the structures and procedures for undertaking the competitive evaluation, including a competitive evaluation organisation (see Figure 2.2), a probity framework and evaluation criteria.

The competitive evaluation organisation

Figure 2.2: Competitive evaluation organisation

Source: ANAO analysis of Defence documents.

Probity Framework

2.10 The competitive evaluation framework incorporated comprehensive probity procedures that aimed to ensure the competitive evaluation was conducted in a manner that was defensible, transparent and fair. The probity framework was required to take account of the national security implications of the Future Submarine program, and the effect that a breakdown in probity processes could have on the submarine’s potential strategic advantage. Defence engaged the Australian Government Solicitor (AGS) to provide independent specialist services to develop and manage a probity framework. The AGS endorsed the probity procedural documentation, and provided ongoing support and advice to the competitive evaluation process through its role as probity advisor. Probity arrangements were documented in:

- The SEA 1000 Legal Process and Probity Framework—outlining the key probity principles and responsibilities of each of the parties involved in the competitive evaluation process including Defence officers and external personnel.12

- The competitive evaluation process Probity Plan (Probity Plan)—outlining the key probity principles which included:

  - compliance with legislative and regulatory requirements;

  - security and confidentiality;

  - identification and management of all actual, potential and perceived conflicts of interest;

  - acting in an ethical manner;

  - fair and equitable treatment of participants; and

  - establishing and maintaining a clear audit trail.

2.11 In September 2016, the ANAO interviewed officers of the AGS, who advised that the probity framework developed for the Future Submarine competitive evaluation process was the most comprehensive they had developed for a Defence acquisition.

The competitive evaluation criteria

2.12 After the Government’s announcement in February 2015 to proceed to a competitive evaluation process, Defence established the criteria to select its international partner. The criteria covered a broad and appropriate range of issues that Defence would consider to choose its international partner, including:

- capability13;

- cost;

- schedule;

- program implementation (including sustainment and Australian industry involvement14); and

- risk.

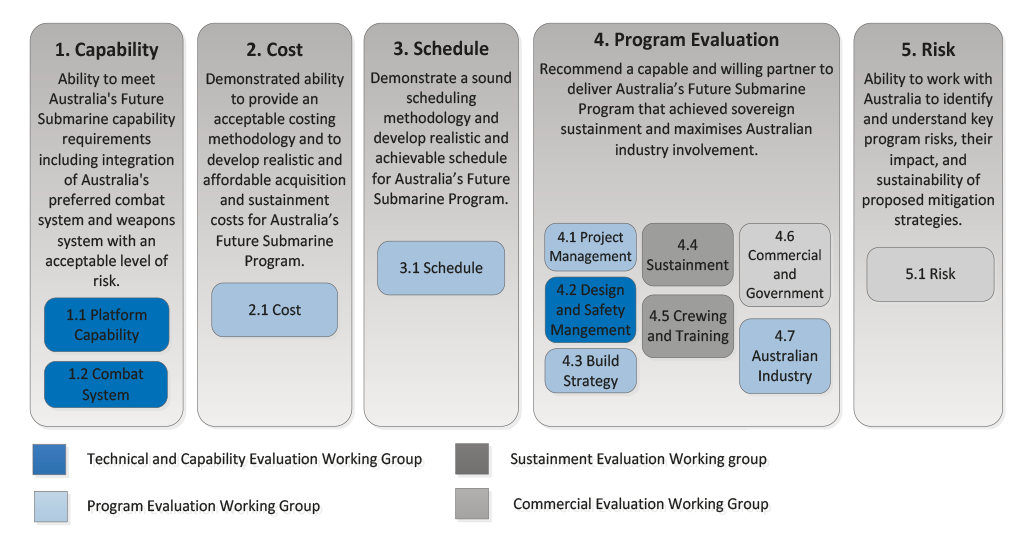

2.13 Each criterion was assessed by its respective Evaluation Working Group (see Figure 2.2). Figure 2.3 identifies the competitive evaluation criteria.

Figure 2.3: Competitive Evaluation Criteria

Source: ANAO analysis of Defence documents.

2.14 The participants were to be assessed against the criteria on the basis of providing eight submarines, rather than the twelve determined in the preliminary capability definition phase. Defence advised the ANAO that the reduction from twelve to eight reflected the Commonwealth’s fiscal position at the time the competitive evaluation process was announced.15

Australian Industry involvement

2.15 The Government required Australian industry involvement in the Future Submarine program to be maximised. The Future Submarine program incorporated an Australian industry plan that required the potential international partner to:

Demonstrate a commitment and ability to maximise Australian industry involvement through all phases of the Future Submarine Program without unduly compromising capability, cost, program schedule and risk.

2.16 As part of their responses to the competitive evaluation, the participants were required to provide an Australian Industry Plan identifying opportunities for Australian industry, and how Australian industry involvement could be maximised during the lifecycle of the program. Participants were also required to provide ‘rough order of magnitude’ cost estimates of Australian industry involvement across three build options: overseas, hybrid and Australian build. Defence costing models forecast a premium of around 15 per cent on the Australian build option for the successful participant, DCNS.

Combat system integration

2.17 The Future Submarine’s combat system will be acquired separately to the submarine platform. The participants were required to demonstrate their ability to accommodate the combat system into the design of the Future Submarine.

2.18 The Government approved the selection of the AN/BYG-1 Tactical Weapon and Control Sub-system, and the Mark 48 Heavyweight Torpedo for integration into the Future Submarine. Both of these systems are to be jointly developed by the United States of America and Australia, and are based on current versions of these systems used by Navy’s Collins Class fleet. The Government’s decision to acquire these systems for the Future Submarine was based on maintaining the Australian submarine fleet’s strategic interoperability with the United States.

2.19 The integration of the combat system into the Future Submarine is to be completed by a combat system integrator that is separate from the Future Submarine international partner. In October 2015, Defence advised its Minister that:

The Combat System Integrator will initially manage the development of those elements of the [Collins Class] combat system that will be evolved for use in the Future Submarine. The [Combat System Integrator] will then work with the selected international design partner in the Future Submarine design process.

2.20 The integration of the combat system into the Future Submarine is a key risk to be managed by Defence.16 The process will involve the sharing of interface data between the international partner, the Australian Government, and the Combat System Integrator. Defence will have the primary role of managing this relationship.

2.21 A description of the combat system was provided to each of the participants, identifying the combat system’s required power at normal and peak levels, its weight and its volume. The participants were required to demonstrate their ability to incorporate the power, weight and volume requirements of the combat system into their potential submarine designs, and to work with Defence and the Combat System Integrator to integrate the combat system into the submarine.

2.22 The Government conducted a limited tender process in parallel to the competitive evaluation process to select the combat system integrator. On 30 September 2016, the Government announced Lockheed Martin Australia as the successful tenderer.

Competitive evaluation process methodology

2.23 The Competitive Evaluation Plan stated that the purpose of the competitive evaluation process was to:

enable the Commonwealth to understand and evaluate important considerations relevant to its selection of a suitable International Partner, including capability, cost, programme schedule, commercial matters, intellectual property and risk.

2.24 The Plan also noted that:

- the early selection of an international partner would support the achievement of value for money, as required for a procurement conducted under the Commonwealth Procurement Rules; and

- while value for money would not be directly considered as part of the competitive evaluation process, accurate costs for the Future Submarine would be derived through the design process.

2.25 To assess participants against the criteria, Defence designed a competitive evaluation methodology with clearly defined procedures, roles and responsibilities. The methodology focussed on fair and equal treatment and noted that:

- Each participant will be provided with substantially similar packs containing common information on Australian requirements, constraints and assumptions, and guidance on the evaluation and Evaluation Criteria;

- Each participant will be evaluated in accordance with this [competitive evaluation] Plan, applying common Evaluation Criteria;

- Each participant will be evaluated by a common team, drawing on Subject Matter Experts as appropriate;

- Data, communications, questions and clarifications from a participant will be strictly controlled and not disclosed to other participants, unless deemed appropriate on equity grounds, or the disclosure is deemed to be in the interest of the Commonwealth; and

- The AGS (Australian Government Solicitor), as the SEA 1000 Probity Advisor, will develop, and monitor, the detailed implementation of the [competitive evaluation process] Probity Plan for the [competitive evaluation process].

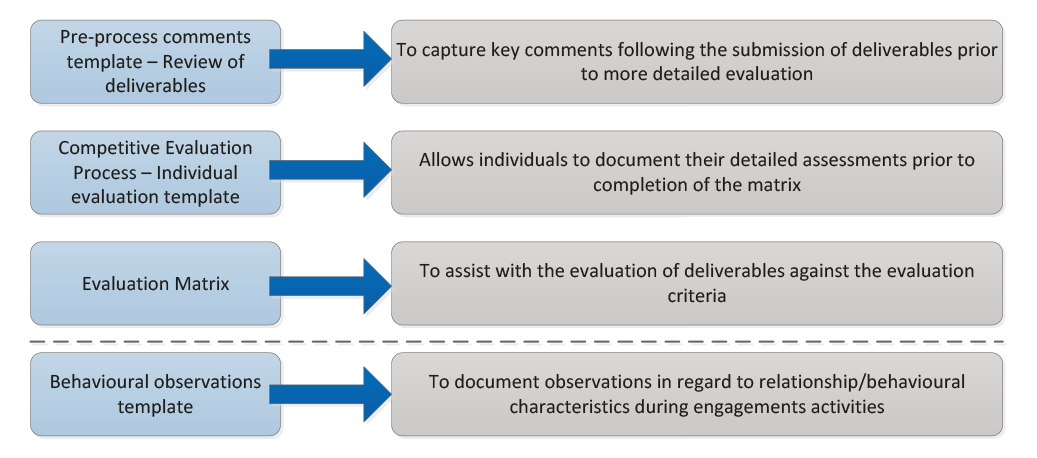

2.26 Three sets of deliverables were reviewed by Defence: outline, draft and final submissions. Reviews of the outline and draft deliverables were conducted to ensure that participants understood Defence’s requirements. Defence provided feedback from these reviews to each participant. The final submission was evaluated for the purpose of selecting the international partner. A number of tools were developed to help the evaluators assess the final submissions, as shown in Figure 2.4.

Figure 2.4: Evaluation tools

Source: ANAO adaptation from Defence document.

2.27 The evaluation matrix was the key tool used to collate, finalise and present the assessments of each of the participants’ proposals. The matrix took the form of a spreadsheet, with separate tabs for each evaluation working group, which contained the criteria assigned to each group; the detail being sought under each criterion; key questions for the group to consider when examining each criterion; and a record of risks.

2.28 The evaluation matrix assisted in the evaluation process detailed in Table 2.1. Each of the evaluation criteria was broken down into evaluation components, which were further broken down into evaluation details. Evaluation details corresponded to data item descriptions given to the participants.17 There were 171 evaluation detail items, 26 evaluation components, and 12 evaluation criteria.

Table 2.1: Evaluation process

| Evaluation Stage | Evaluation Outcome |
| --- | --- |
| Evaluation detail | Evaluation working groups review deliverables and record strengths and weaknesses of response. They then make an assessment of how the submission addresses the evaluation detail being assessed, and assign a rating. The primary risk associated with the evaluation detail is then identified. |
| Evaluation component | The assessments of the relevant evaluation details are then used to make an assessment of each evaluation component, and a rating is assigned. Risks for each consequence category are then identified, and assessments for relevant risks are completed. Chairs of the evaluation working groups then convene a moderation meeting, at which risk assessments are completed for risks that could not be addressed at a lower level. |
| Evaluation criteria | Groups then assign ratings to the evaluation criteria, based on the assessments previously done of evaluation components, and provide descriptions justifying the rating. |
| Risk Summary | Groups produce a heat-map of the risks identified during the evaluation component assessment. |

Note: Evaluation working group reports are prepared after the evaluation criteria and risk summary stages.

Source: ANAO analysis of Defence document.
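
The staged process in Table 2.1 can be pictured as a three-level hierarchy in which ratings are assigned from the bottom up. The following is a minimal sketch of that structure and is not a representation of Defence's actual evaluation matrix, which was a spreadsheet. All class names, field names and the example rating label are hypothetical. Ratings are entered by evaluators rather than calculated, and the sketch only enforces the order of the stages.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple


@dataclass
class EvaluationDetail:
    name: str
    strengths: List[str] = field(default_factory=list)
    weaknesses: List[str] = field(default_factory=list)
    rating: Optional[str] = None        # assigned by the working group
    primary_risk: Optional[str] = None  # identified after the rating


@dataclass
class EvaluationComponent:
    name: str
    details: List[EvaluationDetail] = field(default_factory=list)
    rating: Optional[str] = None

    def rate(self, rating: str) -> None:
        # A component is rated only after all of its details are rated.
        if any(d.rating is None for d in self.details):
            raise ValueError(f"unrated details under component {self.name!r}")
        self.rating = rating


@dataclass
class EvaluationCriterion:
    name: str
    components: List[EvaluationComponent] = field(default_factory=list)
    rating: Optional[str] = None
    justification: str = ""

    def rate(self, rating: str, justification: str) -> None:
        # A criterion is rated only after all of its components are rated.
        if any(c.rating is None for c in self.components):
            raise ValueError(f"unrated components under criterion {self.name!r}")
        self.rating = rating
        self.justification = justification


def count_items(criteria: List[EvaluationCriterion]) -> Tuple[int, int, int]:
    """Return the number of (criteria, components, details) in a matrix."""
    components = [c for crit in criteria for c in crit.components]
    details = [d for comp in components for d in comp.details]
    return len(criteria), len(components), len(details)


# Hypothetical example content, for illustration only.
detail = EvaluationDetail("Example detail", rating="acceptable",
                          primary_risk="example risk")
component = EvaluationComponent("Example component", details=[detail])
criterion = EvaluationCriterion("Schedule", components=[component])
component.rate("acceptable")
criterion.rate("acceptable", "Justified by the component assessment.")
```

If the full matrix described in paragraph 2.28 were loaded into this structure, `count_items` would be expected to return `(12, 26, 171)`.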

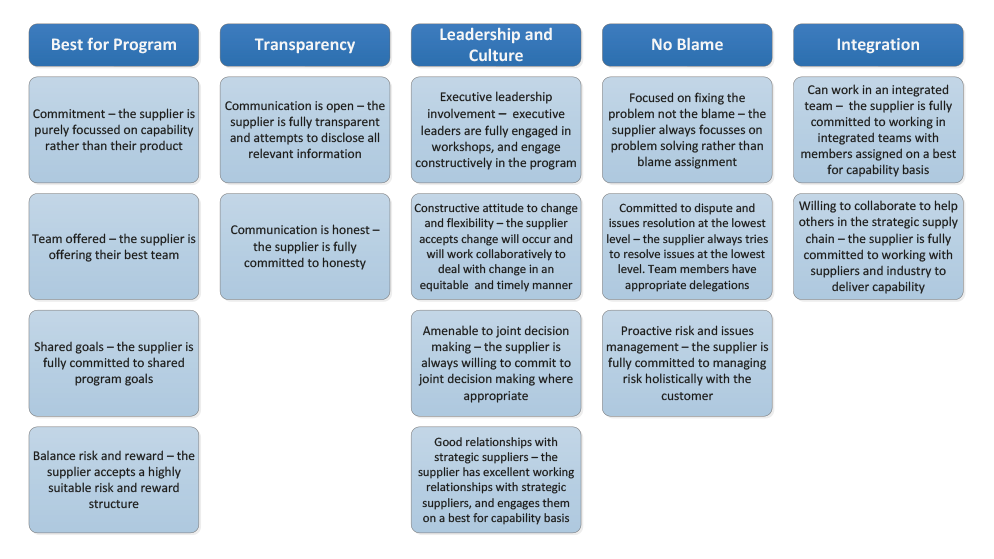

2.29 Defence compared its documented observations of the behaviour of the participants to determine how effectively it would be able to partner with each of them. For each of the face-to-face engagements between Defence and the participants (workshops and review points18), key Defence personnel rated the behaviour of the participants against specific criteria. Figure 2.5 shows the behavioural attributes Defence measured through this process.

Figure 2.5: Behavioural attributes and descriptors for superior rating

Source: ANAO adaptation from Defence document.

Information sent to the participants

2.30 The participants were sent information in several packages, comprising: a contract detailing the terms and conditions of the competitive evaluation process and its annexes (in the case of DCNS and TKMS) and clarification of the government-to-government arrangement (in the case of Japan); templates that would form the basis of the participants’ responses to the evaluation criteria; and information on Defence’s detailed requirements for the Future Submarine.

2.31 Each of the participants was sent a statement of work which sought the following:

- a pre-concept design for a Future Submarine;

- local, overseas and hybrid build options;

- rough order of magnitude cost and schedule options;

- plans for support and sustainment, including Australian industry involvement;

- program management plans for the implementation of the Future Submarine program;

- positions on key commercial issues; and

- an assessment of potential Australian industry involvement.

2.32 Participants were to provide sufficient objective quality evidence to allow any claims made in response to the statement of work to be assessed.

2.33 The participants were to ensure that their responses were informed by the following assumptions:

- the Future Submarines will be sustained in Australia;

- responses will be based on building a number of submarines to be advised separately;

- the Future Submarine program should not adversely impact the sustainment of the Collins Class submarines;

- combat systems integration will take place in Australia under a separate contract between the Commonwealth and a third party supplier. Full operational software will be loaded in Australia and operational testing of the Future Submarine will also take place in Australia;

- the expected submarine delivery rate will be advised separately;

- the service life of a Future Submarine should be between 24 and 30 years; and

- the operational availability of a Future Submarine should be between 62 and 70 per cent.

2.34 The participants were provided with templates, which formed the basis of the majority of information provided as part of the competitive evaluation process. This ensured consistency in the structure of responses received from the participants.

Evaluating cost

2.35 The Defence White Paper 2009 signalled an approximate spend of $50 billion on the construction and sustainment of the Future Submarine over its life. Participants were required to provide rough order of magnitude costs for the Future Submarine for hybrid, Australian and overseas build options. Defence validated the cost estimates provided by the participants and calculated associated price contingencies using historical price analysis19, parametric modelling20 and sensitivity analysis.21

2.36 Applying these cost modelling techniques to the cost data produced a set of cost estimations to a defined confidence interval. Each of the estimations was weighted according to its utility and reliability, and a weighted average was taken to produce the program’s final estimation of the cost of a particular build option by a particular Participant.22
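The weighting step described in paragraph 2.36 can be illustrated with a minimal sketch. The estimate values, the weights and the function name below are hypothetical and are not drawn from Defence's model; the report states only that each estimation was weighted according to its utility and reliability and that a weighted average was taken.

```python
def weighted_cost_estimate(estimates, weights):
    """Return the weighted average of a set of cost estimates.

    estimates and weights are dicts keyed by technique name.
    All names and numbers used with this function are illustrative only.
    """
    if not estimates or set(estimates) != set(weights):
        raise ValueError("estimates and weights must share the same non-empty keys")
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive value")
    weighted_sum = sum(estimates[name] * weights[name] for name in estimates)
    return weighted_sum / total_weight


# Hypothetical estimates (arbitrary units) for one build option by one participant.
estimates = {"historical": 40.0, "parametric": 50.0, "sensitivity": 60.0}

# Hypothetical weights standing in for the utility and reliability of each technique.
weights = {"historical": 1.0, "parametric": 2.0, "sensitivity": 1.0}

final_estimate = weighted_cost_estimate(estimates, weights)
print(final_estimate)
```

Dividing by the sum of the weights means the weights need not be normalised beforehand; only their relative sizes matter.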

2.37 The cost methodology was externally reviewed and endorsed by RAND Corporation.

Expert advisory panel

2.38 An expert advisory panel was established to provide independent expert oversight of the conduct of the competitive evaluation process. Specifically, the expert advisory panel was to:

formally report to Government on the soundness of the process, whether the conduct of the process is defensible from a probity and accountability perspective and whether the participants have been treated fairly and equitably in accordance with applicable Commonwealth legislative and policy requirements (as advised by Defence).

2.39 The tasks assigned to the panel were to:

- review and assess relevant and appropriate process documentation as identified or provided by Defence;

- review and assess, and provide an initial report on, the adequacy and appropriateness of the information exchange arrangements between Defence and the participants;

- review and assess, and provide an initial report on, the adequacy and appropriateness of the proposal evaluation arrangements;

- provide guidance to Defence on any issues that may arise that could impact on the integrity, fairness or defensibility of the process;

- attend briefings or review progress reports on the conduct of the process;

- provide initial, interim and final reports on the conduct of the process; and

- if required, review the debriefing procedures at the end of the process, and assist Defence to respond to internal or external review or enquiries on any aspect related to the integrity or fairness of the process.

2.40 The panel was to be provided with all relevant documentation, and access to request clarification as required. The panel consisted of four members: Professor Donald Winter (Chair); the Hon. Justice Julie Anne Dodds-Streeton; Mr Ron Finlay; and Mr Jim McDowell.23

3. Implementation of the competitive evaluation process

Areas examined

This Chapter examines Defence’s implementation of the competitive evaluation process to select its international partner for the Future Submarine program.

Conclusion

Defence effectively implemented the competitive evaluation process to select an international partner for the Future Submarine program.

Did Defence effectively implement the competitive evaluation process?

Defence effectively implemented the competitive evaluation process:

- Defence implemented the required probity procedures relating to the identification and management of conflicts of interest and confidentiality. All staff and contractors were required to attend probity briefings and complete declarations of conflicts of interest.

- Defence implemented procedures to maintain a consistent approach when interacting with the participants at the workshops, review sessions, and when answering questions.

- Payments made to the participants were approved as required by the Public Governance, Performance and Accountability Act 2013 (Cth).

- Defence implemented the documented evaluation procedures to measure the performance of the participants against the criteria. This allowed the individual evaluation working groups to produce well-reasoned conclusions. These conclusions were incorporated into the Final Evaluation Board Report which provided a clear justification for the selection of DCNS as the international partner.

The response period: 20 February 2015—30 November 2015

3.1 Defence wrote to the participants in April 2015 advising them of the Australian Government’s 20 February 2015 announcement that a competitive evaluation process would be conducted to select an international partner for the SEA 1000 Future Submarine program:

The CEP [competitive evaluation process] will enable the Australian Government to understand and evaluate important considerations relevant to its selection of a suitable International Partner, including capability, cost, program schedule, commercial matters, technology access and intellectual property arrangements and the overall level of risk associated with the response provided by each participant invited by Defence to participate in the CEP. The CEP will also assess the build options available to Australia and the ability of each Participant to partner with Australia to deliver and sustain a Future Submarine that meets Australian capability, sovereign and other program requirements, irrespective of which build option is ultimately selected by the Australian Government.

… The SOW [Statement of Work] identifies three (3) build options for the Future Submarine:

- Overseas Build in home country;

- Australian Build; and

- Hybrid Build (Combination of Overseas and Australian Build).

Defence intends to use the CEP to obtain information on each of the above build options. Accordingly, Participants are asked to consider and provide with their response information on all three (3) options. Under all options, the Australian Government is seeking to maximise the involvement of Australian industry.

3.2 In May 2015, Defence signed contracts with Direction de Constructions Navales Services (DCNS) and ThyssenKrupp Marine Systems GmbH (TKMS) to participate in the competitive evaluation. The contracts outlined deliverables, probity and security requirements. The Government of Japan was engaged in June 2015 through a government-to-government arrangement:

the decision to potentially share Japanese submarine technology with Australia has been a Government decision in Japan rather than a commercial initiative. As such, the Japanese Ministry of Defence is leading Japan’s proposal with the support of Japanese industry (Mitsubishi Heavy Industries and Kawasaki Heavy Industries).

3.3 The competitive evaluation methodology was applied consistently to the three participants. The participants were required under their respective instruments to provide their final responses by 30 November 2015.

Implementing the probity framework

3.4 Defence implemented the competitive evaluation probity framework. Specifically, Defence implemented procedures to:

- communicate probity protocols through briefings to all stakeholders and personnel;

- manage conflicts of interest;

- assure confidentiality of information;

- manage all interaction with participants; and

- conduct an evaluation in a manner that was fair and transparent.

Probity briefings

3.5 Probity briefings were held in Canberra, Melbourne and Adelaide during March, April, August and September 2015. The ANAO’s review of the probity registers found that all Defence personnel involved in the evaluation process were required to attend a probity briefing prior to any permissions being granted for access to Future Submarine evaluation data. Participation in these briefings was recorded in attendance registers.

3.6 Probity briefings were also conducted by an Australian Government Solicitor official prior to each of the workshops24 or review meetings being held with participating countries. Probity advisers were present at each of the workshops and review meetings held with the participants to provide assurance that this contact was in accordance with the probity framework.

Conflicts of interest

3.7 Defence personnel and contractors involved in the competitive evaluation process were required to declare any actual, potential or perceived conflict of interest. Individual declarations were signed by 482 people involved in the competitive evaluation. The probity adviser reviewed each conflict of interest declaration, assessed any declared conflicts and provided advice on how these should be managed. Records were made detailing the small number of instances where the probity adviser took actions to manage or resolve issues.25

3.8 Personnel were regularly reminded to update their conflict of interest declarations, and declarations were regularly reviewed by the probity adviser to provide assurance that any issues were immediately identified and managed on an ongoing basis. Where personnel received gifts or hospitality, these were recorded in the gift and hospitality register. The ANAO’s review of these registers indicated that no substantial issues were documented.

Confidentiality arrangements

3.9 Defence was required to manage information confidentiality on two levels: confidentiality of information held by participants; and the confidentiality of information held by Defence personnel and contractors.

3.10 The primary mechanisms used to manage the security of information provided to DCNS and TKMS were the Deed of Confidentiality and confidentiality clauses contained in the contracts. For Japan, confidentiality clauses were included in the government-to-government agreement.

3.11 All international personnel involved in the project had their security clearances assessed and recorded by Defence before the evaluation process commenced. Participants’ premises also underwent a security review.

Workshops, reviews and interaction with the participants

3.12 Defence conducted a range of workshops and reviews with the participants. An information pack was provided to each of the participants, followed by a start-up meeting. The start-up meeting discussed the Future Submarine program; the competitive evaluation process; evaluation criteria; management of questions and answers; probity; security; and process deliverables. Activity schedules were also discussed and agreed to with each of the participants.

3.13 Five workshops were held with each of the participants to communicate Defence’s requirements. Workshops covered the following subject matters:

- Workshop 1: Safety and design workshop;

- Workshop 2: Sustainment workshop and build strategy;

- Workshop 3: Program and technical workshop;

- Workshop 4: Commercial; and

- Workshop 5: Commercial cardinal points.26

3.14 Two formal reviews were also conducted by Defence’s Future Submarine program staff; these are outlined in Table 3.1. The aim of the reviews was to assist participants to develop a response to the competitive evaluation in line with Defence’s requirements. Interactions with the participants at the workshops and reviews were observed by officers of the Australian Government Solicitor in their role as probity advisers.

Table 3.1: Workshop and review timing

| Workshops | DCNS | TKMS | Japanese Government |
| --- | --- | --- | --- |
| Workshop 1 | 8 June 2015 | 1 June 2015 | 21 July 2015 |
| Workshop 2 | 11 August 2015 | 4 August 2015 | 25 August 2015 |
| Workshop 3 | 8 September 2015 | 1 September 2015 | 18 September 2015 |
| Workshop 4 | 2 October 2015 | 16 October 2015 | 19 October 2015 |
| Workshop 5 | 3 February 2016 | 4 February 2016 | 27 January 2016 |
| Reviews | DCNS | TKMS | Japanese Government |
| Review 1 | 7 July 2015 | 30 June 2015 | 22 July 2015 |
| Review 2 | 28 September 2015 | 12 October 2015 | 14 September 2015 |

Source: ANAO summary from Defence documents.

Questions and Answers

3.15 Defence implemented a consistent process to manage questions and requests for clarification from participants. Three officers, one for each participant, were responsible for receiving, researching and answering that participant’s questions, and for recording these using a template. Information released was classified in accordance with the Security Classification and Categorisation Guide, which also formed part of the tender document suite. Responses to questions were recorded in participants’ respective registers and folders, which were periodically reviewed for the purpose of maintaining consistency and fairness.

Payments to participants

3.16 Under their respective contracts and the inter-governmental agreement, the participants were funded by the Australian Government to take part in the competitive evaluation process, receiving a total of AUD $8 million each.27 The funding was paid to DCNS and TKMS on the achievement of four milestones:

- start-up meeting: AUD $2 million;

- delivery of outline deliverables: AUD $2 million;

- delivery of draft deliverables: AUD $2 million; and

- final meeting: Clarification of Final deliverable: AUD $2 million.

3.17 Defence negotiated a payment plan for the Japanese Ministry of Defence in Japanese Yen, through the government-to-government agreement. The plan consisted of five unequal payments which could not exceed JPY ¥768 858 400 (approximately AUD $8 million). Five payments were made to Japan consisting of:

- JPY ¥348 240 600;

- JPY ¥7 020 000;

- JPY ¥19 710 000;

- JPY ¥9 458 600; and

- JPY ¥384 429 200.
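The five payments listed above can be checked against the agreed ceiling with simple arithmetic. The following is a minimal sketch using only the figures given in paragraph 3.17; the variable names are illustrative.

```python
# Payments made to Japan, in Japanese Yen, as listed in paragraph 3.17.
japan_payments_jpy = [
    348_240_600,
    7_020_000,
    19_710_000,
    9_458_600,
    384_429_200,
]

# Ceiling stated in paragraph 3.17 (approximately AUD $8 million).
cap_jpy = 768_858_400

total_jpy = sum(japan_payments_jpy)
within_cap = total_jpy <= cap_jpy

print(f"Total paid: JPY {total_jpy:,}")
print(f"Within ceiling: {within_cap}")
```

On these figures the five payments sum to JPY 768 858 400, which equals the ceiling.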

3.18 The Commonwealth Procurement Rules (July 2014) require Commonwealth purchases which exceed the value threshold of $10 000 to be reported using the AusTender website. However, exemptions can be sought from an appropriate delegate for procurements for the ‘design and development of military systems and equipment’.28 On this basis, Defence did not report the competitive evaluation process on AusTender. The Head of the Future Submarine program authorised this exemption on 21 April 2015.

Approval to Commit Funds

3.19 Defence gained the necessary approvals to provide payment to the participants. The planned commitment, totalling AUD $24 million, was spread over 2014–15 (AUD $8 million) and 2015–16 (AUD $16 million) financial years. Defence gained approval from Defence’s ‘Special Advisor—Finance’ to confirm:

- the sufficiency of the budget;

- the soundness of the costing calculations; and

- the accuracy of the costing calculations.
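The planned commitment in paragraph 3.19 can be reconciled with the per-participant funding described in paragraphs 3.16 and 3.17. The sketch below uses only figures stated in the report; it treats the Japanese payment plan as AUD $8 million, which the report gives as an approximate ceiling, and the variable names are illustrative.

```python
# Milestone payments to DCNS and TKMS, in AUD millions (paragraph 3.16).
milestones_aud_m = {
    "start-up meeting": 2,
    "delivery of outline deliverables": 2,
    "delivery of draft deliverables": 2,
    "final meeting": 2,
}
per_participant_aud_m = sum(milestones_aud_m.values())

# Japan was paid in Yen under a separate plan capped at approximately
# AUD $8 million; it is treated as 8 here for the purpose of the check.
participants = ["DCNS", "TKMS", "Government of Japan"]
planned_commitment_aud_m = per_participant_aud_m * len(participants)

# Spread of the planned commitment across financial years (paragraph 3.19).
commitment_by_year_aud_m = {"2014-15": 8, "2015-16": 16}

print(per_participant_aud_m)
print(planned_commitment_aud_m)
print(sum(commitment_by_year_aud_m.values()) == planned_commitment_aud_m)
```

The four milestones sum to AUD $8 million per participant, and three participants give the AUD $24 million planned commitment, which matches the split across the two financial years.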

3.20 On 3 May 2015, the Director General of the Submarine program authorised the payments under Section 23 of the Public Governance, Performance and Accountability Act 2013 (Cth).

3.21 The total cost of the Future Submarine competitive evaluation process was $30.1 million.29

The evaluation period: 1 December 2015—16 April 2016

3.22 The three participants submitted their responses on 30 November 2015.30 The evaluation working groups then commenced their assessments—each group was responsible for their respective criteria shown in Figure 2.3. On 22 January 2016, Defence issued a request for further information to the participants, regarding their commitment to key commercial principles and other requirements. This information was received from the participants on 4 March 2016.

3.23 Each of the evaluation working groups produced a report, detailing their findings against their respective criteria, and the reasoning behind their responses. The groups also considered the risks that the participants faced in achieving what they had provided in their responses. The reports are comprehensive, and show an adherence to the competitive evaluation methodology outlined in chapter 2 of this audit report.

3.24 The Evaluation Board met on 10 March and 17 March 2016, and produced the draft Evaluation Board Report. The draft Evaluation Board Report was reviewed by the Evaluation Steering Committee at meetings in March and April 2016, during which recommendations from the expert advisory panel were also considered.31 The Evaluation Steering Group agreed to modify the Evaluation Board Report to reflect the input of the expert advisory panel. The Evaluation Steering Group noted that an expert advisory panel meeting of February 2016 had not identified any material probity issues.

3.25 On 5 April 2016, the Evaluation Steering Group agreed that the Evaluation Board Report accurately represented the overall assessment of the participants, with DCNS emerging as the preferred participant, subject to the resolution of certain commercial caveats.32

3.26 The Final Evaluation Board Report was completed and endorsed on 7 April 2016 by the Head Future Submarine Program, the Director General Future Submarine Program, and each of the evaluation working group chairs. The Report detailed, but did not make a recommendation on, the different build options and the factors affecting them. The Report produced a clear and well-reasoned finding that DCNS was the preferred international partner, subject to the resolution of certain commercial issues.

3.27 On 13 April 2016, the expert advisory panel reported to the Government:

… in the unanimous opinion of the Panel Members:

(a) the Competitive Evaluation Process itself was a sound and appropriate process for the selection of an international partner for the SEA 1000 Future Submarine Program.

(b) The Competitive Evaluation Process was conducted in a very sound and defensible manner, from both a probity and accountability perspective.

(c) Each of the participants has been treated fairly and equitably. This assessment is supported by a review of key metrics relating to numbers of meetings held and person hours involved in interactive sessions with participants, as well as person hours involved in the evaluation of Final Proposals as part of the Competitive Evaluation Process. This assessment was also confirmed by the participants.

(d) Each of the participants has been treated fairly and equitably in accordance with any applicable Commonwealth legislative and policy requirements (as advised by Defence).

(e) The assessment contained in the Evaluation Report is defensible based on the material received and reviewed by the Panel and traceable from the detailed assessments and materials provided by the Evaluation Working Groups.

The risk of selecting one international partner

3.28 The decision to select one design partner, as opposed to two, was made on the basis that Defence did not have the technical resources to retain two partners. Defence advised the Minister for Defence, in December 2015, that:

[t]he concept and preliminary design is resource-intensive work and will take about three to four years to complete…This is not work that can be outsourced. It requires submarine design knowledge coupled with a firm understanding of Australia’s operational requirements for the Future Submarine. These skills are in short supply internationally…and Australia should not be confident of assembling more than one Government team to work through the concept and preliminary design in a robust manner. Endeavouring to work with two international partners would dilute our capability and undermine the effort required to arrive at a sound understanding of the capability, cost range and risks of the proposed design for the Future Submarine.

3.29 This approach is in contrast to the one taken by Defence for the acquisition of its Future Frigate program—SEA 5000. The Future Frigate program also conducted a competitive evaluation process, which selected three design partners to further compete through the design phase of the acquisition. The successful partner will be chosen at the completion of the design phase.

3.30 The approach adopted for the Future Frigate program was justified by Defence as a means of achieving efficiencies and economies in the program, as each design partner would be required to minimise costs, meet Defence’s capability requirements, and produce an efficient build and sustainment program in order to be chosen as the successful build partner. Defence advised the ANAO that:

… the fundamental difference between the Future Submarine Program and the Future Frigate Program [is that] the Future Submarine Program will be a new design (there is not a military off-the-shelf option that will meet Australia’s submarine capability requirements). The design of the Future Submarine will continue until 2026 (with build starting in 2022–23 once 85 per cent of the design is completed). The Future Frigate Program is based on the selection of a largely designed frigate, with construction commencing in 2020.

3.31 The approach taken by Defence for the Future Submarine program removes competition in the design phase, and removes incentives for the international partner (DCNS) to produce a more economical and efficient build. This places the onus on Defence to ensure that its approach to the Future Submarine’s design and build phases, where final costs and schedules will be determined, returns value-for-money to the Commonwealth in the absence of a competitive process.33

Review and evaluation

3.32 In early 2016, the Future Submarine program’s Senior Management sought third party assurance on the integrity of the competitive evaluation process. The process was reviewed by two former senior United States submarine program managers, who also served as Chief Engineers in the United States Navy. The review concluded that:

The work of the competitive evaluation process is competent, diligent, expert and consistent. It is sufficiently disciplined to withstand scrutiny and is well documented. The competitive evaluation process to identify the right international partner will be successful in finding the right answer.

3.33 The review’s key findings included:

- The competitive evaluation process Evaluation Working Groups are competent, disciplined, diligent, and consistent and demonstrate a high degree of expertise.

- The process followed by the Evaluation Working Groups includes taking each claim and examining the evidence to make an objective evaluation. This is very effective.

- The competitive evaluation process to identify the right international partner will be successful in finding the right answer. It is sufficiently disciplined to withstand scrutiny and is well documented …

- … review of the proposals corroborates the Evaluation Working Groups’ observations and findings.

- Evaluation Working Groups’ evaluations on a comparative basis are based on objective evidence and consistent with our conclusions…

- The Evaluation Working Groups have done an excellent job in diligent pursuit of the facts. They were able to elicit the facts and disaggregate the biases introduced into the competitive evaluation process in the proposal.

- [Navy’s] approach to select a partner now is a bold and much preferred stroke, because once the partner is chosen from a competitive process, we can build the relationship and drive cost realism from a team approach versus “rigging the game” to win the bid, only to follow with many changes and adders as the true cost versus bid price emerges.34

Did Defence appropriately advise Government of the outcome of the competitive evaluation process?

Defence appropriately advised the Government of the outcome of the competitive evaluation process:

- The Minister for Defence and Defence provided advice to the Australian Government in April 2016 recommending DCNS as the preferred international partner.

- The advice was detailed, and provided a comprehensive analysis of the performance of each of the participants in the competitive evaluation and their respective rankings.

- The advice clearly identified the risks and caveats in proceeding with DCNS as the preferred international partner. Defence indicated that it was confident it could mitigate and resolve these issues through further negotiation with DCNS.

- The Government announced DCNS as the preferred international partner on 26 April 2016.

Advice to Government

3.34 On 19 April 2016, the Minister for Defence provided written advice to the Australian Government that DCNS was Defence’s recommended international partner.35 The advice to Government was based on the outcomes of the competitive evaluation process. It contained a detailed analysis of the evaluation, illustrated the performance of each of the participants against the criteria, and provided a measurable justification for DCNS’s selection. The Australian Government agreed that DCNS would be Australia’s preferred international partner subject to further discussions on intellectual property and cost issues.

3.35 Defence had identified that appropriate intellectual property rights would be required to support Australia’s ability to sustain and operate the Future Submarine. This approach was considered necessary to avoid the problems Defence encountered during the acquisition and early sustainment of the Collins Class submarines.36 Defence expected that the commercial issues could be addressed through further negotiation with DCNS.

3.36 The Australian Government announced DCNS as the successful international partner on 26 April 2016:

… DCNS of France has been selected as our preferred international partner for the design of the 12 Future Submarines, subject to further discussions on commercial matters.

… Subject to discussions on commercial matters, the design of the Future Submarine with DCNS will begin this year.37

3.37 Defence and DCNS entered into a Future Submarine Program Design and Mobilisation Contract in October 2016. The contract objectives included ‘to conduct early mobilisation activities and commence preliminary design studies for the delivery of the Future Submarine Program’.

3.38 A Framework Agreement Concerning Cooperation on the Future Submarine Program was signed by the Australian and French Governments on 20 December 2016. The purpose of the Agreement was:

… to define the principles, the framework, and the initial means of support and cooperation settled between the Parties for Australia’s Future Submarine Program, considering Australia’s enduring commitment to establish a long-term partnership with DCNS for the design and construction of the Future Submarine to be built in Australia, and the importance of maximising Australian industry involvement in these activities.38

Participant debriefs

3.39 Following the advice to participants of their result in the competitive evaluation process, Defence undertook debriefing sessions with Japan and TKMS. The Australian Government Solicitor advised on the most appropriate way to provide feedback as well as a suggested outline of the debrief meeting.

3.40 Debriefs were undertaken verbally using a scripted approach. The use of a written script was intended to prevent any comments being made to the participant which may be inaccurate or inadvertently mislead the participant as to how their response was evaluated. A script further helped to ensure that all of the common points were made to participants. The two unsuccessful participants were debriefed on the strengths and weaknesses of their responses against each of the criteria, and were provided with an overall assessment of their proposal.

Appendix

Department of Defence’s Response

Footnotes

1 The Hon. Kevin Andrews MP, Minister for Defence—Statement on Australia’s future submarine, 9 February 2015; and Press Release—Strategic direction of the Future Submarine Program, 20 February 2015.

2 Prime Minister and Minister for Defence, Joint Media Release, Future Submarine Program, 26 April 2016.

3 The Hon. Kevin Andrews MP, Minister for Defence—Statement on Australia’s future submarine, 9 February 2015; and Press Release—Strategic direction of the Future Submarine Program, 20 February 2015.

4 ibid.

5 Prime Minister, Minister for Defence—Joint media release–Future submarine program, 26 April 2016.

6 Navy’s current submarine platform, the Collins Class, is also a diesel-electric platform. The ANAO’s review indicated that there are no current plans for an alternative platform. This was confirmed by Defence advice.

7 A diesel-electric submarine is at its most vulnerable when it is close to the surface and operating its diesel engines. The engines create noise, and other signatures are altered, increasing the range at which the submarine can be detected.

8 Defence had also considered ship builders in the United States of America and the United Kingdom. In both cases, Defence found these ship builders were engaged in their own submarine build programs and had no capacity to participate in the Future Submarine design and build.

9 Defence White Paper 2009, p. 70.

10 Capability definition documentation is usually finalised during the design phase of a program, for non-military off-the-shelf acquisitions. The capability definition suite for the Future Submarine was therefore still in its preliminary form when the competitive evaluation was undertaken. However, due to the work undertaken by Defence since the release of the 2009 Defence White Paper, these preliminary documents were detailed and contained significant amounts of information relating to Defence’s requirements. A further capability definition document, the Test Concept Document, will be developed during the design phase. The Test Concept Document provides an outline of the test strategy to be used to verify and validate that the design and operational requirements of the capability requirements have been complied with.

11 See paragraph 2.5 above.

12 The framework was formally endorsed and approved by the Australian Government Solicitor; the Director General Future Submarine Program; and the Head of the Future Submarine Program. Forms for the declaration of Conflict of interest; Engagement protocols for external service providers; Legal Process key action plan; and the Deed of Confidentiality together make up the Legal Process and Probity framework.

13 See paragraphs 2.2–2.8 above.

14 See paragraph 2.16 below.

15 Current government policy, in line with the 2016 Defence White Paper, is to acquire a fleet of 12 submarines.

16 The integration of combat systems into Defence platforms has been identified as a key risk in previous Defence acquisition and upgrade programs. See ANAO Audit Report No. 22 2013–14 Air Warfare Destroyer Program, pp. 235–236; and ANAO Audit Report No. 11 2016–17 Tiger—Army’s Armed Reconnaissance Helicopter, p. 30.

17 DCNS and TKMS were provided with data item descriptions. The Government of Japan was provided with the same information in annexes to the government-to-government agreement.

18 See paragraphs 3.12–3.15.

19 Historical analysis involved comparing the costs provided by the participants with relevant, publicly available submarine prices.

20 Parametric analysis used the True Planning software tool, ‘a predictive modelling system that translates available parameter data into costs of delivering products and services.’ The analysis used various inputs, including design information and subject matter expert evaluations, and variables, such as design margins and build locations, as interrelated factors in a predictive model. The True Planning model was calibrated against available historical submarine data and Collins Class data, and was shown to produce valid cost estimates.

21 Sensitivity analysis involved examining the effect of changes in key cost drivers, including material premiums, Australian productivity, labour rates, labour hours, learning curve rates, and design and construction hours. Two scenarios were considered: one in which small changes were made to these values, and one in which larger changes were made. This approach established the upper and lower boundaries for comparison against the participant-provided data.
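
The approach described in footnote 21 can be illustrated with a minimal sketch. It is not Defence's model: the cost formula, the driver names, the baseline values and the 5 and 20 per cent scenarios below are all hypothetical assumptions chosen for illustration, and none are drawn from the audit or from participant data. The sketch varies every cost driver by a small and then a larger percentage to produce lower and upper boundaries, and checks whether a supplied figure falls inside them.

```python
# Illustrative only: every value and name below is a hypothetical assumption.
BASELINE = {
    "labour_hours": 1_000_000.0,     # hypothetical build hours
    "labour_rate": 100.0,            # hypothetical cost per hour
    "material_cost": 50_000_000.0,   # hypothetical material cost
    "material_premium": 0.10,        # hypothetical premium on materials
}


def estimate_cost(drivers):
    """Toy cost model: labour cost plus material cost with a premium."""
    labour = drivers["labour_hours"] * drivers["labour_rate"]
    material = drivers["material_cost"] * (1.0 + drivers["material_premium"])
    return labour + material


def cost_bounds(baseline, pct):
    """Scale every driver down and up by pct to get (lower, upper) costs.

    The toy model increases with every driver, so scaling all drivers
    down gives the lower boundary and scaling all up gives the upper.
    """
    low = {name: value * (1.0 - pct) for name, value in baseline.items()}
    high = {name: value * (1.0 + pct) for name, value in baseline.items()}
    return estimate_cost(low), estimate_cost(high)


def within_bounds(figure, bounds):
    """Return True if a supplied cost figure lies inside the boundaries."""
    lower, upper = bounds
    return lower <= figure <= upper


# Two scenarios, as in the footnote: small changes and larger changes.
small_scenario = cost_bounds(BASELINE, 0.05)
large_scenario = cost_bounds(BASELINE, 0.20)
```

A figure can then be compared against either scenario, for example `within_bounds(160_000_000.0, large_scenario)`. A real analysis would vary drivers individually as well as together, and would use a calibrated cost model rather than this two-term formula.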

22 The process generated rough-order-of-magnitude costs, which do not reflect the final cost of the Future Submarine. The cost data was expected to provide Defence with an indication of the potential cost of the Future Submarine, and an opportunity to assess the competency of the participants’ costing methodology. Final costs will be determined during the design phase of the Future Submarine Program and are subject to approval by Government.

23 Professor Donald Winter is a former Secretary of the United States Navy; the Hon. Justice Julie Ann Dodds-Streeton is a former Justice of the Federal Court of Australia; Mr Ron Finlay is a Principal and Chief Executive of Finlay Consulting with expertise in construction, development and infrastructure projects; and Mr Jim McDowell is the Deputy Chair of the Australian Nuclear Science and Technology Organisation.

24 Five workshops were conducted with each participant. See paragraph 3.13.

25 The ANAO’s review of the register indicated that the vast majority of issues were minor; for example, Defence staff being invited to share taxis with staff of the process participants.

26 The fifth workshop specifically covered commitment on key commercial principles.

27 GST exclusive. GST was not considered applicable to the funding as the work was completed outside Australia.

28 In accordance with the Defence Exemption of Services (DPPM 1.2–5, paragraph 26) pursuant to paragraph 2.6 of the Commonwealth Procurement Rules relating to the protection of essential security interests.

29 Excluding salary costs. Defence advised that it did not measure the costs of salaries as part of the project cost.

30 While the participants were asked to submit their responses based on the acquisition of eight Future Submarines, the 2016 Defence White Paper committed to the acquisition of 12 Future Submarines. The Future Submarine program does not consider this to have materially affected the results of the assessment of the responses against the evaluation criteria.

31 See paragraphs 2.38–2.40 of this audit report on the expert advisory panel.

32 The findings of the Behavioural Observations Report were used to determine if there were any major discrepancies between the observed behaviours and the content of each participant’s submission in relation to partnering with the Commonwealth.

33 This audit is the first in a series of audits the ANAO will conduct on the Future Submarine Program. The ANAO will examine Defence’s performance in managing a single design partner and achieving value-for-money in the absence of competition.

34 The ANAO notes that this is in contrast to Navy’s approach to the SEA 5000 Future Frigate program, which relies on a competitive process to reduce costs. See paragraphs 3.28–3.31 above.

35 The written advice was supplemented by a Defence briefing.

36 Restrictive intellectual property rights for the Collins Class submarines impacted Defence’s ability to address design issues and to update and upgrade systems after the program separated from the original designer, Kockums. Additionally, an independent ability to sustain and operate the Future Submarines would be important in the event of an unforeseen shift in Australia’s relationship with France.

37 Prime Minister and Minister for Defence, Joint Media Release, Future Submarine Program, 26 April 2016.

38 Framework Agreement Concerning Cooperation on the Future Submarine Program, 20 December 2016, p. 2.