Implementation and Performance of the Cashless Debit Card Trial — Follow-on

Audit snapshot

Why did we do this audit?

- Auditor-General Report No.1 2018–19 found that while the Department of Social Services (DSS) largely established appropriate arrangements to implement the Cashless Debit Card (CDC) Trial, its approach to monitoring and evaluation was inadequate.

- The 2018–19 audit made six recommendations related to risk management, procurement, contract management, performance monitoring, post-implementation review, cost–benefit analysis and evaluation.

- This audit examined the effectiveness of DSS’ administration of the CDC program, including implementation of the previous audit’s recommendations.

Key facts

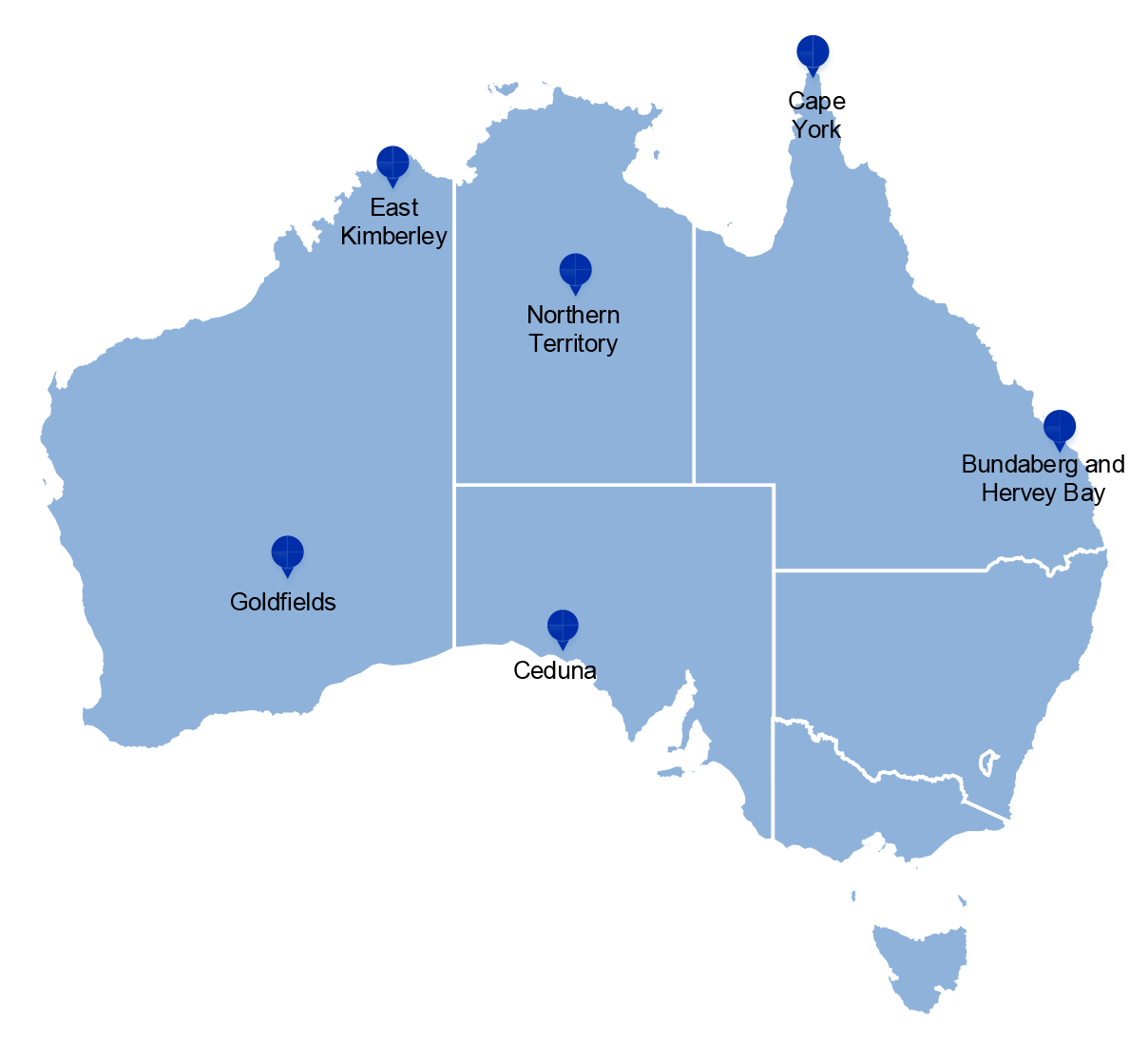

- The CDC Trial commenced in 2016. It is operating in Ceduna, East Kimberley, Goldfields, Bundaberg, Hervey Bay, Cape York and the Northern Territory.

- The objective of the CDC Trial is to encourage socially responsible behaviour by restricting cash available to participants to spend on alcohol, drugs or gambling.

What did we find?

- DSS’ administrative oversight of the CDC program is largely effective; however, DSS has not demonstrated that the CDC program is meeting its intended objectives.

- DSS has implemented the recommendations from Auditor-General Report No.1 2018–19 relating to risk management, procurement and contract management.

- DSS has partly implemented the recommendations relating to performance monitoring.

- DSS has not effectively implemented the recommendations relating to cost–benefit analysis, post-implementation reviews and evaluation.

What did we recommend?

- There were two recommendations to DSS. The recommendations relate to the development of internal performance monitoring, and the conduct of an external review of the evaluation.

- DSS agreed to the first recommendation and disagreed with the second recommendation.

16,685: active CDC participants as at February 2022

$36.5m: costs of the CDC program in 2020–21

591: number of CDC participants who have exited the program as at February 2022

Summary and recommendations

Background

1. The Australian Government introduced the Cashless Debit Card (CDC) Trial, later known as the CDC program, in 2016.

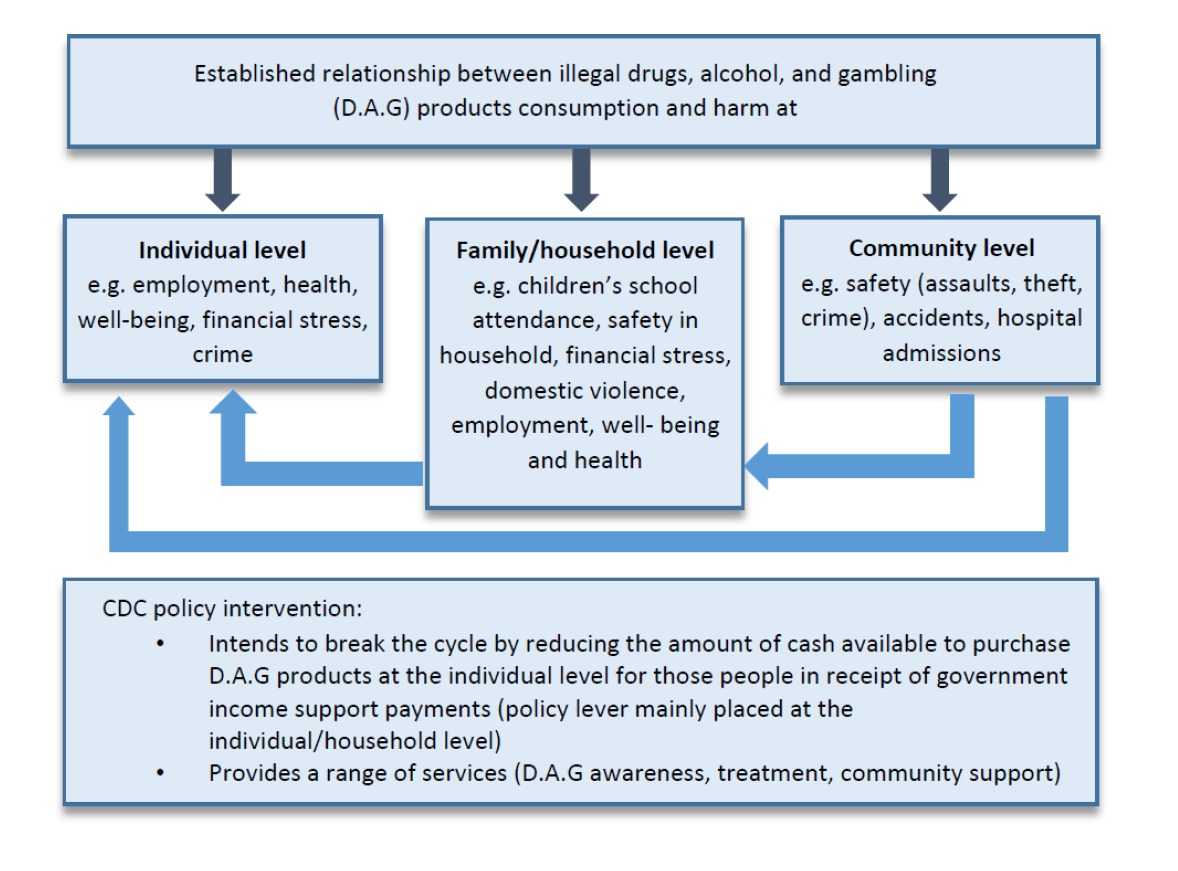

2. Under the CDC program, a portion of a participant’s income support payment is allocated to a restricted bank account, accessed by a debit card (the CDC). The CDC does not allow cash withdrawals or the purchase of alcohol, gambling or cash-like products. The objective of the CDC program is to assist people receiving income support to better manage their finances and encourage socially responsible behaviour.

3. The CDC is enabled under the Social Security (Administration) Act 1999. The Department of Social Services (DSS) and Services Australia are responsible for the CDC program. There are two card providers: Indue Ltd (Indue) and the Traditional Credit Union (TCU).

4. The CDC program has been implemented in Ceduna in South Australia; the East Kimberley region in Western Australia; the Goldfields region in Western Australia; the Bundaberg, Hervey Bay, and Cape York regions in Queensland; and the Northern Territory.

Rationale for undertaking the audit

5. Auditor-General Report No.1 2018–19 The Implementation and Performance of the Cashless Debit Card Trial found that while DSS largely established appropriate arrangements to implement the CDC Trial, its approach to monitoring and evaluation was inadequate. It was therefore difficult to conclude if the CDC Trial was effective in achieving its objective of reducing social harm and whether the card was a lower cost welfare quarantining approach compared to other components of Income Management such as the BasicsCard.

6. The report made six recommendations relating to risk management, procurement, contract management, performance monitoring, cost–benefit analysis, post-implementation reviews and evaluation.

7. This follow-on audit provides the Parliament with assurance as to whether:

- DSS has addressed the agreed 2018–19 Auditor-General recommendations;

- DSS’ management of the extended CDC program is effective; and

- the extended CDC program was suitably informed by a second impact evaluation of the CDC Trial.

Audit objective and criteria

8. The objective of the audit was to examine the effectiveness of DSS’ administration of the Cashless Debit Card program, including implementation of the recommendations made in Auditor-General Report No.1 2018–19, The Implementation and Performance of the Cashless Debit Card Trial.

9. To form a conclusion against the audit objective, the following criteria were applied:

- Do DSS and Services Australia have effective risk management, procurement and contract management processes in place for the CDC program?

- Has DSS implemented effective performance measurement and monitoring processes for the CDC program?

- Was the expansion of the CDC program informed by findings and lessons learned from an effective evaluation, cost–benefit analysis and post-implementation review of the CDC Trial?

Conclusion

10. DSS’ administrative oversight of the CDC program is largely effective; however, DSS has not demonstrated that the CDC program is meeting its intended objectives. DSS implemented the recommendations from Auditor-General Report No.1 2018–19 relating to risk management, procurement and contract management, partly implemented the recommendations relating to performance monitoring, and did not effectively implement the recommendations relating to cost–benefit analysis, post-implementation review and evaluation.

11. DSS and Services Australia have effective risk management processes in place for the CDC program, although DSS has not yet developed a risk-based compliance framework. DSS’ limited tender procurement processes were undertaken in accordance with Commonwealth Procurement Rules; however, DSS’ due diligence over its procurement of the Traditional Credit Union could have been more thorough. Contract management arrangements with the card providers are effective. A service level agreement between DSS and Services Australia was finalised in April 2022. Recommendations from Auditor-General Report No.1 2018–19 relating to risk management, procurement and contract management were implemented.

12. Internal performance measurement and monitoring processes for the CDC program are not effective. Monitoring data exists, but it is not used to provide a clear view of program performance due to limited performance measures and no targets. DSS established external performance measures for the CDC program. These were found to be related to DSS’ purpose and key activities, but one performance indicator was not fully measurable. External public performance reporting was accurate. Recommendations from Auditor-General Report No.1 2018–19 relating to performance measurement and monitoring were partly implemented.

13. The CDC program extension and expansion was not informed by an effective second impact evaluation, cost–benefit analysis or post-implementation review. Although DSS evaluated the CDC Trial, a second impact evaluation was delivered late in the implementation of the CDC program, had similar methodological limitations to the first impact evaluation and was not independently reviewed. A cost–benefit analysis and post-implementation review on the CDC program were undertaken but not used. The recommendations from Auditor-General Report No.1 2018–19 relating to evaluation, cost–benefit analysis and post-implementation review were not effectively implemented.

Supporting findings

Risk management, procurement and contract management

14. There are fit-for-purpose risk management approaches in place in DSS and Services Australia for the CDC program although there is no risk-based compliance strategy. Risks are identified and treatments are established. DSS’ CDC risk management processes are aligned with the DSS enterprise risk framework. Services Australia has appropriate risk documentation and processes in place. Documentation of shared risk between DSS and Services Australia is developing. (Paragraphs 2.4 to 2.37)

15. The limited tender procurements for the extension and expansion of CDC services and for an additional card issuer in the Northern Territory were undertaken in a manner that is consistent with the Commonwealth Procurement Rules. Procurement processes, procurement decisions and conflict of interest declarations were documented. The conduct of procurements through limited tender was justified in reference to appropriate provisions within the Commonwealth Procurement Rules. The procurement of Indue for expanded card services was largely effective. A value for money assessment for one of the Indue procurements was not fully completed. The procurement of a second card provider (TCU) was meant to be informed by a scoping study. The scoping study, which was contracted to TCU, did not fully inform the subsequent limited tender procurement. There was limited due diligence into TCU’s ability to deliver the services. A value for money assessment was conducted during contract negotiations. (Paragraphs 2.38 to 2.67)

16. There are appropriate contract management and service delivery oversight arrangements in place for the CDC program. Effective contract management plans are in place for the contracts with the card providers. Documentation supporting the monitoring of Indue service delivery risk could be more regularly reviewed. In April 2022, DSS and Services Australia finalised a CDC service level agreement. This was established late in the relationship, which commenced in 2016. (Paragraphs 2.68 to 2.90)

Performance measurement and reporting

17. Reports of internal performance measures are not produced as required under the CDC data monitoring strategy. There are a number of data reports provided to and considered by DSS on a regular basis. These reports include some performance measures and no performance targets, and provide limited insight into program performance or impact. DSS has not implemented the recommendation from Auditor-General Report No.1 2018–19 that it fully utilise all available data to measure performance. (Paragraphs 3.5 to 3.19)

18. In 2020–21, DSS developed two external performance indicators for the CDC program. An ANAO audit of DSS’ 2020–21 Annual Performance Statement found that the two indicators were directly related to a key activity (the CDC program), that one of the performance indicators was measurable, and part of the second indicator was not measurable because it was not verifiable and was at risk of bias. A minor finding was raised. DSS reports annually against the two CDC performance measures. (Paragraphs 3.22 to 3.32)

Evaluation, cost–benefit analysis and post-implementation review

19. DSS’ management of the second impact evaluation of the CDC Trial was ineffective. Results from a second impact evaluation were delivered 18 months after the original agreed timeframe and there is limited evidence the evaluation informed policy development. The commissioned design of the second impact evaluation did not require the evaluators to address the methodological limitations that had been identified in the first impact evaluation. DSS did not undertake a legislated review of the evaluation. (Paragraphs 4.7 to 4.49)

20. A cost–benefit analysis and post-implementation review were undertaken on the CDC. Due to significant delays and methodological limitations, this work has not clearly informed the extension of the CDC or its expansion to other regions. (Paragraphs 4.50 to 4.73)

Recommendations

Recommendation no. 1

Paragraph 3.20

Department of Social Services develops internal performance measures and targets to better monitor CDC program implementation and impact.

Department of Social Services’ response: Agreed.

Recommendation no. 2

Paragraph 4.39

Department of Social Services undertakes an external review of the second impact evaluation of the CDC.

Department of Social Services’ response: Disagreed.

Summary of entity responses

Department of Social Services

The Department of Social Services (the department) acknowledges the insights and opportunities for improvement outlined in the Australian National Audit Office (ANAO) report on Implementation and performance of the Cashless Debit Card (CDC) Trial — Follow-on.

We acknowledge the ANAO’s overall conclusion that the department’s administrative oversight of the program is largely effective. The department accepts the conclusion relating to internal performance measures. We acknowledge the rationale and supporting evidence that the second impact evaluation and the cost-benefit analysis were constrained by limitations to available data.

The department accepts Recommendation 1 and acknowledges the suggested opportunities for improvement. The department has taken steps to address these and actions are either underway or already complete. This will strengthen the department’s oversight of the operation and effectiveness of the CDC program.

The department does not agree with Recommendation 2. Limitations of the second impact evaluation are openly acknowledged. An external review will not generate additional evidence or insights and would only reiterate data availability and accessibility constraints. This would not constitute value for money to the taxpayer.

The department is supportive of the independent review process and commits to undertaking a review of any future evaluations.

ANAO comment on Department of Social Services response

21. In relation to Recommendation 2, the Social Services Legislation Amendment (Cashless Debit Card Trial Expansion) Act 2018 included a requirement that any review or evaluation of the CDC Trial must be reviewed by an independent expert within six months of the Minister receiving the final report (refer note ‘c’ to Table 1.1). Contrary to the legislative requirement, the evaluation of the CDC program was never reviewed by an independent expert. Although the Department of Social Services (DSS) describes the 2021 evaluation as having limitations, the results were used by DSS to conclude that it met one of two externally reported CDC program performance measures: ‘Extent to which the CDC supports a reduction in social harm in communities’ (refer paragraph 3.30). In making the legislative amendment, the clear intent of Parliament was to obtain independent assurance that the cashless welfare arrangements are effective in order to inform expansion of the arrangements beyond the trial areas. A review of the evaluation methodology would, moreover, help ensure that the design of future evaluation work is fit for purpose and represents an appropriate use of public resources.

Services Australia

Services Australia (the agency) welcomes this report and notes that there are no recommendations directed at the agency. Recognising that the audit concluded that the agency had effective risk management processes in place for the Cashless Debit Card (CDC) program, we will ensure that the improvement opportunity identified for risk treatments to be reviewed regularly is incorporated into these processes.

In respect to the service level agreement between the agency and the Department of Social Services, this was finalised and acknowledged by the ANAO during the report comment period in April 2022. We will take into consideration the broader audit findings, and incorporate any lessons where appropriate as we work with the Department of Social Services to implement the CDC program policies and deliver services.

Key messages from this audit for all Australian Government entities

Below is a summary of key messages, including instances of good practice, that have been identified in this audit and which may be relevant for the operations of other Australian Government entities.

Policy/program design

Performance and impact measurement

Policy/program implementation

1. Background

Introduction

1.1 The Australian Government introduced the Cashless Debit Card (CDC) Trial, later known as the CDC program, in 2016.

1.2 Under the CDC program, a portion of a participant’s income support payment is allocated to a restricted bank account, accessed by a debit card (the CDC). The CDC does not allow cash withdrawals or the purchase of alcohol, gambling or cash-like products. The objective of the CDC program is to assist people receiving income support to better manage their finances and encourage socially responsible behaviour.

1.3 The Department of Social Services (DSS) and Services Australia are responsible for the CDC program. Service delivery functions were originally delivered by DSS and transitioned to Services Australia from March 2020.1 There are two card providers: Indue Ltd (Indue) and the Traditional Credit Union (TCU). Other organisations support the CDC program including local partners and Australia Post outlets (contracted by Indue), and community panels.2 The roles and responsibilities of DSS, Services Australia, Indue, TCU and local partners are set out in Appendix 3.

1.4 In 2020–21 the cost of the CDC program was $36.5 million.

1.5 A number of supporting services and programs have been introduced and funded by the Australian Government as part of the CDC program to provide direct assistance to CDC participants and communities. These include employment programs and training; mobile outreach services for vulnerable people; child, parent and youth support services; family safety programs to reduce violence against women and children; alcohol and drug services; allied health and mental health programs; and assistance to manage and build personal financial capability. These programs are delivered by non-government organisations in the CDC regions. Funding for these programs totalled $22.1 million across 2020–21 and 2021–22.

Cashless Debit Card enabling legislation

1.6 There have been several amendments to the Social Security (Administration) Act 1999 (the SSA Act) to establish, expand and extend the CDC Trial and program (Table 1.1).

Table 1.1: CDC legislative changes, 2015 to 2020

| Name of legislation | Date passed | Changes to the CDC |
|---|---|---|
| Social Security Legislation Amendment (Debit Card Trial) Act 2015 | 12 November 2015 | |
| Social Security Legislation Amendment (Cashless Debit Card) Act 2018 | 20 February 2018 | |
| Social Services Legislation Amendment (Cashless Debit Card Trial Expansion) Act 2018 | 21 September 2018 | |
| Social Security (Administration) Amendment (Income Management and Cashless Welfare) Act 2019 | 5 April 2019 | |
| Social Security (Administration) Amendment (Cashless Welfare) Act 2019 | 12 August 2019 | |
| Social Security (Administration) Amendment (Continuation of Cashless Welfare) Act 2020 | 17 December 2020 | |

Note a: Legislative instruments are laws on matters of detail made by a person or body authorised to do so by the relevant enabling (or primary) legislation.

Note b: Notifiable instruments differ from legislative instruments in that they are not subject to parliamentary scrutiny or automatic repeal (sun-setting).

Note c: The amendment was that ‘(1) If the Minister or the Secretary causes a review of the trial of the cashless welfare arrangements mentioned in section 124PF to be conducted, the Minister must cause the review to be evaluated. (2) The evaluation must: (a) be completed within 6 months from the time the Minister receives the review report; and (b) be conducted by an independent evaluation expert with significant expertise in the social and economic aspects of welfare policy. (3) The independent expert must: (a) consult trial participants; and (b) make recommendations as to (i) whether cashless welfare arrangements are effective; and (ii) whether such arrangements should be implemented outside of a trial area. (4) The Minister must cause a written report about the evaluation to be prepared. (5) The Minister must cause a copy of the report to be laid before each House of Parliament within 15 days after the completion of the report.’

Note d: Participants can apply to exit the CDC program if they meet the eligibility criteria and provide all necessary documentary evidence.

Note e: Income Management, or welfare quarantining, is designed to assist income support recipients to budget their income support payments to ensure they have the basic essentials, such as food, education, housing and electricity. Income Management was introduced in 2007 and is in place in 14 regions across Australia.

Source: ANAO analysis of relevant legislation.

1.7 In the December 2020 legislative amendment, the objectives of the CDC were changed as set out in Table 1.2.

Table 1.2: Changes to the CDC program objectives

| SSA Act, November 2015 | SSA Act 10, December 2020 |
|---|---|
| The objects of this Part are to trial cashless welfare arrangements so as to: | The objects of this Part are to administer cashless welfare arrangements so as to: |

Source: SSA Act, section 124PC.

Location, scope and size of the Cashless Debit Card Trial and program

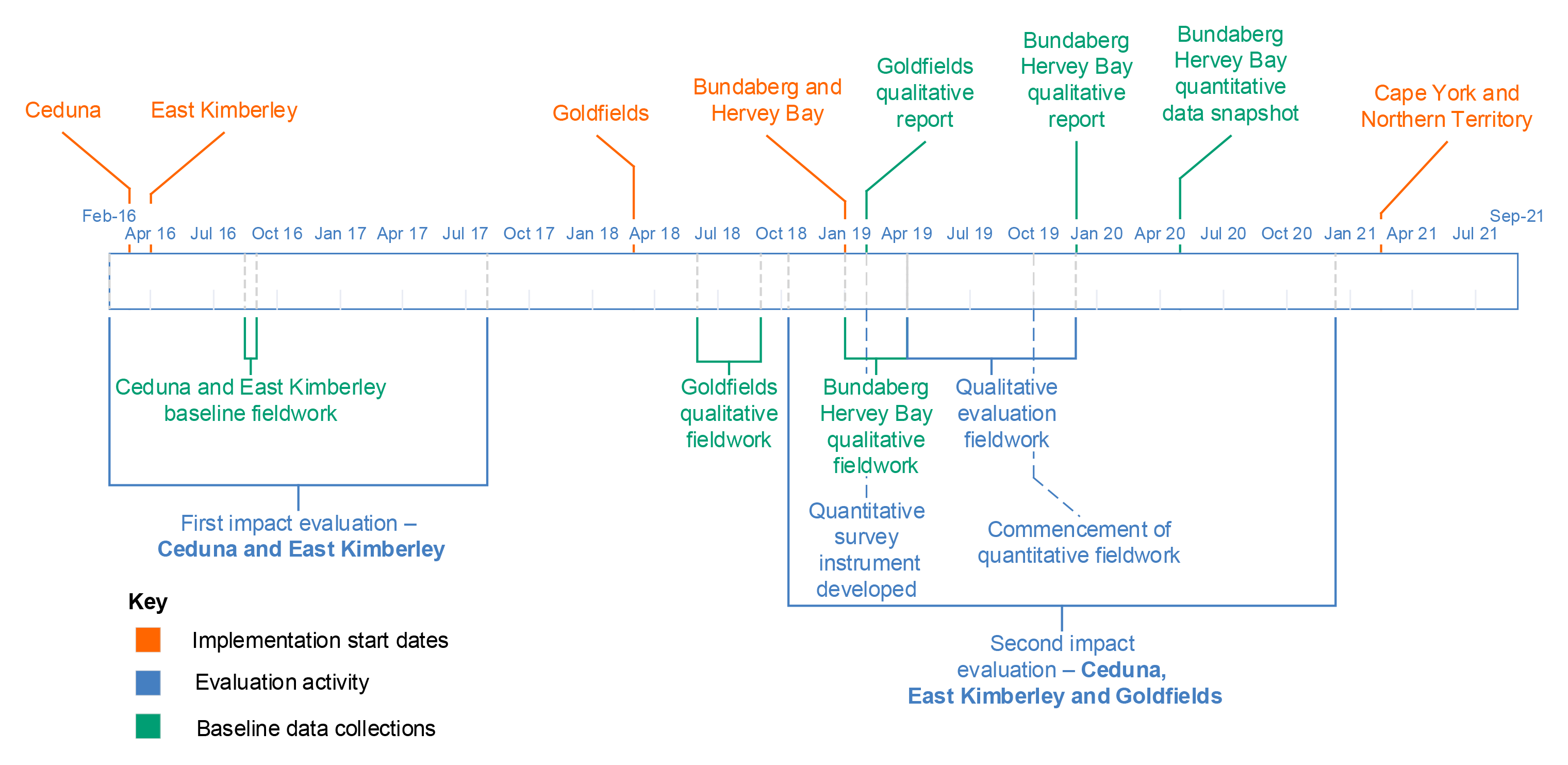

1.8 The CDC Trial commenced in Ceduna in South Australia in March 2016 and the East Kimberley region in Western Australia in April 2016. The CDC Trial was expanded to: the Goldfields region in Western Australia in March 2018; the Bundaberg and Hervey Bay region in Queensland in January 2019; and the Cape York region in Queensland and the Northern Territory in March 2021 (Figure 1.1).

Figure 1.1: CDC locations

Source: ANAO analysis of CDC eligibility.

1.9 Table 1.3 provides an overview of the CDC regions, date of commencement, eligible participants and split of income support payments on the CDC.

Table 1.3: CDC program eligibility and conditions

| CDC region | Date of commencement | Eligible participants | Split of income support payments |
|---|---|---|---|
| Ceduna, South Australia | March 2016 | The program applies to all people who receive a ‘trigger’ payment (note a) and are not at pension age. | Participants receive 20 per cent of their welfare payment in their regular bank account, which can be spent as they wish and can be accessed as cash; and 80 per cent of their welfare payment on to the CDC. |
| East Kimberley, Western Australia | April 2016 | | |
| Goldfields, Western Australia | March 2018 | | |
| Bundaberg and Hervey Bay, Queensland | December 2018 | The program applies to people aged under 36 years who receive JobSeeker Payment, Youth Allowance (JobSeeker), Parenting Payment (Partnered) and Parenting Payment (Single). | |
| Cape York, Queensland | March 2021 | The program applies to people who have been referred to the Queensland Family Responsibilities Commission (note b). | The Queensland Family Responsibilities Commission determines the CDC payment split. |
| Northern Territory | March 2021 | The program applies to existing Income Management (BasicsCard) (note c) participants in the Northern Territory who volunteer to transition to the CDC. | Participants receive the same payment split they received under Income Management. In most cases, they receive 50 per cent of their welfare payment in their regular bank account and 50 per cent of their welfare payment on to the CDC. For participants that have been referred under the Child Protection Measure or by a recognised authority of the Northern Territory (the Banned Drinkers Register), 70 per cent will be placed on to the CDC. |

Note a: Subsection 124PD(1) of the SSA Act defines a trigger payment as a social security benefit or a social security pension of the following kinds: Carer Payment, Disability Support Pension, Parenting Payment Single; or ABStudy that includes an amount identified as living allowance.

Note b: The Queensland Family Responsibilities Commission is an independent statutory body that aims to support welfare reform, and community members and their families; restore socially responsible standards of behaviour; and establish local authority.

Note c: The BasicsCard was introduced in 2007 to support the Australian Government’s Income Management initiative. It can only be used at approved merchants. It differs from the CDC in that it cannot be used to purchase cigarettes or pornographic material, in addition to the common restrictions on alcohol purchase, gambling and cash withdrawals.

Source: ANAO analysis of CDC eligibility and DSS advice to the ANAO.

1.10 In addition to the payment splits shown in Table 1.3, 100 per cent of any lump sum payments, for example the Newborn Upfront Payment3, are paid on to the participant’s CDC.
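The arithmetic behind the payment splits in Table 1.3 and paragraph 1.10 can be illustrated with a short Python sketch. It is an illustration only: the function name, the share labels, the rounding to whole cents and the example amounts are all assumptions, and the sketch does not describe how Services Australia actually calculates payments.

```python
from decimal import Decimal, ROUND_HALF_UP

# Shares of a payment placed on the CDC, taken from Table 1.3.
# The dictionary keys are hypothetical labels chosen for this sketch.
CDC_SHARES = {
    "standard": Decimal("0.80"),      # e.g. Ceduna
    "nt_most_cases": Decimal("0.50"), # Northern Territory, most cases
    "nt_referred": Decimal("0.70"),   # Northern Territory, referred participants
}

def split_payment(amount, cdc_share, lump_sum=False):
    """Return (regular_account, cdc_account) in dollars for one payment.

    Lump sum payments are placed entirely on the CDC (paragraph 1.10).
    Rounding to the nearest cent is an assumption of this sketch.
    """
    amount = Decimal(amount)
    if lump_sum:
        return Decimal("0.00"), amount
    cdc = (amount * cdc_share).quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)
    return amount - cdc, cdc

# Hypothetical $500.00 payment under the 80/20 split.
regular, cdc = split_payment("500.00", CDC_SHARES["standard"])
```

Under these assumptions, a hypothetical $500.00 payment on the 80/20 split would place $400.00 on the CDC and $100.00 in the regular bank account, while a lump sum of the same amount would be placed entirely on the CDC.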

1.11 Participants remain on the CDC program if they move to a different region of Australia, unless they apply to exit the CDC or there is a well-being exemption. Exit applications are managed by Services Australia. The Services Australia delegate may determine that a participant can exit the CDC if the participant can demonstrate reasonable and responsible management of their affairs, including finances. There are also well-being exemptions if the Secretary of DSS is satisfied that participation in the CDC would pose a serious risk to a person’s mental, physical or emotional wellbeing.

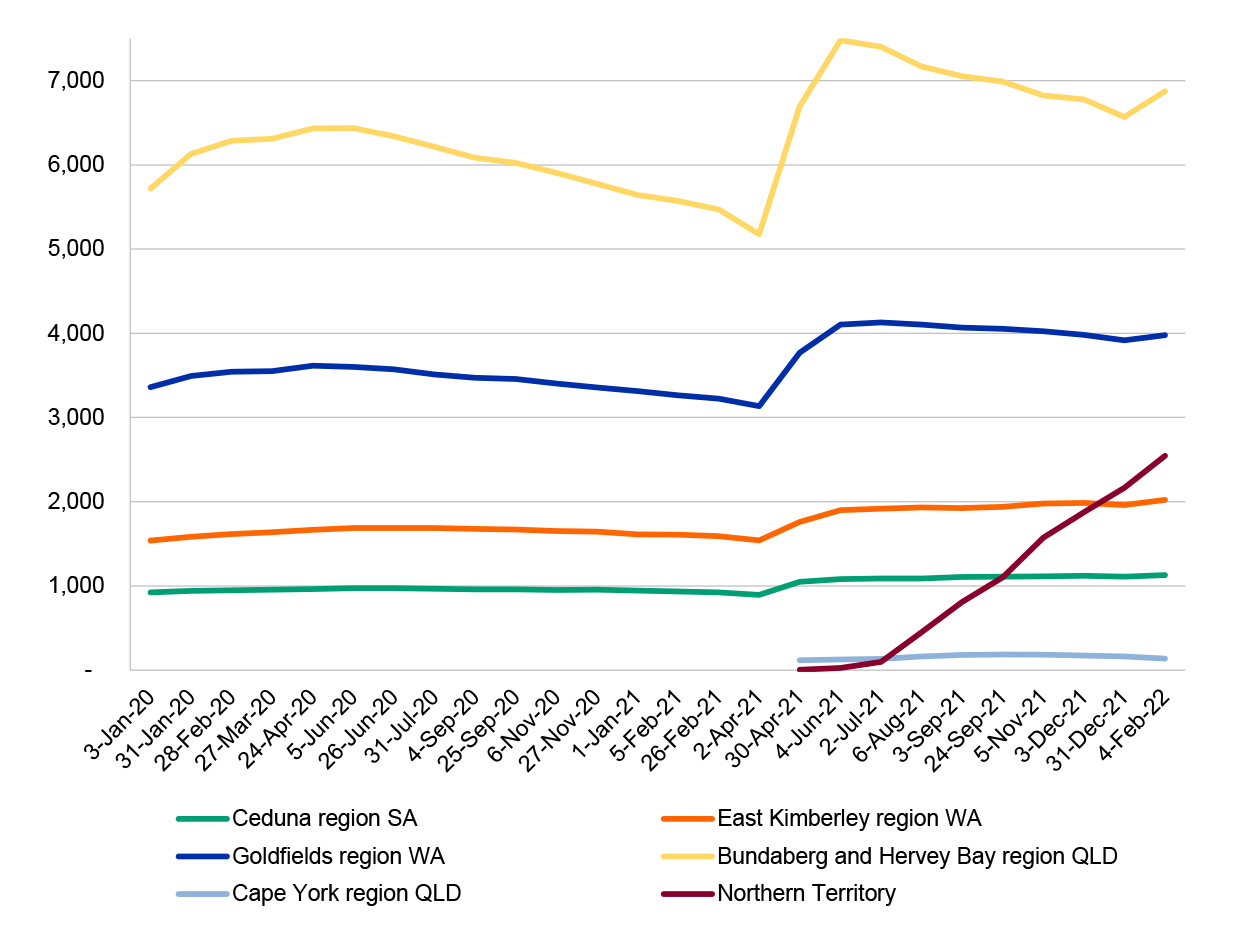

1.12 Figure 1.2 below shows the number of CDC participants by region. As at February 2022, the total number of active participants across all regions was 16,685.

Figure 1.2: Number of CDC participants, January 2020 to February 2022

Note: The decrease in active participants in the Bundaberg and Hervey Bay and Goldfields regions between June 2020 and April 2021 was partly due to the coronavirus disease 2019 (COVID-19) pandemic. The Minister agreed to pause the commencement of new participants on to the CDC due to the high workload that Services Australia was experiencing processing income support payments. The decrease was also due to CDC participants moving off the CDC for various reasons such as cessation of income support.

Source: ANAO analysis of CDC reports available from data.gov.au.

CDC technology

1.13 The CDC looks and operates like a regular financial institution transaction card (Figure 1.3), except that it cannot be used to buy alcohol, gambling products or cash-like products; or to withdraw cash. The CDC has contactless ‘tap and pay’ functionality. The participant’s CDC bank account accrues interest. The CDC can be used for online shopping, payment of bills online and payment for goods and services at most merchants nationwide that have eftpos or Visa facilities.4

Figure 1.3: Cashless Debit Cards

Source: DSS documentation.

1.14 For the purposes of the CDC, merchants are categorised into three groups:

- unrestricted merchants, which sell only unrestricted items (the majority of merchants);

- restricted merchants, which primarily sell restricted items; and

- mixed merchants, which sell both restricted and unrestricted items.

1.15 The card cannot be used at restricted merchants. Restricted merchants are those where the primary business is the sale of restricted products such as alcohol or gambling services. This includes online merchants that primarily sell restricted products.

1.16 Product level blocking assists CDC participants to shop at mixed merchants. Product level blocking prevents the purchase of restricted items at mixed merchants by automatically detecting restricted items at the point of sale. It aims to simplify operations for small businesses that wish to accept the CDC, and to provide more choice for participants.

1.17 Product level blocking involves three elements: a card payment terminal (‘PIN pad’), a point-of-sale system and a payment integrator that connects the PIN pad to the point-of-sale system. When items are scanned at the checkout point, the point-of-sale system checks for restricted items and sends a restricted item flag to the PIN pad. When the customer presents the CDC to the PIN pad, the PIN pad recognises the CDC and checks if there are any restricted item flags. If restricted items are flagged, the PIN pad cancels the transaction and displays the message ‘Cancelled, Restricted Item’ to the cardholder. The cardholder can remove the restricted items, purchase the restricted items with another payment method or cancel the sale altogether.
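The product level blocking sequence described in paragraph 1.17 can be sketched in Python as two steps: the point-of-sale system raises a restricted item flag, and the PIN pad cancels the transaction if a CDC is presented while that flag is set. This is a simplified illustration, not the card providers' implementation. The class and function names and the "Approved" outcome are assumptions; only the cancellation message comes from the report.

```python
from dataclasses import dataclass

CANCEL_MESSAGE = "Cancelled, Restricted Item"  # message quoted in paragraph 1.17

@dataclass
class Item:
    name: str
    restricted: bool  # True for restricted products such as alcohol

def pos_restricted_flag(basket):
    """Point-of-sale step: flag the sale if any scanned item is restricted."""
    return any(item.restricted for item in basket)

def pin_pad_decision(card_is_cdc, restricted_flag):
    """PIN pad step: cancel only when a CDC is presented and a flag is set.

    "Approved" is a placeholder outcome for this sketch; a real transaction
    would still depend on other checks such as the account balance.
    """
    if card_is_cdc and restricted_flag:
        return CANCEL_MESSAGE
    return "Approved"

# Hypothetical basket containing one restricted item.
basket = [Item("bread", False), Item("wine", True)]
outcome = pin_pad_decision(card_is_cdc=True, restricted_flag=pos_restricted_flag(basket))
```

In this sketch the same basket paid for with another payment method is not cancelled, which mirrors the option in paragraph 1.17 for the cardholder to purchase the restricted items by other means.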

1.18 Product level blocking was initially implemented in 2018 by three major retailers — Australia Post, Woolworths and Coles. In February 2019 DSS commenced a product level blocking project targeted at small to medium-sized merchants. From June 2020 onwards, product level blocking was extended to more merchants. DSS advised that as at September 2021 there were 30 participating small to medium-sized merchants across the CDC regions, and work to increase the numbers was ongoing.

Rationale for undertaking the audit

1.19 Auditor-General Report No.1 2018–19 The Implementation and Performance of the Cashless Debit Card Trial found that while DSS largely established appropriate arrangements to implement the CDC Trial, its approach to monitoring and evaluation was inadequate. It was therefore difficult to conclude if the CDC Trial was effective in achieving its objective of reducing social harm and whether the card was a lower cost welfare quarantining approach compared to other components of Income Management such as the BasicsCard.

1.20 The report made six recommendations relating to risk management, procurement, contract management, performance monitoring, cost–benefit analysis, post-implementation reviews and evaluation.

1.21 This follow-on audit provides the Parliament with assurance as to whether:

- DSS has addressed the agreed 2018–19 Auditor-General recommendations;

- DSS’ management of the extended CDC program is effective; and

- the extended CDC program was suitably informed by a second impact evaluation of the CDC Trial.

Audit approach

Audit objective, criteria and scope

1.22 The objective of the audit was to examine the effectiveness of DSS’ administration of the Cashless Debit Card program, including implementation of the recommendations made in Auditor-General Report No.1 2018–19 The Implementation and Performance of the Cashless Debit Card Trial.

1.23 To form a conclusion against the audit objective, the following criteria were applied:

- Do DSS and Services Australia have effective risk management, procurement and contract management processes in place for the CDC program?

- Has DSS implemented effective performance measurement and monitoring processes for the CDC program?

- Was the expansion of the CDC program informed by findings and lessons learned from an effective evaluation, cost–benefit analysis and post-implementation review of the CDC Trial?

Audit methodology

1.24 The audit involved:

- reviewing contracts with the card providers and the bilateral arrangements between DSS and Services Australia, including reporting against deliverables;

- reviewing procurement activity in DSS;

- examining an evaluation report, post-implementation review and cost–benefit analysis on the CDC Trial, including the underpinning methodologies and application of the findings;

- reviewing DSS’ performance monitoring and reporting;

- reviewing the end-to-end business process for the extraction, provision and usage of CDC participant data;

- meeting with staff in DSS and Services Australia; and

- considering four submissions received through the ANAO citizen contribution facility.

1.25 The audit was conducted in accordance with ANAO Auditing Standards at a cost to the ANAO of approximately $399,100.

1.26 The team members for this audit were Renina Boyd, Sonya Carter, Supriya Benjamin, Ji Young Kim, Peta Martyn and Christine Chalmers.

2. Risk management, procurement and contract management

Areas examined

This chapter examines whether the Department of Social Services (DSS) and Services Australia have effective risk management, procurement and contract management processes in place to support the administration of the Cashless Debit Card (CDC) program.

Conclusion

DSS and Services Australia have effective risk management processes in place for the CDC program, although DSS has not yet developed a risk-based compliance framework. DSS’ limited tender procurement processes were undertaken in accordance with Commonwealth Procurement Rules, however DSS’ due diligence over its procurement of the Traditional Credit Union could have been more thorough. Contract management arrangements with the card providers are effective. A service level agreement between DSS and Services Australia was finalised in April 2022. Recommendations from Auditor-General Report No.1 2018–19 relating to risk management, procurement and contract management were implemented.

Areas for improvement

The ANAO suggested that DSS promptly develops a compliance framework for the CDC program and that Services Australia ensures that risk treatments are reviewed regularly.

2.1 Auditor-General Report No.1 2018–19 The Implementation and Performance of the Cashless Debit Card Trial found that DSS did not actively monitor risks identified in risk plans, there were some deficiencies in elements of the procurement process and there were weaknesses in its approach to contract management.

2.2 The ANAO recommended in 2018–19 that DSS should: confirm risks are rated according to its risk management framework requirements; ensure risk mitigation strategies and treatments are appropriate and regularly reviewed; implement a consistent and transparent approach when assessing tenders; fully document procurement decisions; and employ appropriate contract management practices.5

2.3 The ANAO examined the:

- risk management arrangements including treatment of shared and fraud risks, issues escalation processes and establishment of a risk-based compliance strategy;

- procurement processes in place for the CDC program; and

- contract and service management processes.

Are fit-for-purpose risk management arrangements in place?

There are fit-for-purpose risk management approaches in place in DSS and Services Australia for the CDC program although there is no risk-based compliance strategy. Risks are identified and treatments are established. DSS’ CDC risk management processes are aligned with the DSS enterprise risk framework. Services Australia has appropriate risk documentation and processes in place. Documentation of shared risk between DSS and Services Australia is developing.

2.4 As non-corporate Commonwealth entities, DSS and Services Australia must manage risk in accordance with the Commonwealth Risk Management Policy. The goal of the Commonwealth Risk Management Policy is to embed risk management into the culture of Commonwealth entities where a shared understanding of risk leads to well-informed decision making.6

DSS’ CDC risk management arrangements

2.5 The DSS enterprise risk management framework (the DSS risk framework) includes a risk assessment toolkit, risk matrix and templates for risk and issues logs. The DSS risk framework is generally consistent with the Commonwealth Risk Management Policy. DSS’ overall enterprise risk appetite is low.

CDC risk management plans

2.6 In accordance with the DSS risk framework, DSS has developed a risk management plan for the CDC program. The CDC risk management plan is reviewed monthly and endorsed by the managers of the two DSS branches involved in administering the CDC program: the Cashless Welfare Policy and Technology Branch and the Cashless Welfare Engagement and Support Services Branch.

2.7 The August 2021 CDC risk management plan outlines 11 risks, comprising eight ‘medium’ rated risks and three ‘high’ rated risks. The high rated risks are:

- evaluation [of the CDC program] is not perceived as being independent, methodologically sound and/or does not extend and enhance the evidence base of the CDC;

- failure to maintain stakeholder support for the CDC program; and

- the transition of Income Management participants in the Northern Territory and Cape York to the CDC does not occur seamlessly for participants.

2.8 The risk ‘that a second issuer of the CDC in the Northern Territory is not implemented in a timely manner’ was rated incorrectly — according to the DSS risk matrix it should have been rated high whereas it was rated medium. This means that it did not receive the required risk escalation discussed at paragraph 2.12. This risk is now closed as a second card issuer in the Northern Territory has been contracted.

2.9 The CDC risk management plan contains the elements of an effective risk plan: risks, controls and treatments are identified. No risk tolerances or appetites are prescribed for CDC risks and the DSS enterprise risk framework does not require individual programs to establish a tolerance. Although the CDC program area did not use DSS’ Risk-E tool, which automatically calculates risk ratings7, it received support from the DSS enterprise risk area for an alternative approach using a different risk management template. Analysis of the risk management plan found that: 10 of the 11 risks are rated correctly; controls and their effectiveness are identified; and treatments are in place for mitigating seven of eight medium rated risks and all high rated risks.

2.10 As found in Auditor-General Report No.1 2018–19, most treatments are listed as ongoing rather than having specific due dates. Some treatments had specific due dates but these dates were obsolete, indicating a lack of review of the treatments. For example, the August 2021 risk management plan contained one treatment for an open risk that was still listed as due for completion in May 2021 and two treatments that were still listed as due for completion in July 2021.

Risk reporting

2.11 Project status reports for the CDC program cover issues and high risks, and are provided to DSS’ enterprise Implementation Committee and Executive Management Group quarterly. One incorrectly rated risk was not included in the status reports but was included in a risk management plan which accompanies the status reports.

2.12 According to the DSS risk framework, high and extreme risks must be reported to the relevant branch and group managers (for awareness, treatment authorisation or acceptance) and the Chief Risk Officer (for awareness and consolidation). The Group Manager, Communities reviews the CDC risk management plan and issues log (refer paragraph 2.33) before they are provided to the Executive Management Group. DSS advised that the Chief Risk Officer is provided with a copy of the CDC risk management plan and issues log on a quarterly basis as a member of the Executive Management Group.

2.13 Risk is a standing item at the CDC Steering Committee.8 DSS and Services Australia risk registers are tabled and discussed at the meetings. Steering Committee discussions cover risks shared by DSS and Services Australia as well as the development of a shared risk register. Risks are also discussed regularly at the other CDC governance committees, comprising the:

- Northern Territory Transition Implementation Committee;

- Bundaberg and Hervey Bay Community Reference Group;

- Cashless Welfare in Cape York Meeting;

- Cashless Welfare Working Group (refer paragraph 2.90); and

- Cashless Debit Card Technology Working Group.

Services Australia’s CDC risk management arrangements

2.14 Services Australia has a risk management framework, risk management policy, enterprise risk management model dated May 2021 and a suite of risk management tools including a risk matrix and risk management process guidelines.

2.15 Services Australia has two risk plans in place to assist in the management of CDC risks. The first plan (for CDC extension and expansion) comprises two low and five medium rated risks. The second plan documents the risks associated with the transition from Income Management to the CDC in the Northern Territory and Cape York. The second plan has one low and 11 medium rated risks. The open risks at February 2022 were rated in line with Services Australia’s risk matrix.9

2.16 The Services Australia risk management plans contain the required elements of an effective risk plan, including risks and controls with assigned owners and treatments. The two risk plans were approved by the Deduction and Confirmation Branch National Manager.

2.17 The plan for the transition to the CDC in the Northern Territory and Cape York states that it should be reviewed monthly or when significant change occurs. Services Australia advised the ANAO that the plans are reviewed monthly and senior executive endorsement of the plans generally occurs on a quarterly basis or when a significant change is made. In both of the latest plans, the treatments for open risks are overdue. Services Australia should ensure that risk treatments are reviewed regularly, so that risk ratings and specified treatments remain appropriate.

Management of CDC shared risks

2.18 Entities have a responsibility under the Commonwealth Risk Management Policy to implement arrangements to understand and contribute to the management of shared risks.10 The bilateral management arrangement between DSS and Services Australia (refer paragraph 2.85) requires the entities to work cooperatively to identify and manage existing and emerging risks, and to comply with Commonwealth risk management policies.11 The DSS risk framework states that in order to manage shared risks, DSS must develop joint risk management plans that determine the governance arrangements in overseeing and monitoring shared risks.

2.19 Prior to October 2021, where a risk control was the joint responsibility of DSS and Services Australia, DSS stated this in its CDC risk management plan. Services Australia also had DSS listed as a joint risk owner in both of its risk management plans against relevant risks and controls. For example, a risk that ‘customer communication is ineffective’ is jointly owned by DSS and Services Australia in the Services Australia CDC risk management plan. Joint risks and controls were communicated through sharing respective CDC risk management plans at CDC Steering Committee meetings.

2.20 DSS and Services Australia did not have an approved shared risk register in place for the CDC program until October 2021, when a shared risk register was endorsed by the CDC Steering Committee. From October 2021, the content of the shared risk register is agreed by the relevant branch manager of each entity. The DSS and Services Australia shared risk register contains five risks that are all rated medium using the Services Australia risk matrix. The minutes of the 21 October 2021 meeting of the CDC Steering Committee note that the joint risk register is supported by the entity risk plans, which provide further detail on the risks. The risks in the shared risk register focus on the transition of participants from Income Management to the CDC in the Northern Territory rather than on the CDC more broadly.

2.21 The shared risk register does not contain a risk review date, risk control owners or treatment owners, or due dates for treatments. Services Australia advised the ANAO that the detail of risk controls and treatments, including their due dates, appears in the entities’ respective risk plans rather than the shared risk register. Services Australia also advised that further detail, such as the risk identification number, would be added to the shared risk register to allow for clearer linkage between the shared risk plan and the respective DSS and Services Australia risk plans. All risks in the shared risk register could be mapped by the ANAO to the Services Australia risk register. However, approximately 50 per cent of the treatments and controls in the shared risk register could not be mapped to the Services Australia register and there was no linkage to shared risks in the DSS risk register. It is unclear which entity owns the unmapped treatments and controls for shared risks.

Fraud and compliance risk

2.22 The kinds of fraud and compliance risks that could affect the CDC program include:

- participant fraud — CDC cardholders circumvent the program;

- merchant fraud — merchants circumvent the program;

- third party fraud — fraud or theft by family, friends or other members of the public; and

- service provider fraud — fraud committed by the card provider (for example, insider knowledge or abuse of market position) or the support service providers (for example, service not provided).

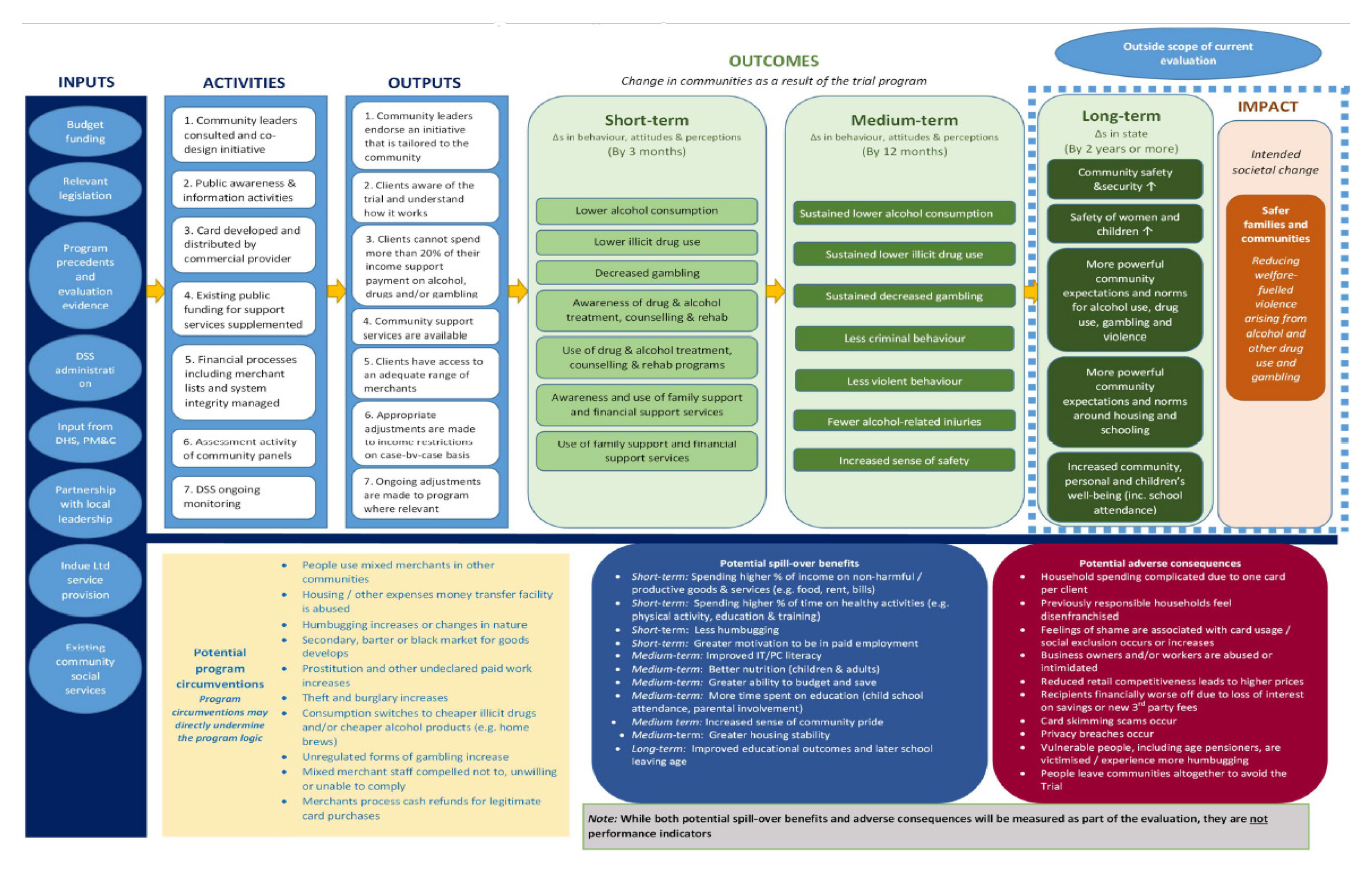

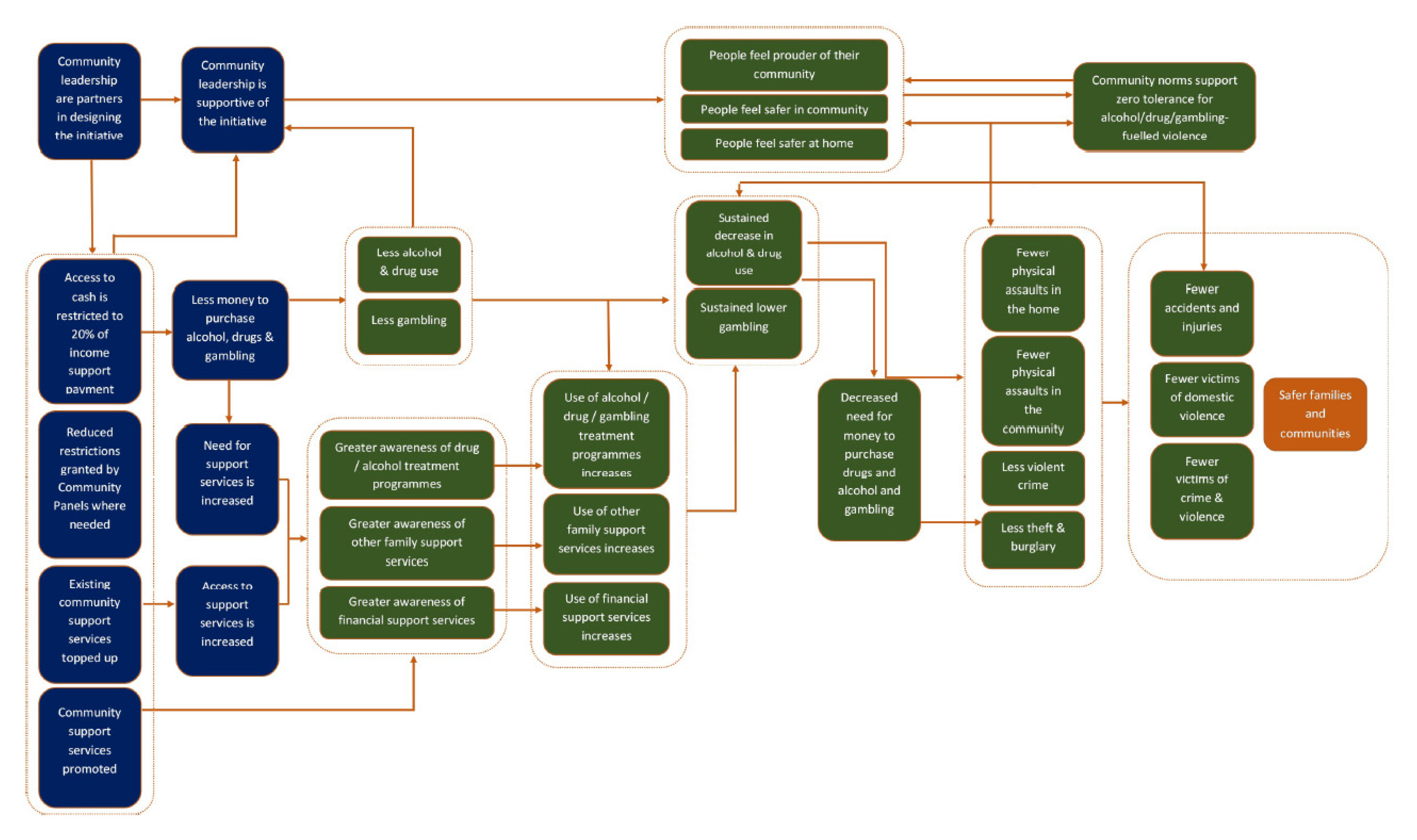

2.23 A CDC program logic developed in 2016 outlines some potential specific circumventions (refer Appendix 4).

2.24 The DSS CDC risk management plan includes two participant, merchant and third party fraud and compliance risks.

- Participant/merchant fraud — program integrity is jeopardised and participants and/or merchants significantly circumvent the program.

- Third party fraud — participants experience fraud or theft by family members, friends, other members of the public and online shopping.

2.25 The CDC risk management plan includes treatments for the two fraud and compliance risks.

- The risk controls for participant and third party fraud are to be managed primarily by Services Australia and Indue Ltd (Indue).

- Merchant fraud is to be managed primarily by DSS through product level blocking, data monitoring and merchant engagement. DSS advised the ANAO that Indue provides fraud protection services, including real time fraud detection, in line with standard banking practices.

Management of fraud by Services Australia, Indue and Traditional Credit Union

2.26 The DSS CDC risk management plan specifies the controls and treatments to be applied by Services Australia and Indue for fraud risks. The second card provider, Traditional Credit Union (TCU), has a responsibility to implement a fraud control plan and to report to DSS any instances of fraud against the Australian Government committed by TCU staff. TCU relies on Indue to apply fraud controls and treatments, as it relies on Indue systems.

2.27 Controls and treatments specified in the CDC risk management plan include provision of a ‘hotline’ and customer service centre, giving card security advice to participants, staff training, card blocking and fraud monitoring software. The ANAO did not confirm whether these fraud controls and treatments were implemented by Services Australia and Indue. DSS advised the ANAO that it monitors Services Australia and Indue’s fraud control activities through monthly operational meetings. A standing agenda item to discuss risks outlined in the CDC risk management plan was introduced in the 22 March 2022 meeting agenda and discussion was evidenced in the minutes. DSS advised that the CDC Compliance Section, established in November 2021, also reviews Indue’s performance against agreed service levels on a monthly basis. Finally, DSS advised that Indue has integrated into the existing system a new fraud monitoring software, IBM Safer Payments, to improve fraud detection. The ANAO did not confirm the application of the new software.

Management of fraud by DSS

2.28 DSS has a fraud control plan for the CDC dated February 2021. The plan includes actions such as the development of a compliance framework, fraud awareness training, analysis of CDC fraud risk, undertaking compliance checks and data analytics, and operation of a fraud hotline. DSS advised that it monitors social media12 relating to the functionality of the CDC at specific merchants. The ANAO did not confirm whether all of the actions outlined in the fraud control plan or advised to the ANAO have been implemented by DSS. Indue analytics software is used to identify suspicious merchant activity, which is reported to DSS each month. DSS conducts some merchant investigations based on this data.

2.29 DSS has two standard operating procedures in place to assist with compliance activities. These are about generating merchant investigation reports and conducting social media monitoring. DSS advised the ANAO that the CDC Compliance Section is working with the CDC Data Section to develop improved ways of identifying patterns in compliance data to identify any instances of possible circumvention by merchants or participants and whether further investigation is required.

Compliance framework

2.30 A compliance framework guides compliance activities to ensure that they are managed effectively and are risk-based, and establishes thresholds for triggering investigations and other compliance actions.

2.31 Although DSS undertakes compliance activities, thresholds for triggering compliance activity are not applied.

2.32 DSS advised the ANAO that CDC compliance is guided by the DSS 2021–23 fraud control plan and supported by an overarching Enterprise Compliance Framework dated December 2018. Although it is listed in the fraud control plan as an activity, DSS does not have a compliance framework or strategy for the CDC. In February 2022 DSS advised the ANAO that the CDC Compliance Section established within the Cashless Welfare Policy and Technology Branch intends to develop a compliance framework. This should be undertaken as a priority to ensure that compliance activities are appropriately risk-based.

CDC issues management and escalation

2.33 DSS maintains an issues log for the CDC that is reviewed monthly by the CDC branch managers. The issues log is largely consistent with the DSS departmental issues log template except for an issues consequence column, which was not included in the CDC issues log. As at August 2021, the DSS CDC issues log had seven open issues — one was rated ‘low’ and six were rated ‘medium’. It was unclear whether the issues were rated based on consequence or another consideration.

2.34 Services Australia maintains two CDC issues logs that contain 10 active issues — five minor, four moderate and one major. The major issue is about the requirement to undertake manual processing in order to transition participants on to the CDC in the Northern Territory.

2.35 DSS and Services Australia manage issues together primarily through the CDC governance committees. The ANAO was advised that time sensitive issues are raised directly with the senior managers. For example, the issue of manual transition of customers in the Northern Territory was managed by executive staff in DSS and Services Australia, and noted in the shared risk register and both entities’ risk management plans and issues logs.

2.36 Issues with Indue are managed by Services Australia through weekly meetings. DSS holds monthly meetings with Indue and an action register is kept to track operational issues arising from these meetings. DSS advised that these meetings have been held since March 2016. The register was started in late September 2021.

2.37 There is no documented issues escalation process for the CDC in DSS or Services Australia. In DSS, issues escalation occurs through the issues log update that is provided to the DSS Executive on a quarterly basis through the CDC project status report. General issues are discussed at monthly CDC Steering Committee meetings and at weekly Cashless Welfare Working Group meetings. DSS advised that issues are discussed at weekly branch meetings and that the relevant Group Manager and Deputy Secretary also meet weekly to discuss the CDC program.

Were transparent procurement processes undertaken, consistent with the Commonwealth Procurement Rules?

The limited tender procurements for the extension and expansion of CDC services and for an additional card issuer in the Northern Territory were undertaken in a manner that is consistent with the Commonwealth Procurement Rules. Procurement processes, procurement decisions and conflict of interest declarations were documented. The conduct of procurements through limited tender was justified in reference to appropriate provisions within the Commonwealth Procurement Rules.

The procurement of Indue for expanded card services was largely effective. A value for money assessment for one of the Indue procurements was not fully completed.

The procurement of a second card provider (TCU) was meant to be informed by a scoping study. The scoping study, which was contracted to TCU, did not fully inform the subsequent limited tender procurement. There was limited due diligence into TCU’s ability to deliver the services. A value for money assessment was conducted during contract negotiations.

Procurement of Indue

2.38 DSS’ contractual relationship with Indue commenced in August 2015 when DSS engaged Indue to deliver the CDC under an IT build contract for delivery of systems technology and infrastructure for the CDC. A contract was then required for Indue to deliver operational aspects of the CDC Trial, commencing April 2016, including a debit card product, spending restrictions and ongoing support to participants.

2.39 The operational contract was varied 10 times between April 2016 and February 2022. Variations were to extend the duration of the CDC program, expand the CDC to additional regions and implement functionality improvements.

2.40 The operational contract between DSS and Indue states that DSS can extend the contract more than once, provided that it does not extend the commitment beyond five years after the start date of the contract (that is, April 2021). As amendments to the Social Security (Administration) Act 1999 (SSA Act) extended the CDC program beyond April 2021, DSS was required to extend CDC service provision in existing sites and newly introduced sites to 31 December 2022 through a new procurement.

Procurement to extend services in existing CDC sites

2.41 On 15 December 2020 the DSS delegate approved a limited tender procurement, with the sole tenderer being Indue, for the continued delivery of CDC card and account services in existing sites.13 A request for quote was issued to Indue for the provision of CDC and associated services to existing sites for the period 1 January 2021 to 31 December 2022. These services were to be in line with the terms and conditions of the existing Indue operational contract.

2.42 Extending the Indue contract was assessed as low risk due to the ongoing relationship and Indue’s proven capacity to deliver the services.

2.43 DSS’ evaluation of Indue’s tender found that Indue represented value for money and that there was likely to be an opportunity to realise savings as part of commercial negotiations. The value for money assessment included a comparative analysis of the pricing between the quote, the then contract and the proposed costs for 2020–21 and found there was no significant difference.

Procurement to expand CDC services in new sites and for shared services

2.44 On 29 January 2021, the delegate approved a limited tender procurement, again with Indue, for the expansion of CDC card and account provider services in the Northern Territory and Cape York region for the period 17 March 2021 to 31 December 2022. A request for quote was issued on 9 February 2021.

2.45 Indue responded in two parts: Part A was to secure a provider for the CDC and related services for the Northern Territory and Cape York; and Part B was for the provision of shared services for the Additional Issuer Trial.14

- Part A — the tender evaluation committee found that the pricing assessment was consistent with requirements and in line with existing Indue contract prices.

- Part B — the tender evaluation committee noted that although most of the required information was presented, some pricing information had been omitted and the required pricing template was not used. Initially, a partial value for money assessment was undertaken and DSS was not able to gather a full understanding of the costs and risks. The tender evaluation committee decided that an updated value for money and risk assessment be undertaken during contract negotiations. In December 2021, the response was determined to represent value for money.

2.46 The tender evaluation committee assessed Indue’s tender to be medium risk based on the considerations set out below.

- Services Australia would need to be involved in negotiations to ensure compliance with Services Australia’s service delivery processes.

- Indue would not provide information, correspondence or advice to participants in the Northern Territory about the CDC until Indue, DSS and Services Australia were satisfied it met all regulatory requirements. This was resolved during contract negotiations as Indue has a regulatory requirement to be compliant with consent requirements for participants before providing them with advice on the CDC.

- The short timeframe for commercial negotiations might impact DSS’ ability to attain savings on pricing.

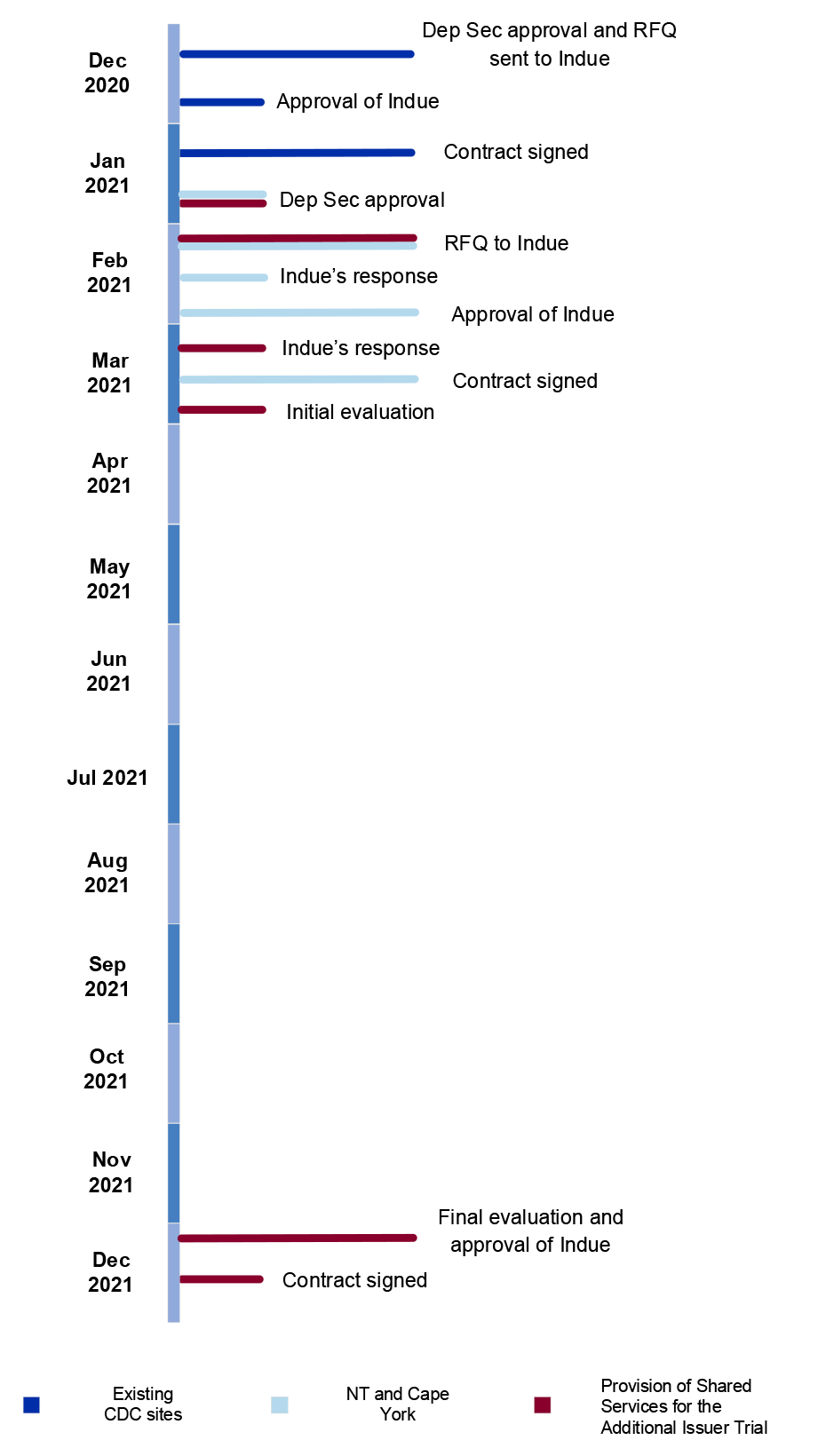

2.47 A timeline for the Indue procurements is depicted in Figure 2.1.

Figure 2.1: Procurement of Indue

Source: ANAO analysis.

2.48 DSS justified the sole provider approach for both procurements under Division 2, clause 10.3(g) of the Commonwealth Procurement Rules.15 This clause allows a limited tender in the case of a limited trial. The justification was that the CDC program was a limited trial to test the suitability of the service concept and its ability to achieve the program’s goals. After the passage of the amended CDC sections of the SSA Act in December 2020, which removed the term ‘trial’ and replaced it with ‘program’, DSS continued to justify a limited tender under clause 10.3(g) by stating that ‘the suitability of technology that underpins the CDC and its ability to achieve the program objectives will continue to be tested until 31 December 2022’.

2.49 The members of the tender evaluation committee identified no conflicts of interest with any part of the tenders and all declarations were appropriately documented.

Procurement of the Traditional Credit Union

2.50 In November 2017 the Minderoo Foundation16, in consultation with retail, banking, and payment organisations, compiled the Cashless Debit Card Technology Report with the aim of advising the Australian Government on ways to improve the technology model behind the CDC. The report found that there would be benefits in introducing additional CDC card issuers.

Feasibility study

2.51 In May 2020 DSS commissioned eftpos Australia to conduct a feasibility study into the potential enhancements to CDC participant experiences through having a choice of financial institutions, and the lowest cost and effort alternatives for financial institutions to implement the program.

2.52 eftpos Australia initially consulted five banking institutions (including Indue) to understand how best the industry could meet the requirements of the CDC. eftpos later engaged with three other banking institutions (including TCU).

2.53 The feasibility study presented three models for an additional card issuer:

- in-house solution — financial institutions would modify their own banking infrastructure to provide the CDC;

- modified basic banking — financial institutions utilise their own infrastructure with complex CDC-specific functions outsourced; and

- shared service model — shared service providers process CDC accounts on behalf of other financial institutions.

2.54 The study recommended that DSS develop a shared service model in the short-term as it requires less implementation time than creating a new card provider infrastructure. The shared services model would involve adding one or more card issuers onto Indue’s existing systems infrastructure. The report did not recommend a specific financial institution but focused on the feasibility of and options for a second card issuer.

2.55 The results of the feasibility study were sent to the Minister for Social Services in October 2020, with DSS recommending the Minister agree ‘to explore adding TCU as a CDC issuer’ in the Northern Territory. Although the briefing to the Minister noted that DSS was conducting market research with potential providers to look at options and costs for adding additional card issuers in the future, TCU was the only provider considered and recommended for the Northern Territory. The briefing did not mention plans to commission TCU to undertake a scoping study.

Scoping study

2.56 In November 2020 the Secretary of DSS approved a direct request for quote with TCU to undertake a multiple issuer scoping study. The purpose of the scoping study was to examine how best to add additional card issuers and deliver CDC banking services in regional and remote communities. The request for quote required ‘a comprehensive review of how a financial institution or issuer could work with the customer, Services Australia and the selected card provider to provide support to CDC participants’.

2.57 The final TCU report was provided to DSS on 1 February 2021 and met the requirements set out by DSS in the scoping study contract. The scoping study found that the delivery of the CDC by an additional issuer was feasible and would improve services to participants in regional and remote communities. The study also stated that a CDC and associated banking account could be developed as a product by small to medium-sized authorised deposit-taking institutions and would provide CDC participants with a greater choice of providers.

2.58 TCU stated in its final report that the study had limitations when discussing small to medium-sized authorised deposit-taking institutions, as the majority of the examples, experiences and learnings in the report were based specifically on TCU as the potential CDC issuer. Although DSS anticipated that the scoping study would identify other potential card issuers, this was not a requirement of the scoping study contract with TCU.

2.59 The delegate approved a limited tender procurement of TCU as a second card issuer on 29 January 2021, before the final scoping study report was provided on 1 February 2021. The DSS Secretary was advised on 5 February 2021 that ‘Traditional Credit Union has scoped this work and has concluded it is able to deliver this product’.

Limited tender for Additional Issuer Trial

2.60 On 10 February 2021, a request for quote was issued to TCU for the services of an additional card issuer to deliver the CDC and associated operational and support services to participants in the Northern Territory. DSS justified a limited tender approach for the Additional Issuer Trial in the Northern Territory because:

- of the trial nature of the activity (refer to paragraph 2.48); and

- it was procuring services from a small to medium-sized enterprise with at least 50 per cent Indigenous ownership17, noting TCU was the only Indigenous-led and owned provider in the Northern Territory with experience in delivering banking services to Indigenous customers in regional and remote communities.

2.61 DSS also noted that TCU had an existing relationship with Indue through the provision of banking systems infrastructure.

2.62 The procurement of an Indigenous enterprise for this activity was in line with the Indigenous Procurement Policy and whole-of-government efforts to increase procurements from Indigenous small to medium-sized enterprises. TCU’s Indigenous Participation Plan18 stated that TCU met the mandatory minimum requirements of the Indigenous Procurement Policy.

2.63 The procurement process for the second card issuer for the CDC, following the release of the request for quote, is set out below and summarised in Figure 2.2.

- A draft contract was provided to TCU as part of the request for tender. TCU expressed concern about meeting the tender requirements and timeframes. The tender closing date was extended and DSS withdrew the draft contract from the tender documentation with a view to negotiating the contract terms with TCU based on TCU’s capacity and capability to deliver the service.

- The DSS Procurement Branch advised the CDC program area that ‘a comprehensive value for money assessment could not be undertaken without an understanding of how the [TCU] proposal interacted with the likely conditions of the contract’. The tender evaluation report stated the following:

As the Committee considered that the impact on government policy objectives of delaying the procurement (e.g. by re-issuing documentation) was unacceptable, the Committee has instead adopted a recommendation proposing that the department proceed to negotiations with the Traditional Credit Union, and that once these negotiations are substantively complete and contract positions agreed, a value for money assessment will be made using the agreed contract terms and a further recommendation made to the delegate.

- The evaluation committee’s recommendation was approved by the delegate in March 2021.

- Upon receiving TCU’s response to the tender, the evaluation committee identified multiple weaknesses including a finding that some aspects of TCU’s tender response ‘reflected a lack of understanding of government requirements or the scale of changes that may be required in order for the Traditional Credit Union to meet those requirements’. DSS advised that the evaluation committee was unable to make a value for money assessment.

- Further negotiations led to TCU submitting a revised proposal. The price in the revised proposal was 20 per cent less than the first proposal, but 23 per cent more than the proposed budget approved by the delegate for the TCU procurement.19 After assessing the revised response, the evaluation committee recommended that the revised proposal represented value for money and DSS entered into contract negotiations. The value for money assessment was based on the draft contract rather than a costed proposal from TCU.

- During contract negotiations, it was recommended that the maximum contract value be reduced to reflect the reduction in time available for TCU to implement the service and the reduction in the number of CDC accounts that TCU had the capability to deliver. The delegate approved this change to the contract terms on 9 September 2021.
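The relative prices quoted in the list above imply how far TCU's first proposal sat above the approved budget. The following is a minimal sketch of that arithmetic, not ANAO analysis. It assumes both percentages are exact and refer to the same price basis. The budget is normalised to 1.0 because no dollar figures are given here, and the function name is illustrative only.

```python
def first_proposal_vs_budget(revised_below_first: float,
                             revised_above_budget: float) -> float:
    """Return the first proposal's price as a multiple of the approved budget.

    revised_below_first:  fraction by which the revised price is below the first (0.20)
    revised_above_budget: fraction by which the revised price exceeds the budget (0.23)
    """
    budget = 1.0  # normalised; actual dollar values are not stated in the text
    revised = budget * (1 + revised_above_budget)
    first = revised / (1 - revised_below_first)
    return first / budget


ratio = first_proposal_vs_budget(0.20, 0.23)
print(f"First proposal: about {ratio - 1:.0%} above the approved budget")
```

Under these assumptions the first proposal works out at roughly 54 per cent above the approved budget (1.23 / 0.80 = 1.5375).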

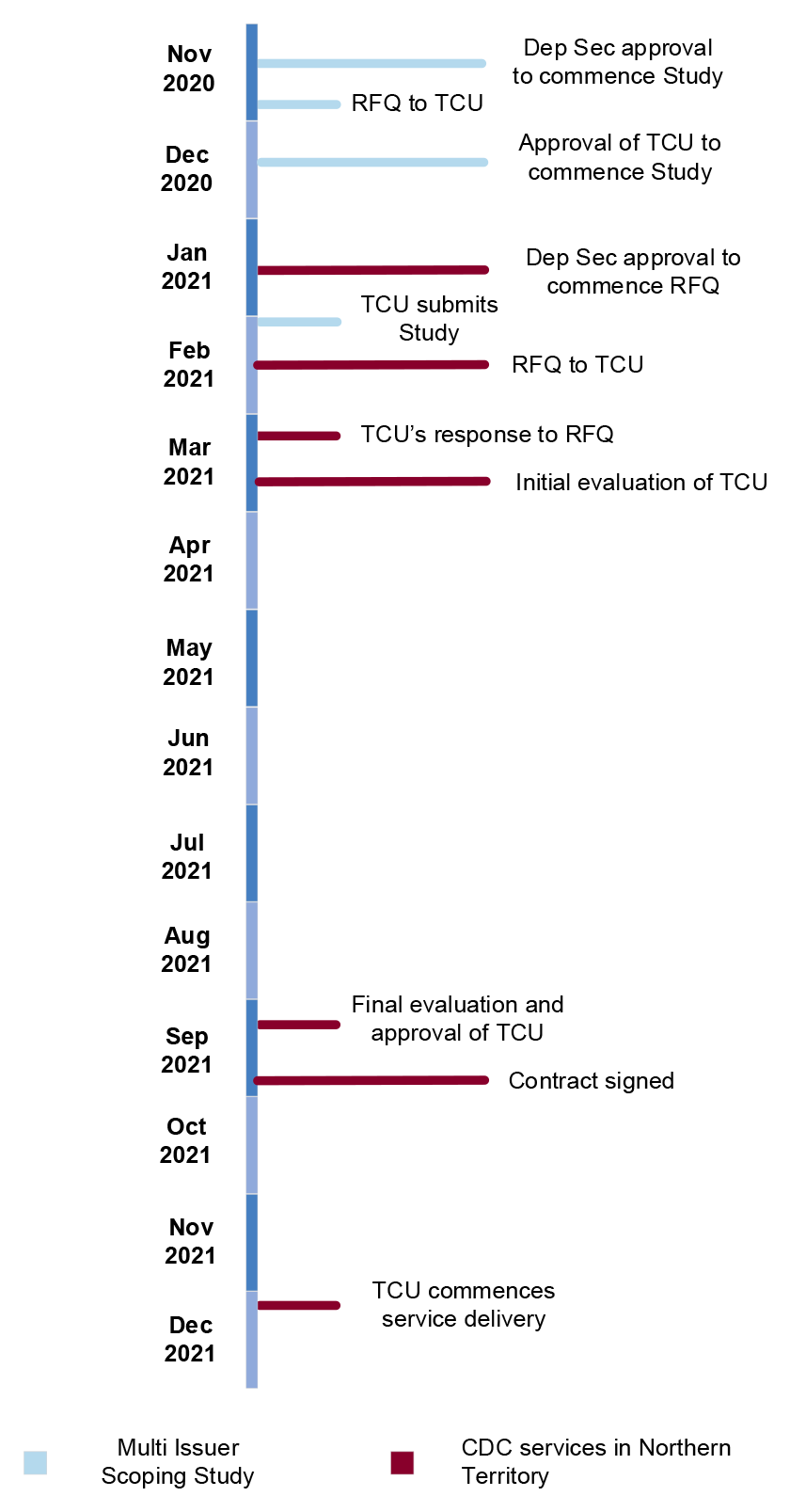

2.64 A timeline of the process for the procurement of TCU is depicted in Figure 2.2.

Figure 2.2: Procurement of TCU

Source: ANAO analysis.

2.65 In accordance with the Commonwealth Procurement Rules, a risk assessment of the limited tender procurement of TCU was undertaken in January 2021. It did not identify any high risks. A revised risk assessment undertaken in March 2021 identified the following high risks:

- TCU’s expectations of the contract process may not align with the Commonwealth’s expectations;

- TCU may not be sufficiently mature or resourced at present to meet additional preferred requirements of the Commonwealth; and

- supplier may be unwilling to accept standard contract terms (as reflected in the withdrawal of the draft contract from the tender documentation).

2.66 The mitigation strategies for these risks were addressed during contract negotiations. The mitigations primarily involved TCU engaging additional staff to meet the terms of the contract and service delivery requirements.

2.67 Each member of the tender evaluation committee signed a deed of confidentiality, which warranted that no conflicts of interest existed or were likely to arise. The Deputy Secretary approved the limited tender procurement. Separation of roles was appropriately managed. Final approval was given by an officer who did not participate in the tender evaluation.

Are there appropriate contract and service schedule management arrangements in place?

There are appropriate contract management and service delivery oversight arrangements in place for the CDC program. Effective contract management plans are in place for the contracts with the card providers. Documentation supporting the monitoring of Indue service delivery risk could be more regularly reviewed. In April 2022, DSS and Services Australia finalised a CDC service level agreement. This was established late in the relationship, which commenced in 2016.

Management of Indue and Traditional Credit Union contracts

Indue contract management

2.68 The contract management arrangements between DSS and Indue are underpinned by a Services Agreement; the latest contract variation; and a contract management plan.

2.69 The Services Agreement is the base contract that was established in 2016 between DSS and Indue for the implementation and operation of the CDC Trial. This agreement provides comprehensive details of the terms and conditions of the relationship, such as the statement of work, required service levels, respective roles and responsibilities and risk management. DSS has maintained records of variations to the Services Agreement, with the latest contract variation dated 5 December 2021.

2.70 DSS developed a contract management plan in September 2019 in response to the recommendation of Auditor-General Report No.1 2018–19. The contract management plan aligns with DSS’ contract management guidance and addresses the essential requirements of the guidance.

2.71 The contract management plan has been reviewed after each material variation to the contract. According to the contract management plan, changes to the plan need to be approved by the Cashless Welfare Policy and Technology Branch Manager. The contract management plan was updated in August 2021 after the ninth contract variation; however, the updates did not receive the required approvals. DSS advised in January 2022 that the contract management plan is a live document and the current version is being reviewed for amendments and will be progressed for approval.

2.72 The ANAO reviewed DSS’ contract management approaches against its contract management plan for Indue. DSS’ approach aligned with the requirements in the plan. DSS developed risk plans and treatments, held progress meetings and assessed non-compliance with service levels. DSS’ risk register for its contract with Indue has been in place since May 2019. The contract management plan required DSS to review the risk register by March 2020. This review date was not appropriately updated in subsequent versions of the contract management plan. DSS advised the ANAO in January 2022 that the CDC Compliance Section was in the process of reviewing the register.

2.73 Indue is also required to maintain a risk register for the delivery of banking services for the CDC program. Indue provided DSS with its risk register in July 2020 and the contract management plan stated that an updated version was to be requested by DSS from Indue in August 2021. In January 2022, DSS requested that Indue provide an updated risk register which was received by DSS in February 2022.

2.74 There are 10 service levels in the Services Agreement relating to:

- system availability;

- account creation, card production and account funding;

- call centre and disputed transactions;

- cardholder support; and

- reporting and publication.

2.75 The service levels include targets and the methodologies to measure achievement against the specified targets for each service level.

2.76 DSS monitors Indue’s performance against the service levels through a monthly program outcomes report provided by Indue. Indue’s results are assessed against the targets in the Services Agreement and are recorded as a ‘pass’ or ‘fail’ in a ‘service level tracker’. In accordance with the Services Agreement, when Indue fails to meet its service level targets, DSS applies a penalty to the next month’s invoice. The ANAO observed examples of this occurring.
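The pass/fail assessment described in paragraph 2.76 can be illustrated with a short sketch. This is not DSS' actual tracker. The service level names, target values, the assumption that a higher result is better, and the flat penalty per failed service level are all hypothetical, because the Services Agreement's targets and penalty formula are not reproduced in this report.

```python
from dataclasses import dataclass


@dataclass
class ServiceLevel:
    name: str
    target: float   # hypothetical minimum acceptable result (e.g. per cent)
    result: float   # value reported in the monthly program outcomes report


def track(levels):
    """Record each service level as 'pass' or 'fail' for one monthly report."""
    return {
        level.name: "pass" if level.result >= level.target else "fail"
        for level in levels
    }


def invoice_penalty(tracker, penalty_per_failure):
    """Total penalty to apply to the next month's invoice.

    Assumes a flat amount per failed service level (hypothetical).
    """
    failures = sum(1 for status in tracker.values() if status == "fail")
    return failures * penalty_per_failure


# Hypothetical monthly report
report = [
    ServiceLevel("system availability", target=99.5, result=99.8),
    ServiceLevel("call centre", target=90.0, result=85.0),
]
tracker = track(report)
penalty = invoice_penalty(tracker, penalty_per_failure=1000.0)
```

In this hypothetical month, ‘system availability’ is recorded as a pass and ‘call centre’ as a fail, so a single penalty amount would be carried to the next invoice.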

2.77 The CDC Compliance Section was established in November 2021 and meets monthly with Indue to discuss operational issues. Prior to the establishment of the CDC Compliance Section, other areas of the CDC branches met with Indue on either a regular or ad hoc basis. The types of issues discussed include: participant behaviours; card operations; information and data sharing; the Northern Territory transition; and relationship matters between DSS, Services Australia, Indue and TCU. There are also weekly meetings with Indue to discuss infrastructure and IT-related matters.

TCU contract management

2.78 The contract management arrangements between DSS and TCU are underpinned by a Services Agreement; Term Sheet (Deed) for the Additional Issuer Trial20 (superseded by a Deed of Collaboration in March 2022); and a contract management plan.

2.79 The Services Agreement for implementation and operational services for the Additional Issuer Trial was established in September 2021 with a start date of 6 December 2021. This includes providing CDC card and account services for up to 12,500 participants in the Northern Territory.21 The Services Agreement specifies the roles and responsibilities of TCU that need to be undertaken in accordance with the terms and conditions, pricing provisions and statement of work.

2.80 DSS developed a contract management plan that provides background to the contractual relationship with TCU, information about the Services Agreement and guidance for managing the contract. The contract management plan is compliant with DSS’ contract management guidance. Overall, it is an effective baseline contract management plan that addresses the requirements of the contract.

2.81 The contract with TCU sets out 11 service levels relating to:

- system availability and performance;

- account creation, card production and account funding;

- call centre and disputed transactions;

- cardholder support; and

- reporting.

2.82 Similar to the Indue service levels, the TCU contract establishes the methodologies to measure achievement against specified targets for each service level. TCU submits monthly reports to DSS on progress against the service levels. DSS and TCU hold weekly meetings with Indue and another weekly meeting is held between DSS, TCU and Services Australia to discuss issues relating to the Additional Issuer Trial.

Service monitoring arrangements with Services Australia

2.83 Upon commencement of the CDC Trial in 2016, Services Australia was responsible for identification of participants; switching participants on to the CDC; calculation of the quarantined amount; directing funds to restricted and unrestricted accounts; managing changes of circumstances that may impact CDC eligibility; and provision of data to DSS.

2.84 From 16 March 2020, Services Australia assumed additional responsibilities for CDC service delivery. These included: provision of digital and telephony channels; face-to-face services through Centrelink offices and remote servicing arrangements; and managing CDC exit applications and wellbeing exemptions. Appendix 3 provides a list of Services Australia’s CDC responsibilities.

2.85 The bilateral management arrangement between Services Australia and DSS — established under the Services Australia Bilateral Agreement Framework22 — consists of an overarching Statement of Intent (April 2018) and a set of protocols and agreements.23 The Statement of Intent is intended to support interactions between the two entities and sets out the principles, governance and management information for the entire bilateral relationship.

2.86 Under the Statement of Intent, services schedules are required to be established which set out the specific elements of the service being delivered, such as service level standards and responsibilities of each entity.

2.87 Prior to October 2021, there was no services schedule in place for the delivery of the CDC by Services Australia. In October 2021, a draft CDC program Letter of Exchange was prepared and signed. Under the Bilateral Agreement Framework, a Letter of Exchange is meant to be used as an interim arrangement while a more detailed services schedule is being negotiated, or where the service is short term.

2.88 The ANAO reviewed the Letter of Exchange against the requirements of the Bilateral Agreement Framework. The Letter of Exchange included the majority of the required elements with the exception of service level standards. On 1 April 2022, a Service Level Agreement was finalised between DSS and Services Australia. This replaces the Letter of Exchange and contains specific service level standards for the CDC program’s service delivery.

2.89 In terms of service levels, DSS and Services Australia advised that two measures were already being externally reported for the CDC program.

- The first measure is that determinations to exit the program be made no more than 60 days after the person submits a complete and correct exit application. This measure is legislated under subsection 124PHB(4A) of the SSA Act.24 High-level exit application information is published on data.gov.au.

- The second measure is in relation to Services Australia’s enterprise-level telephony service level standard, which is the average length of time a customer waits to have a call answered. The service level target is under 16 minutes. CDC Telephony Reports are provided to DSS on a weekly basis. Services Australia’s overall performance on its telephony service level is published in its annual reports.
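The two externally reported measures above reduce to simple threshold checks. The sketch below is illustrative only and is not how Services Australia calculates its results. It assumes the 60-day limit is counted in calendar days from the date a complete and correct application is submitted, and that the telephony measure is a simple mean of individual wait times. The function names are hypothetical.

```python
from datetime import date
from typing import Sequence

EXIT_DETERMINATION_LIMIT_DAYS = 60  # first measure: no more than 60 days
TELEPHONY_TARGET_MINUTES = 16       # second measure: average wait under 16 minutes


def exit_determination_on_time(application_date: date,
                               determination_date: date) -> bool:
    """True if the determination was made no more than 60 days after
    the complete and correct exit application was submitted
    (calendar days assumed)."""
    elapsed = (determination_date - application_date).days
    return elapsed <= EXIT_DETERMINATION_LIMIT_DAYS


def telephony_target_met(wait_minutes: Sequence[float]) -> bool:
    """True if the average call wait time is under the 16-minute target."""
    if not wait_minutes:
        raise ValueError("at least one call is required to compute an average")
    return sum(wait_minutes) / len(wait_minutes) < TELEPHONY_TARGET_MINUTES
```

For example, under these assumptions an application submitted on 1 January 2021 and determined on 2 March 2021 (60 days later) would meet the first measure, while a determination on 3 March 2021 would not.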

2.90 Operational matters for the CDC are overseen by the Cashless Welfare Working Group which comprises representatives from DSS and Services Australia. The group meets weekly to discuss CDC program-related matters, including service delivery and performance. The CDC Steering Committee oversees the relationship between DSS and Services Australia and receives updates from the Cashless Welfare Working Group, which primarily discusses operational issues.

3. Performance measurement and monitoring

Areas examined

This chapter examines whether the Department of Social Services (DSS) implemented effective performance measurement and monitoring processes for the Cashless Debit Card (CDC) program.

Conclusion